Introduction

Neo banks and FinTechs are scaling faster than any other sector in finance, yet their compliance teams remain a fraction of the size found at traditional banks. This structural gap between growth velocity and risk exposure is no longer a temporary startup problem. It's a permanent architecture challenge, and regulators are no longer tolerating it.

Consider the warning signs: the UK's FCA issued fines exceeding £124 million in 2025, with many penalties tied to AML failures at high-growth FinTechs. Starling Bank was fined £29 million after growing from 43,000 to 3.6 million customers without scaling its financial crime controls. The CFPB has issued explicit guidance that algorithmic complexity offers no relief from ECOA adverse action requirements. The regulatory arbitrage window has closed.

AI-powered risk management is the most viable path forward, but implementation determines whether it reduces exposure or creates new liability. What follows covers the risk landscape specific to FinTechs, the AI use cases with proven ROI, the governance pitfalls regulators are watching, and what a defensible compliance framework actually looks like in practice.

TLDR

- Neo banks face compounding risk: rapid user scaling, thin compliance teams, and tighter scrutiny from CFPB, OCC, FCA, and international regulators

- AI automates fraud detection, AML transaction monitoring, and regulatory reporting — cutting the cost and latency of manual compliance review

- The most dangerous risks are internal — biased training data, silent model drift, and opaque decision logic that can't survive a regulatory audit

- Effective governance requires explainability built into model design, structured human oversight for high-stakes decisions, continuous monitoring, and clear accountability ownership

Why Neo Banks and FinTech Face a Unique Risk Landscape

Neo banks and FinTechs face the same regulatory obligations as traditional banks — BSA/AML, KYC, fair lending, sanctions screening — but operate with compliance teams that are vastly smaller relative to their transaction volumes and customer growth rates. This structural gap doesn't shrink as companies scale — it widens, making a technology-first compliance architecture a necessity, not an option.

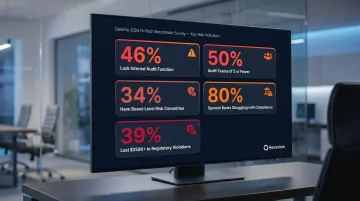

Deloitte's 2024 FinTech Benchmark Survey of 100 companies found that the compliance gaps across the sector run deep:

- 46% of early-stage fintechs have no internal audit function at all

- 50% of those with audit teams employ five or fewer people

- Only 34% have a dedicated risk committee at the board level

- 80% of sponsor banks struggle to meet compliance requirements

- 39% have lost at least $250,000 due to compliance violations

Growth velocity compounds risk exposure. A FinTech onboarding tens of thousands of customers per week generates more fraud vectors, compliance events, and reporting obligations than a slower-growing institution. Starling Bank's £29 million fine illustrates this: the bank's automated sanctions screening system covered only a fraction of the UK financial sanctions list, and the bank opened over 54,000 high-risk accounts between September 2021 and November 2023. The FCA called the controls "shockingly lax."

The regulatory arbitrage window that allowed early FinTechs to operate in gray zones has closed. Post-2020 enforcement actions against major neo banks, the EU's AI Act classifying credit scoring AI as high-risk, and new OCC/CFPB guidance on algorithmic decision-making all signal that building fast and complying later is no longer viable. FinTechs must architect risk management into their growth stack from the beginning.

That tightening extends to new entrants as well. The OCC conditionally approved five new national trust bank charters in December 2025, including digital-first entities, confirming that regulators are applying full traditional banking standards to FinTech business models at scale.

How AI Is Transforming Core Risk Management for FinTech

Credit Risk Assessment

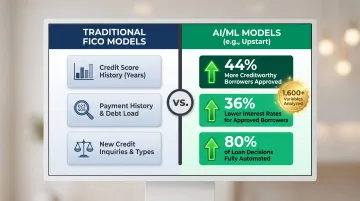

ML models go beyond FICO scores by analyzing behavioral data, transaction patterns, repayment history, and alternative data sources such as device usage and app engagement patterns. This enables FinTechs to extend credit responsibly to thin-file and underbanked customers while maintaining risk controls, giving them a meaningful edge over traditional lenders.

Approximately 32 million American adults are "unscoreable" according to the Federal Reserve, with 7 million credit invisible and 25 million carrying thin files. FinRegLab's July 2025 research found that ML models "substantially outperformed" traditional logistic regression methods across all data types.

The two strongest ML models increased credit approvals by approximately 4% at risk cutoffs common to mainstream lenders. Extrapolated to 2023 market data, that translates to roughly 2 million additional credit card accounts and 152,000 additional mortgages.

Upstart uses 1,600+ variables in its ML credit model and reports 44% more creditworthy borrowers approved compared to FICO-based models, with 36% lower interest rates for approved borrowers and 80% of loan decisions fully automated.

Fraud Detection and Transaction Monitoring

AI-powered anomaly detection processes thousands of transactions per second and flags suspicious patterns in real time. Rule-based systems can't match this: they require manual threshold updates and miss fast-moving fraud vectors. Adaptive ML models continuously learn from new fraud patterns, reducing false positive rates while improving detection coverage.

PayPal's ML fraud system demonstrates this at scale. According to PayPal's 2024 10-K filing, the company's transaction loss rate declined from 0.09% in 2022 to 0.07% in 2024, even as total payment volume grew to $1.68 trillion and transaction count reached 26.3 billion annually.

To support that scale, PayPal processes approximately 60 billion database queries per day across its risk infrastructure, with roughly 75% of calls completed under 50 milliseconds.
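To make the contrast with static rules concrete, here is a minimal sketch of an adaptive detector. It keeps a running mean and variance of one customer's transaction amounts (Welford's online algorithm) and flags amounts that sit far outside that history. The class name, the 3.0 cutoff, and the warm-up count are illustrative assumptions, and a production fraud model would use far more signals than the amount alone.

```python
import math


class RunningAnomalyDetector:
    """Flags amounts far from a customer's running mean (hypothetical sketch)."""

    def __init__(self, z_threshold: float = 3.0, warmup: int = 5):
        self.z_threshold = z_threshold  # assumed cutoff, tune on real data
        self.warmup = warmup            # observations needed before flagging
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0                   # running sum of squared deviations

    def score(self, amount: float) -> float:
        """Return the z-score of `amount` against the history seen so far."""
        if self.n < self.warmup:
            return 0.0
        std = math.sqrt(self.m2 / (self.n - 1))
        # Limitation: a perfectly constant history yields no score at all.
        return 0.0 if std == 0 else abs(amount - self.mean) / std

    def update(self, amount: float) -> None:
        """Fold a new observation into the running statistics (Welford)."""
        self.n += 1
        delta = amount - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (amount - self.mean)

    def observe(self, amount: float) -> bool:
        """Score first, then learn, so an outlier cannot mask itself."""
        flagged = self.score(amount) > self.z_threshold
        self.update(amount)
        return flagged


detector = RunningAnomalyDetector()
history = [42.0, 38.5, 40.0, 45.0, 39.5, 41.0]
flags = [detector.observe(a) for a in history]
large_transfer_flagged = detector.observe(2500.0)
```

Because the baseline updates with every transaction, the threshold moves with the customer's behavior instead of waiting for someone to retune it by hand.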

AML and Financial Crime Analytics

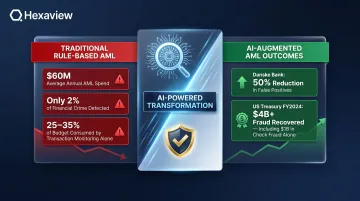

Traditional rule-based AML systems generate very high false positive alert rates that overwhelm compliance teams and create operational bottlenecks. AI-based transaction monitoring contextualizes individual transactions against full customer behavioral profiles, peer group benchmarks, and known typologies, which reduces noise substantially while improving detection of genuine suspicious activity.

Financial institutions spend an average of $60 million annually on AML compliance, with transaction monitoring consuming 25-35% of that budget. Yet the industry detects only about 2% of global financial crime flows, despite spending increases of up to 10% per year in some markets.

AI-driven systems address this gap directly. Danske Bank achieved a 50% false-positive reduction in fraud alerts using AI. The US Treasury's own ML systems recovered over $4 billion in fraud and improper payments in Fiscal Year 2024, including $1 billion in check fraud recovery.
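The idea of contextualizing a transaction against the customer's own profile and a peer group can be sketched in a few lines. The example below compares a flat amount rule with a check that alerts only when an amount is unusual for both the customer and comparable accounts. The function names, the 5x ratio cutoff, and the sample data are assumptions for illustration, and any statutory reporting thresholds would still apply regardless of what a model says.

```python
from statistics import median


def flat_rule_alert(amount: float, threshold: float = 10_000.0) -> bool:
    """Static rule: alert on any amount at or above a fixed threshold."""
    return amount >= threshold


def contextual_alert(amount, customer_history, peer_amounts, ratio_cutoff=5.0):
    """Alert only when the amount is unusual for this customer AND for peers.

    ratio_cutoff is an assumed illustrative value, not a regulatory figure.
    """
    own_ratio = amount / median(customer_history)
    peer_ratio = amount / median(peer_amounts)
    return own_ratio >= ratio_cutoff and peer_ratio >= ratio_cutoff


# A wholesale importer that routinely moves five-figure sums.
importer_history = [12_000.0, 15_500.0, 11_000.0, 14_200.0]
importer_peers = [9_000.0, 13_000.0, 16_000.0, 12_500.0]

# A student account with small, regular spending.
student_history = [25.0, 40.0, 18.0, 32.0]
student_peers = [30.0, 22.0, 45.0, 28.0]

importer_flat = flat_rule_alert(13_000.0)
importer_ctx = contextual_alert(13_000.0, importer_history, importer_peers)
student_flat = flat_rule_alert(4_000.0)
student_ctx = contextual_alert(4_000.0, student_history, student_peers)
```

In this toy data the flat rule alerts on the importer's routine payment and misses the student's out-of-pattern transfer, while the contextual check does the opposite.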

NLP for Regulatory and Market Risk Signals

Natural Language Processing enables FinTechs to monitor regulatory filings, news, earnings data, and financial forums in real time, providing early-warning signals for market shifts, emerging enforcement trends, or sector-level liquidity stress. Instead of responding to regulatory changes after the fact, compliance teams get advance visibility before exposure widens.

NLP systems can handle tasks that would otherwise require large compliance teams:

- Parse thousands of regulatory updates across jurisdictions automatically

- Map new requirements to internal policies and flag gaps

- Track enforcement trends across agencies (SEC, CFPB, OCC, state regulators)

- Monitor financial news and filings for early liquidity or counterparty risk signals

This matters most for FinTechs operating across state lines or internationally, where regulatory requirements shift frequently and silently.

Cyber Risk Detection

AI-powered behavioral analysis identifies anomalous internal and external access patterns before they escalate into breaches. Traditional signature-based security only catches known threats — novel attack vectors slip through undetected.

For FinTechs handling sensitive financial data, cyber risk spans operational, reputational, and compliance exposure at once. AI-driven systems monitor user behavior patterns, detect privilege escalation attempts, and flag unusual data access or exfiltration in real time. A single undetected breach can trigger regulatory scrutiny, customer attrition, and remediation costs that dwarf the investment in prevention.

AI-Driven Compliance Automation: From Manual Burden to Real-Time Control

KYC and Automated Customer Onboarding

AI automates document validation, identity verification, and dynamic risk scoring during onboarding — enabling FinTechs to onboard customers at digital scale while maintaining compliance with KYC and CDD requirements.

Banks spend an average of $60 million annually on KYC compliance and take an average of 48 days to onboard a new business client, contacting them an average of 8 times during the process. 89% of corporate treasurers report a "bad experience" with the KYC process, and 13% have changed banks because of it.

Manual KYC processes cost $50–100 per customer. AI-automated systems cut both processing time and cost substantially while improving accuracy and customer experience.

RegTech and Multi-Jurisdictional Compliance

Reducing onboarding friction is only part of the compliance equation. For FinTechs operating across state lines or internationally, keeping pace with regulatory change is an equally demanding problem.

ML and NLP can parse regulatory updates across jurisdictions, map new requirements to internal policies, and surface compliance gaps automatically — work that manual tracking handles slowly and inconsistently.

The EU AI Act classifies credit scoring and creditworthiness assessment AI as high-risk under Annex III, while explicitly exempting fraud detection. FinTechs operating in multiple jurisdictions must maintain different governance standards for different use cases of the same underlying technology.

Sanctions Screening and Risk Scoring

Contextual AI reduces the false positive problem in OFAC and sanctions screening by applying entity resolution and contextual intelligence to names, geographies, and transaction relationships — cutting the volume of manual reviews needed while preserving regulatory defensibility.

Starling Bank's £29 million fine demonstrates the cost of getting sanctions screening wrong. The bank's automated system screened against only a fraction of the UK sanctions list, and the bank opened over 54,000 high-risk accounts in breach of regulatory requirements.

Modern AI sanctions screening combines name-matching algorithms, contextual analysis, and behavioral profiling to improve accuracy. Descartes Visual Compliance, for instance, demonstrated a 20%+ reduction in false positive rates using AI-assisted screening.
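A simplified view of how name matching and contextual analysis combine: the sketch below normalizes names, scores similarity with Python's standard `difflib.SequenceMatcher`, and then uses a known, conflicting birth year to rule out namesakes. The watchlist entries are fictional, the 0.85 cutoff is an assumption, and a real program would more likely route a ruled-out match to a lower-priority queue than discard it outright.

```python
import unicodedata
from difflib import SequenceMatcher


def normalize(name: str) -> str:
    """Lowercase, strip accents and punctuation, and sort the name tokens."""
    decomposed = unicodedata.normalize("NFKD", name)
    no_accents = "".join(c for c in decomposed if not unicodedata.combining(c))
    cleaned = "".join(c if c.isalnum() or c.isspace() else " " for c in no_accents)
    return " ".join(sorted(cleaned.lower().split()))


def name_similarity(a: str, b: str) -> float:
    """Similarity in [0, 1] between two normalized names."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio()


def screen(customer: dict, watchlist: list, name_cutoff: float = 0.85) -> list:
    """Return entries that match on name and are not ruled out by context."""
    hits = []
    for entry in watchlist:
        if name_similarity(customer["name"], entry["name"]) < name_cutoff:
            continue
        # Contextual check: a known, conflicting birth year rules the match out.
        both_known = customer.get("birth_year") and entry.get("birth_year")
        if both_known and customer["birth_year"] != entry["birth_year"]:
            continue
        hits.append(entry)
    return hits


# Entirely fictional watchlist entries, for illustration only.
watchlist = [
    {"name": "José Alvarez-Marín", "birth_year": 1961},
    {"name": "Ivan Petrovich Sidorov", "birth_year": 1975},
]

same_person = {"name": "Alvarez Marin, Jose", "birth_year": 1961}
namesake = {"name": "Jose Alvarez Marin", "birth_year": 1994}
unrelated = {"name": "Maria Jensen", "birth_year": 1988}

same_person_hits = screen(same_person, watchlist)
namesake_hits = screen(namesake, watchlist)
unrelated_hits = screen(unrelated, watchlist)
```

Name matching alone would alert on both the true match and the namesake. The contextual step is what removes the second alert from the analyst's queue.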

For neo banks building these pipelines from scratch, implementation approach matters as much as the underlying technology. Hexaview Technologies (SOC 2 Type 2 certified) helps FinTechs design and deploy compliance pipelines that are auditable and built to meet regulatory standards at production scale.

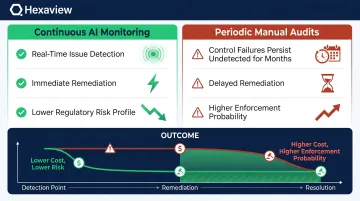

Continuous Compliance Monitoring

AI enables a fundamental shift in how compliance is monitored. Instead of point-in-time audits, teams get real-time detection of policy violations, anomalous data patterns, and control failures — before they surface in an enforcement action.

The difference in risk exposure is significant:

- Continuous AI monitoring: Issues flagged in real time, remediated before regulatory impact

- Periodic manual audits: Control failures can persist undetected for months

- Outcome: Earlier detection directly reduces the probability of enforcement and the cost of remediation

A FinTech that deploys continuous compliance monitoring isn't just more efficient — it's operating with a materially lower regulatory risk profile.

The Real Risks of Deploying AI in Risk Systems

The "black box" problem creates serious regulatory exposure. Advanced ML models can achieve high accuracy while offering no interpretable reasoning for individual decisions, yet explanation is legally required in adverse action notices under ECOA and expected in the model documentation that Basel standards and supervisory guidance call for.

CFPB Circular 2022-03 states explicitly that creditors cannot use black-box models when doing so prevents providing specific and accurate reasons for adverse actions. In the circular's words: "A creditor's lack of understanding of its own methods is therefore not a cognizable defense against liability."

Explainable AI tools such as SHAP values and feature importance rankings are transitioning from optional to baseline requirements for risk-facing AI systems.
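For intuition, here is what a feature-level explanation looks like in the simplest case. In a linear scorecard, each feature's contribution to one applicant's score is its coefficient times the applicant's distance from a baseline, which is the same quantity SHAP reports for linear models under a feature-independence assumption. The coefficients, baseline values, and feature names below are hypothetical, and a nonlinear model would need a dedicated explainer instead of this arithmetic.

```python
# Hypothetical scorecard: coefficients on the log-odds of approval.
COEFFICIENTS = {
    "months_since_last_delinquency": 0.04,
    "credit_utilization_pct": -0.03,
    "income_thousands": 0.02,
    "recent_hard_inquiries": -0.25,
}

# Assumed portfolio averages used as the comparison baseline.
BASELINE = {
    "months_since_last_delinquency": 36.0,
    "credit_utilization_pct": 30.0,
    "income_thousands": 60.0,
    "recent_hard_inquiries": 1.0,
}


def contributions(applicant: dict) -> dict:
    """Per-feature shift in log-odds relative to the baseline applicant."""
    return {
        name: COEFFICIENTS[name] * (applicant[name] - BASELINE[name])
        for name in COEFFICIENTS
    }


def adverse_action_reasons(applicant: dict, top_n: int = 2) -> list:
    """Features that pushed the score down the most, worst first."""
    contrib = contributions(applicant)
    negative = [(name, value) for name, value in contrib.items() if value < 0]
    negative.sort(key=lambda item: item[1])
    return [name for name, _ in negative[:top_n]]


applicant = {
    "months_since_last_delinquency": 6.0,
    "credit_utilization_pct": 85.0,
    "income_thousands": 72.0,
    "recent_hard_inquiries": 4.0,
}

reasons = adverse_action_reasons(applicant)
```

Here the two largest negative contributions are credit utilization and the recent delinquency, which is the kind of specific reason an adverse action notice needs to state.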

Data quality and silent model drift pose an equally serious threat. AI models are only as reliable as their training data: biased or incomplete historical data can encode discriminatory patterns into lending or fraud decisions without any visible signal. Model drift compounds this — when the statistical relationship between input features and outcomes shifts over time, previously well-performing models degrade quietly, often before any alert fires.

The Apple Card investigation illustrates this risk. In November 2019, Steve Wozniak publicly reported that the algorithm gave him 10x the credit limit of his wife despite shared finances. The NYDFS investigation found no fair lending violations, but stressed the need for ongoing algorithmic auditing.

Scale transforms individual errors into systemic ones. Unlike manual review, where a mistake affects one case at a time, a miscalibrated AI model can replicate the same mistake across millions of decisions before anyone detects a pattern.

Addressing these risks requires more than periodic model reviews. FinTechs need:

- Real-time performance monitoring dashboards tracking accuracy, fairness metrics, and score distributions

- Circuit-breaker protocols that halt model outputs automatically when anomalous behavior is detected

- Retraining pipelines triggered by drift thresholds, not calendar dates

- Audit trails linking every decision to the model version and feature set used
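Two of the controls above, the circuit breaker and the audit trail, fit naturally in one small component. The sketch below logs every decision with its model version and feature names, and stops releasing model outputs once the recent approval rate leaves an expected band. The class name, the 30% to 70% band, the window size, and the version string are all assumptions for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ModelCircuitBreaker:
    """Withholds model outputs when the recent approval rate looks anomalous."""
    model_version: str
    low: float = 0.30     # assumed lower bound on the approval rate
    high: float = 0.70    # assumed upper bound on the approval rate
    window: int = 10      # number of recent automated decisions to evaluate
    tripped: bool = False
    audit_log: list = field(default_factory=list)

    def record(self, decision_id: str, features: dict, approved: bool) -> str:
        """Log the decision with its model version and features, then re-check."""
        if self.tripped:
            outcome = "manual_review"  # model output is withheld once tripped
        else:
            outcome = "approved" if approved else "declined"
        self.audit_log.append({
            "decision_id": decision_id,
            "model_version": self.model_version,
            "feature_names": sorted(features),
            "outcome": outcome,
        })
        self._check_approval_rate()
        return outcome

    def _check_approval_rate(self) -> None:
        automated = [e for e in self.audit_log if e["outcome"] != "manual_review"]
        recent = automated[-self.window:]
        if len(recent) < self.window:
            return  # not enough data to judge yet
        rate = sum(e["outcome"] == "approved" for e in recent) / self.window
        if not self.low <= rate <= self.high:
            self.tripped = True


breaker = ModelCircuitBreaker(model_version="credit-v1.4.2")
outcomes = [
    breaker.record(f"d{i}", {"income": 50_000, "utilization": 0.4}, approved=True)
    for i in range(11)
]
```

Ten straight approvals push the rate to 100%, outside the assumed band, so the eleventh decision is routed to manual review instead of being released. A real deployment would also need an explicit, logged reset procedure.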

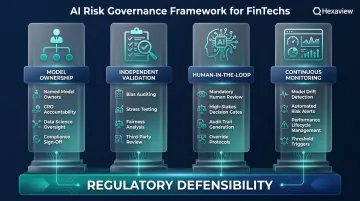

Building an AI Risk Governance Framework That Works

Model Ownership and Accountability Structure

Every AI model in the risk stack must have a named owner accountable for its accuracy, fairness, regulatory defensibility, and ongoing monitoring. Structured governance handoffs between the CRO, data science teams, and compliance functions need to be documented and enforced — not left as shared responsibility that falls to no one.

Governance frameworks should clearly define:

- Who owns model performance and retraining decisions

- Who validates model outputs before deployment

- Who responds when model performance degrades

- Who interfaces with regulators during examinations

Independent Model Validation and Bias Auditing

A rigorous model validation program for FinTech AI systems includes:

- In-sample/out-of-sample performance testing

- Fairness and disparate impact analysis

- Adversarial stress testing for unusual market conditions

- Documentation standards for regulatory review
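One common first-pass check for the disparate impact item is the adverse impact ratio, often paired with the "four-fifths" rule of thumb: a group's approval rate divided by the reference group's rate, with values under 0.8 flagged for closer review. The heuristic comes from US employment selection guidelines and is a screening signal only, not a legal safe harbor. The group labels and counts below are made up.

```python
def adverse_impact_ratios(approvals: dict, reference_group: str) -> dict:
    """Map each group to its approval rate divided by the reference group's rate.

    `approvals` maps group name -> (approved_count, total_applicants).
    """
    ref_approved, ref_total = approvals[reference_group]
    ref_rate = ref_approved / ref_total
    return {
        group: (approved / total) / ref_rate
        for group, (approved, total) in approvals.items()
    }


def flag_groups(ratios: dict, cutoff: float = 0.8) -> list:
    """Groups whose ratio falls below the four-fifths screening cutoff."""
    return [group for group, ratio in ratios.items() if ratio < cutoff]


# Fabricated counts for illustration only.
approvals = {
    "group_a": (480, 800),  # 60% approval rate, used as the reference
    "group_b": (270, 600),  # 45% approval rate
    "group_c": (330, 600),  # 55% approval rate
}

ratios = adverse_impact_ratios(approvals, reference_group="group_a")
flagged = flag_groups(ratios)
```

A flagged ratio is a prompt for deeper analysis, such as controlling for legitimate credit factors, before drawing any conclusion about the model.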

The OCC, Federal Reserve, and FDIC issued revised Model Risk Management guidance on April 17, 2026, superseding SR 11-7. Key changes include a narrower definition of "model," explicit exclusion of generative and agentic AI from scope, adoption of a $30 billion asset threshold for proportionality, and a shift toward principles-based rather than prescriptive practices.

This guidance adapts traditional model risk management to ML-specific failure modes that legacy validation frameworks were not built to catch.

Human-in-the-Loop for High-Stakes Decisions

Governance frameworks must designate categories of decisions (large credit approvals, escalated fraud flags, sanctions hits, customer disputes) where human review of AI outputs is mandatory rather than optional.

This oversight layer builds institutional knowledge over time, creating feedback loops that improve model performance and regulatory defensibility together. It also produces the audit trail regulators expect when examining high-stakes decision systems.
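In code, a mandatory-review policy can be as plain as a routing function that sits between the model and the decision. The sketch below uses the four categories named above. The `Decision` type, the 50,000 credit threshold, and the queue names are hypothetical, and the actual policy values would come from the institution's risk committee.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    kind: str              # "credit", "fraud", "sanctions", or "dispute"
    amount: float = 0.0
    escalated: bool = False


# Assumed policy value, not a regulatory figure.
LARGE_CREDIT_AMOUNT = 50_000.0


def requires_human_review(d: Decision) -> bool:
    """Return True for decision categories where review is mandatory."""
    if d.kind == "sanctions":
        return True                            # every sanctions hit goes to an analyst
    if d.kind == "dispute":
        return True                            # customer disputes are never auto-closed
    if d.kind == "fraud":
        return d.escalated                     # only escalated fraud flags
    if d.kind == "credit":
        return d.amount >= LARGE_CREDIT_AMOUNT
    return True                                # unknown categories fail safe to review


def route(d: Decision) -> str:
    """Name the queue a decision should be sent to."""
    return "human_review_queue" if requires_human_review(d) else "auto_decision"


small_loan = route(Decision("credit", amount=5_000.0))
large_loan = route(Decision("credit", amount=75_000.0))
sanctions_hit = route(Decision("sanctions"))
routine_fraud_flag = route(Decision("fraud", escalated=False))
unknown_kind = route(Decision("crypto_withdrawal"))
```

Keeping the policy in one explicit function, with unknown categories defaulting to review, makes it easy to show an examiner exactly which decisions a human must see.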

FinTechs building this infrastructure without established risk management departments often move faster by partnering with a specialized AI engineering firm. Hexaview Technologies has over a decade of capital markets and FinTech AI experience, helping clients design risk systems with built-in auditability, explainability, and compliance controls.

Continuous Monitoring and Lifecycle Management

A production-grade AI monitoring program:

- Tracks accuracy, drift metrics, and fairness measures in real time

- Triggers automated alerts and escalation procedures when performance degrades

- Schedules governance reviews that treat model performance as a continuous risk, not a one-time deployment decision

AI risk does not end at model launch. New data, shifting user behavior, and changing market conditions introduce drift over time, which is why ongoing investment in monitoring infrastructure is non-negotiable.
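One widely used drift metric is the Population Stability Index (PSI), which compares the binned distribution of model scores in production against the distribution seen at training time. The sketch below is a minimal version. The bin counts are invented, and the 0.1 and 0.25 cutoffs are commonly cited rules of thumb, not regulatory thresholds.

```python
import math


def psi(expected_counts, actual_counts, eps=1e-6):
    """Population Stability Index between two binned score distributions."""
    exp_total = sum(expected_counts)
    act_total = sum(actual_counts)
    total = 0.0
    for e, a in zip(expected_counts, actual_counts):
        e_pct = max(e / exp_total, eps)  # eps guards against log(0) on empty bins
        a_pct = max(a / act_total, eps)
        total += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return total


def drift_status(value, warn=0.1, act=0.25):
    """Translate a PSI value into an action label (assumed thresholds)."""
    if value >= act:
        return "retrain_review"
    if value >= warn:
        return "investigate"
    return "stable"


# Score counts per bin, lowest to highest score band (fabricated).
training = [100, 200, 400, 200, 100]
last_month = [105, 195, 410, 190, 100]
this_month = [300, 300, 250, 100, 50]

last_month_status = drift_status(psi(training, last_month))
this_month_status = drift_status(psi(training, this_month))
```

With these numbers, last month's distribution is effectively unchanged, while this month's shift toward the low score bands produces a PSI of roughly 0.43, well past the assumed action threshold.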

The EU AI Act's Article 26 requires deployers of high-risk AI systems to monitor their operation in line with the provider's instructions for use, aligning regulatory expectations with operational best practices.

Frequently Asked Questions

How does AI improve risk management in banks?

AI improves risk management by enabling real-time processing of large datasets for credit scoring, fraud detection, AML monitoring, and cyber risk identification. It delivers higher predictive accuracy, faster response times, and lower operational costs than traditional rule-based systems.

How is AI impacting the banking industry?

AI is transforming credit decisioning, compliance automation, customer onboarding, and operational efficiency across both traditional banks and neo banks. It also introduces new governance and model risk challenges that institutions must actively manage through structured oversight and continuous monitoring.

What is the US Treasury AI risk management framework?

The US Treasury released its Financial Services AI Risk Management Framework in February 2026, including 230 control objectives for financial institutions. It emphasizes model transparency, accountability, data governance, and human oversight in high-stakes AI-driven financial decisions.

What are the 5 C's of risk management?

The 5 C's traditionally refer to Character, Capacity, Capital, Collateral, and Conditions in lending-specific risk assessment. AI augments rather than replaces this structured framework by providing data-driven insights into each dimension while maintaining human oversight for final credit decisions.

What compliance challenges are unique to neo banks and FinTech companies?

Neo banks face the same regulatory obligations as traditional banks — KYC, AML, fair lending, sanctions — but with smaller compliance teams, faster growth rates, and less institutional compliance infrastructure. This makes AI-powered automation not just an efficiency play but a structural necessity to maintain regulatory compliance at scale.

How do neo banks ensure AI models stay compliant with changing regulations?

Compliant AI governance requires continuous model monitoring for drift and bias, NLP-driven regulatory change tracking, regular independent model validation, and explainable AI documentation. These controls must satisfy evolving requirements from regulators including the CFPB, OCC, FCA, and international equivalents.