Introduction

Hedge funds and FinTech firms face a fundamental tension: the AI models driving their most critical decisions—credit risk assessments, fraud detection, portfolio allocation—are often impossible to explain to risk committees, regulators, or investors. A gradient boosting model may predict loan defaults with 93% accuracy, yet when a credit decision is challenged or a regulatory audit begins, the institution cannot articulate why that specific borrower was flagged. This opacity is no longer just a technical limitation—it has become a systemic risk.

That systemic risk is accelerating. Algorithmic decision-making in financial services has consistently outpaced the industry's ability to audit or justify those decisions. The Financial Stability Board has flagged this directly: "the lack of interpretability or 'auditability' of AI and machine learning methods could become a macro-level risk." Widespread use of opaque models, it warns, "may result in unintended consequences."

When a model-driven drawdown cannot be explained to limited partners, or when a fraud alert lacks a transparent rationale, institutional credibility erodes—regardless of performance.

TL;DR

- Opaque AI creates regulatory, operational, and reputational risk in finance

- Post-hoc explainable AI (XAI) can preserve black-box accuracy while keeping model logic auditable and defensible

- SHAP, LIME, and interpretable-by-design models enable auditable risk workflows

- GDPR, the EU AI Act, and supervisory guidance increasingly mandate or imply XAI

- Lasting compliance takes a phased roadmap — governance, tooling, and audit trails — not a single vendor solution

The Black-Box Problem: Why Opaque AI Is a Liability in Finance

Complex machine learning models—neural networks, XGBoost, deep ensembles—consistently outperform simpler alternatives in prediction tasks. But their internal logic cannot be easily traced, creating a critical flaw in regulated environments where every automated decision may face scrutiny.

That trade-off is not theoretical. Financial institutions face a compounding choice: accept the weaker predictive performance of simpler models, or carry the operational and regulatory exposure that comes with decisions no one can explain.

Black-box AI introduces specific failure modes in finance:

- Inability to explain credit denials – The Equal Credit Opportunity Act requires adverse action notices that state specific reasons for a denial, and GDPR gives individuals rights to contest solely automated decisions

- Undetectable model bias – Hidden discrimination in lending or hiring can persist unnoticed without feature-level transparency

- Failure to catch distributional drift – Market regime changes may degrade model performance invisibly when internal logic is opaque

- No audit trail for algorithmic trading – Regulators and compliance officers cannot validate trading signals generated by unexplainable models

The Financial Stability Board's 2017 report on AI in financial services makes the systemic stakes explicit. Beyond the interpretability warning quoted above, the FSB notes that black-box concerns in credit scoring and trading contexts complicate supervision and auditability. More recently, the CFTC Technology Advisory Committee cited the 2012 Knight Capital incident as a cautionary example underscoring the need for rigorous oversight, documentation, and audit trails for automated trading systems.

For hedge funds specifically, the explainability burden extends beyond regulation. Limited partner trust and investor reporting requirements demand that model-driven drawdowns or risk flags be explained in plain language.

If a quantitative strategy triggers a stop-loss due to an AI-generated signal, and the portfolio manager cannot articulate the underlying drivers to the board or LPs, institutional credibility suffers—regardless of long-term alpha generation. Explainability, in that context, is not a compliance checkbox. It is a condition of investor confidence.

How XAI Fits into the Risk Management Workflow

XAI does not replace the predictive model; it augments it with an interpretive layer. This distinction matters because the explanatory step sits after prediction—meaning institutions retain the performance advantages of complex models while gaining the audit-readiness that regulators and LPs require. The typical financial risk pipeline integrates explainability as follows:

- Model training – Select and train the predictive model (XGBoost, neural network, deep ensemble) using historical financial data and validated feature sets

- Prediction output – Generate risk scores, fraud flags, or portfolio recommendations at scale, with confidence intervals where applicable

- Explanation layer – Apply SHAP values, LIME, or intrinsically interpretable models to decompose each prediction into its contributing features

- Human-in-the-loop review – Risk officers or compliance teams review feature attributions, challenge anomalous outputs, and validate decisions before action

- Decision logging – Record feature attributions, decision rationale, and reviewer sign-off to create defensible audit trails for regulators and LPs

This workflow enables what academics call "personalized" or instance-level explanations. Shapley values reveal which specific financial variables drove each individual firm's credit score, making explanations different for each entity rather than generic. One borrower may be flagged due to high leverage ratios, while another's risk score reflects industry-specific distress signals—and XAI makes those distinctions visible.
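The review and decision-logging steps of this pipeline can be sketched as a small audit record. This is a minimal illustration using only the Python standard library; the `DecisionRecord` fields, the `sign_off` helper, and the identifiers and numbers below are hypothetical, not a prescribed regulatory schema.

```python
import hashlib
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """One audit-trail entry: prediction, explanation, and reviewer sign-off."""
    entity_id: str
    model_version: str
    risk_score: float
    attributions: dict          # feature name -> contribution to the score
    reviewer: str = ""          # filled in at the human-review step
    approved: bool = False
    logged_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def fingerprint(self) -> str:
        """SHA-256 over the canonical JSON form, so later edits are detectable."""
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def sign_off(record: DecisionRecord, reviewer: str, approved: bool) -> DecisionRecord:
    """Human-in-the-loop step: record who reviewed the explanation and the outcome."""
    record.reviewer = reviewer
    record.approved = approved
    return record


record = DecisionRecord(
    entity_id="borrower-001",            # hypothetical identifier
    model_version="credit-xgb-v1",       # hypothetical version label
    risk_score=0.82,
    attributions={"leverage_ratio": 0.31, "cash_flow_trend": 0.12, "sector_stress": 0.05},
)
before = record.fingerprint()
sign_off(record, reviewer="risk.officer", approved=True)
after = record.fingerprint()             # differs from `before`: the sign-off changed the record
```

Storing the fingerprint alongside each record is one simple way to make later tampering visible. A production system would more likely rely on an append-only store.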

What Is Explainable AI and How Does It Work in Risk Management

Explainable AI (XAI) is a collection of methods and frameworks that make AI/ML model predictions interpretable. Models can be designed for transparency from the start, or explanation techniques can be layered onto existing black-box outputs after the fact. In financial risk management, XAI converts opaque model outputs into auditable decision logic that analysts, compliance officers, and regulators can actually follow.

Three dimensions of explainability matter in finance:

- Global explainability – Reveals which variables drive a model's behavior across all predictions. For example, a credit risk model might show that debt-to-income ratio consistently carries the most weight in default scoring.

- Local explainability – Isolates the factors behind a single prediction, such as why one company was flagged as a default risk due to deteriorating cash flow and sector headwinds.

- Regulatory explainability – Documents model logic in audit-ready formats, satisfying requirements under frameworks like SR 11-7 and the EU AI Act.
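The relationship between the global and local views can be illustrated with a few lines of Python. The sketch below assumes per-prediction attributions are already available (for example from SHAP). The feature names and numbers are invented for illustration, and averaging absolute attributions is one common way to build a global ranking, not the only one.

```python
from statistics import mean

# Hypothetical per-prediction attributions: one dict per borrower, mapping
# feature name to its contribution to that borrower's default score.
local_attributions = [
    {"debt_to_income": 0.30, "cash_flow_trend": -0.05, "sector_stress": 0.02},
    {"debt_to_income": 0.10, "cash_flow_trend": 0.25, "sector_stress": 0.15},
    {"debt_to_income": -0.20, "cash_flow_trend": 0.05, "sector_stress": -0.01},
]


def global_importance(rows):
    """Global view: mean absolute attribution per feature across all predictions."""
    features = rows[0].keys()
    return {f: mean(abs(r[f]) for r in rows) for f in features}


def top_local_driver(row):
    """Local view: the single feature with the largest absolute contribution."""
    return max(row, key=lambda f: abs(row[f]))


importance = global_importance(local_attributions)
ranking = sorted(importance, key=importance.get, reverse=True)
drivers = [top_local_driver(r) for r in local_attributions]
```

Here debt-to-income ranks first globally, yet the second borrower's score is driven mainly by cash-flow trend, which is exactly the distinction between the two views.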

Post-hoc, model-agnostic explanation is particularly valuable for financial institutions. Methods like SHAP and LIME apply to any existing model's output without retraining, so financial teams can adopt XAI without rebuilding existing models. A hedge fund running an ensemble model for portfolio risk can layer SHAP explanations on top without disrupting production workflows.

Core XAI Techniques Used in Financial Risk Management

SHAP (SHapley Additive exPlanations)

SHAP is the most widely adopted XAI method in finance. It decomposes each model prediction into the additive contribution of individual input features, drawing on game-theory-derived Shapley values. SHAP satisfies three critical mathematical properties — local accuracy, missingness, and consistency — which makes it a theoretically sound choice for regulatory use:

- Local accuracy: The explanation matches the model's output for each individual prediction

- Missingness: Features absent from a prediction contribute zero to the explanation

- Consistency: If a model changes so that a feature matters more, its SHAP value never decreases

In hedge fund and FinTech risk contexts, SHAP identifies which balance-sheet ratios, market indicators, or behavioral data points drove a specific credit risk score, drawdown prediction, or fraud flag.
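To make the additive decomposition concrete, the following sketch computes exact Shapley values for a toy three-feature risk score by enumerating every feature coalition. It is an illustration under stated assumptions rather than the SHAP library's implementation: the `model` function, the feature names, and the all-zero baseline borrower are hypothetical, and "absent" features are simulated by substituting baseline values.

```python
from itertools import combinations
from math import factorial

FEATURES = ["leverage", "cash_flow", "sector_stress"]


def model(x):
    """Toy risk score with an interaction term; stands in for a black-box model."""
    return (0.2 + 0.5 * x["leverage"] - 0.3 * x["cash_flow"]
            + 0.4 * x["leverage"] * x["sector_stress"])


def value(subset, x, baseline):
    """Model output when features in `subset` take x's values, the rest the baseline's."""
    mixed = {f: (x[f] if f in subset else baseline[f]) for f in FEATURES}
    return model(mixed)


def shapley_values(x, baseline):
    """Exact Shapley values by enumerating coalitions (feasible only for few features)."""
    n = len(FEATURES)
    phi = {}
    for f in FEATURES:
        others = [g for g in FEATURES if g != f]
        total = 0.0
        for k in range(n):
            for coalition in combinations(others, k):
                weight = factorial(k) * factorial(n - k - 1) / factorial(n)
                with_f = value(set(coalition) | {f}, x, baseline)
                without_f = value(set(coalition), x, baseline)
                total += weight * (with_f - without_f)
        phi[f] = total
    return phi


baseline = {"leverage": 0.0, "cash_flow": 0.0, "sector_stress": 0.0}  # reference borrower
borrower = {"leverage": 0.8, "cash_flow": 0.5, "sector_stress": 1.0}
phi = shapley_values(borrower, baseline)

# Local accuracy: baseline output plus all contributions reproduces the prediction.
reconstructed = model(baseline) + sum(phi.values())
```

In this toy case the interaction between leverage and sector stress is split evenly between the two features, and `reconstructed` equals `model(borrower)` up to floating-point error. Exhaustive enumeration grows exponentially with the number of features, which is why practical tools rely on approximations or tree-specific algorithms.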

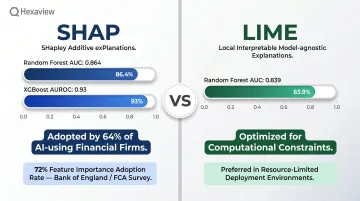

A benchmark study of 15,045 European SMEs compared logistic regression (AUROC 0.81) to XGBoost (AUROC 0.93), with SHAP applied to explain the stronger model's predictions. Institutions retained the accuracy advantage of complex ML without sacrificing transparency.

Adoption data underscores SHAP's prominence. A Bank of England and FCA survey found that 64% of financial firms using AI employ SHAP, alongside the 72% that use feature importance methods.

Yet the same survey found that 46% of firms report only partial understanding of their AI technologies, often due to third-party reliance. That gap — between generating explanations and actually operationalizing them — remains one of the sector's most persistent challenges.

LIME and Other Model-Agnostic Methods

LIME (Local Interpretable Model-agnostic Explanations) offers an alternative approach: it builds a simpler, locally faithful approximation of the black-box model around a specific prediction. Originally introduced in 2016, LIME is useful when computational constraints make full SHAP computation impractical on large financial datasets.
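The core idea behind LIME, fitting a proximity-weighted linear model around one prediction, can be sketched without any external library. The code below is a simplified illustration and not the `lime` package's API: the `black_box` function, the Gaussian sampling scheme, and the kernel width are all assumptions chosen for the example, and the real method adds steps such as interpretable feature representations and feature selection.

```python
import math
import random


def black_box(x):
    """Stand-in for an opaque model: a smooth non-linear default probability."""
    z = 3.0 * x[0] - 2.0 * x[1] + 1.5 * x[0] * x[1]
    return 1.0 / (1.0 + math.exp(-z))


def solve(a, b):
    """Solve a small linear system a @ w = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    w = [0.0] * n
    for r in reversed(range(n)):
        w[r] = (m[r][n] - sum(m[r][c] * w[c] for c in range(r + 1, n))) / m[r][r]
    return w


def local_surrogate(f, x0, n_samples=2000, scale=0.1, kernel_width=0.2, seed=0):
    """Fit a proximity-weighted linear model to f around x0 via the normal equations."""
    rng = random.Random(seed)
    d = len(x0)
    xtwx = [[0.0] * (d + 1) for _ in range(d + 1)]
    xtwy = [0.0] * (d + 1)
    for _ in range(n_samples):
        delta = [rng.gauss(0.0, scale) for _ in range(d)]   # perturbation around x0
        sample = [x0[j] + delta[j] for j in range(d)]
        dist2 = sum(v * v for v in delta)
        weight = math.exp(-dist2 / kernel_width ** 2)       # closer samples count more
        row = [1.0] + delta                                 # intercept + centred features
        y = f(sample)
        for i in range(d + 1):
            xtwy[i] += weight * row[i] * y
            for j in range(d + 1):
                xtwx[i][j] += weight * row[i] * row[j]
    intercept, *coefs = solve(xtwx, xtwy)
    return intercept, coefs


x0 = [0.2, 0.4]
intercept, coefs = local_surrogate(black_box, x0)
```

The fitted coefficients approximate how sensitive the black-box output is to each feature near `x0`, and the intercept approximates the prediction at `x0` itself. The explanation is only as faithful as the linear approximation is within the sampled neighbourhood.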

A 2021 comparison study in credit risk contexts reported higher discriminative power for SHAP versus LIME — Random Forest models built on XAI weights achieved AUC 0.864 (SHAP) versus 0.839 (LIME). While both methods are model-agnostic, SHAP's theoretical grounding and stronger performance on tabular financial data have made it the preferred choice for high-stakes applications.

Intrinsically Interpretable Models as a Complementary Approach

Post-hoc methods like SHAP and LIME work well when accuracy is the priority. But not every financial risk application requires complex ML in the first place. Intrinsically interpretable models — logistic regression, decision trees, Generalized Additive Models (GAMs) — are explainable by design, with decision logic that is transparent from the start.

The trade-off: these models sacrifice predictive power in non-linear, high-dimensional financial datasets. A FinTech lender might choose GAMs for consumer credit scoring when regulatory scrutiny is highest and model transparency is non-negotiable. A hedge fund running a high-frequency trading strategy, by contrast, will likely prefer XGBoost with SHAP explanations to maximize alpha while maintaining auditability.
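For contrast, here is what "explainable by design" looks like for a logistic regression scorecard: each feature's contribution to the log-odds is simply its coefficient times its value, with no separate explanation layer. The coefficients, feature names, and applicant values are hypothetical.

```python
import math

# Hypothetical coefficients for a three-feature consumer credit model.
INTERCEPT = -2.0
COEFFICIENTS = {"debt_to_income": 3.0, "missed_payments": 0.8, "years_of_history": -0.1}


def log_odds_contributions(applicant):
    """Each feature's contribution to the log-odds is coefficient * value."""
    return {f: COEFFICIENTS[f] * applicant[f] for f in COEFFICIENTS}


def default_probability(applicant):
    """Logistic link: convert the summed log-odds into a probability of default."""
    z = INTERCEPT + sum(log_odds_contributions(applicant).values())
    return 1.0 / (1.0 + math.exp(-z))


applicant = {"debt_to_income": 0.45, "missed_payments": 2, "years_of_history": 6}
contributions = log_odds_contributions(applicant)
probability = default_probability(applicant)
```

The explanation is exact because the model is additive in log-odds. The same additivity is what limits such models on strongly non-linear data.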

XAI in Action: Risk Use Cases for Hedge Funds and FinTech

Credit Risk and Default Prediction

XAI transforms credit risk scoring from a black-box probability into an auditable decision. QuickBooks Capital deployed an XGBoost model with SHAP explanations in July 2019, achieving a K-S statistic of 0.437 versus 0.410 for a traditional scorecard, a relative improvement of roughly 7%. The model was expected to cut loan default rates by 20%, while SHAP-generated Adverse Action codes ensured regulatory compliance.
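For readers unfamiliar with the K-S statistic, it measures the largest gap between the cumulative score distributions of defaulters and non-defaulters, so a higher value means better separation. The sketch below computes it on invented toy data and reproduces the relative-improvement arithmetic for the two figures quoted above. The scores and labels are illustrative and unrelated to the QuickBooks Capital data.

```python
def ks_statistic(scores, labels):
    """Max gap between the cumulative score distributions of defaulters and non-defaulters."""
    bad = [s for s, y in zip(scores, labels) if y == 1]
    good = [s for s, y in zip(scores, labels) if y == 0]
    best = 0.0
    for t in sorted(set(scores)):
        cdf_bad = sum(s <= t for s in bad) / len(bad)
        cdf_good = sum(s <= t for s in good) / len(good)
        best = max(best, abs(cdf_bad - cdf_good))
    return best


# Toy data: higher score = higher predicted default risk; label 1 = defaulted.
scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
labels = [0, 0, 0, 1, 0, 1, 1, 1]
ks = ks_statistic(scores, labels)

# Relative improvement for the quoted figures: (0.437 - 0.410) / 0.410, about 6.6%.
relative_lift = (0.437 - 0.410) / 0.410
```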

The Bussmann et al. study of 15,045 SMEs demonstrated how SHAP enables grouping borrowers by risk drivers and providing individualized explanations supporting auditability. A borrower's default risk might stem from sector-specific stress or leverage ratios. SHAP surfaces those distinctions in a form both compliance teams and regulators can act on.
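A much-simplified version of this idea is sketched below: group borrowers by the feature that raises their risk score the most, then list the top risk-increasing features as candidate reason codes. This stands in for, and is not, the clustering approach used in the study. The firms, features, and attribution values are hypothetical.

```python
from collections import defaultdict

# Hypothetical attributions per borrower (positive = pushes risk up).
attributions = {
    "firm_a": {"leverage_ratio": 0.30, "sector_stress": 0.05, "liquidity": -0.02},
    "firm_b": {"leverage_ratio": 0.04, "sector_stress": 0.22, "liquidity": 0.01},
    "firm_c": {"leverage_ratio": 0.25, "sector_stress": 0.10, "liquidity": 0.12},
}


def dominant_driver(contribs):
    """Feature that raises this borrower's risk score the most."""
    return max(contribs, key=contribs.get)


def group_by_driver(all_contribs):
    """Group borrowers by the feature that contributes most to their risk."""
    groups = defaultdict(list)
    for borrower, contribs in all_contribs.items():
        groups[dominant_driver(contribs)].append(borrower)
    return dict(groups)


def top_reasons(contribs, k=2):
    """Top-k risk-increasing features, a starting point for reason codes."""
    positive = [f for f in contribs if contribs[f] > 0]
    return sorted(positive, key=contribs.get, reverse=True)[:k]


groups = group_by_driver(attributions)
reasons_c = top_reasons(attributions["firm_c"])
```

Mapping the resulting feature names to the wording used in customer-facing notices would remain a compliance decision, not something the attribution step can settle on its own.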

Market Risk, Portfolio Risk, and Fraud Detection

That same auditability extends across the broader risk stack. XAI is now embedded in three high-stakes use cases where opaque model outputs create regulatory and operational exposure:

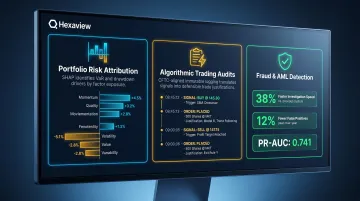

Portfolio risk attribution: SHAP identifies which factor exposures or positions are driving VaR or drawdown risk at a given moment. CFA Institute research documents feature attribution and visual explainability for investment decision support—though real-time attribution at scale remains an open operational challenge.

Algorithmic trading audits: The CFTC TAC report recommends immutable logging and documentation standards for AI-driven trading. XAI layers translate opaque signals into defensible trade justifications for risk committees and regulators.

Fraud and AML detection: A 2025 IEEE study using SHAP and LIME with XGBoost reported a 38% increase in investigation speed and 12% fewer false-positive escalations. Separately, AML research applying a reproducible SHAP framework achieved PR-AUC of 0.741 with Random Forest versus 0.689 for MLP—reducing alert fatigue while supporting compliance reporting.

Regulatory Compliance and XAI Governance in Financial Services

Explainability obligations for financial institutions stem from multiple regulatory frameworks:

- GDPR Article 22 – Grants individuals the right to contest automated decisions that produce legal or similarly significant effects, including safeguards such as the right to human intervention. The European Data Protection Board endorses related guidance on explainability expectations.

- EU AI Act – Entry into force August 1, 2024; general application date August 2, 2026. High-risk AI systems—including those used for creditworthiness assessment—face transparency and documentation requirements that XAI directly supports.

- FCA guidance – FS23/6 outlines how existing regulatory frameworks apply to AI, focusing on safe and responsible adoption, transparency, and supervisory considerations for interpretability.

- US model risk management – OCC Bulletin 2026-13, issued jointly with the Federal Reserve and FDIC, introduces a principles-based, risk-tailored approach to model risk management. While it does not set prescriptive XAI standards, it raises the bar for model documentation, validation, and governance.

XAI provides the documentation and audit trail regulators increasingly expect: feature importance logs, decision justification records, and model monitoring outputs that demonstrate documented human oversight and accountability. BIS FSI Occasional Paper No. 24 confirms that explainability ranks among the top issues financial institutions raise when engaging regulators—making this documentation layer a practical necessity, not just best practice.

A critical distinction: Technical explainability (what the model did) is not the same as stakeholder explainability (why this decision was made in terms a compliance officer, auditor, or impacted party can understand). Firms must invest in both layers: technical outputs that engineers can validate, and business-friendly narratives that satisfy regulators and customers alike.

Building an XAI-Ready Risk Infrastructure

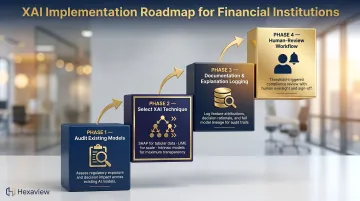

Moving from ad-hoc XAI experimentation to production-grade explainability requires four steps:

- Audit existing models – Identify which require explainability overlays based on regulatory exposure, decision impact, and stakeholder scrutiny

- Select the appropriate XAI technique – Match method to model type, data volume, and regulatory context (SHAP for tabular data, LIME for computational constraints, intrinsic interpretability for maximum transparency)

- Establish model documentation and explanation logging – Create structured records of feature attributions, decision rationale, and model lineage

- Build a human-review workflow – Trigger compliance or risk officer review when explanation outputs cross predefined thresholds or flag anomalies
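The final step, routing predictions to a reviewer when explanation outputs cross predefined thresholds, might look like the sketch below. The `ReviewPolicy` thresholds, the watched-feature list, and the example attributions are assumptions made for illustration. Real values would come from a firm's own risk appetite and fairness policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewPolicy:
    """Hypothetical thresholds for escalating a prediction to a human reviewer."""
    score_threshold: float = 0.7              # escalate high risk scores
    dominance_threshold: float = 0.6          # escalate when one feature explains most of the score
    watched_features: tuple = ("zip_code",)   # possible proxies that should not be the top driver


def needs_review(score, attributions, policy=ReviewPolicy()):
    """Return the list of reasons a prediction should go to a human reviewer."""
    reasons = []
    if score >= policy.score_threshold:
        reasons.append("high_score")
    total = sum(abs(v) for v in attributions.values())
    if total > 0:
        top_feature = max(attributions, key=lambda f: abs(attributions[f]))
        share = abs(attributions[top_feature]) / total
        if share >= policy.dominance_threshold:
            reasons.append("single_feature_dominates")
        if top_feature in policy.watched_features:
            reasons.append("watched_feature_is_top_driver")
    return reasons


routine = needs_review(0.35, {"leverage_ratio": 0.10, "cash_flow": 0.08, "zip_code": 0.02})
flagged = needs_review(0.82, {"leverage_ratio": 0.05, "cash_flow": 0.03, "zip_code": 0.40})
```

An empty list means the prediction proceeds without escalation. A non-empty list gives the reviewer an immediate statement of why the case was routed to them.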

Common Implementation Challenges

Computational cost of SHAP on large datasets – For tree ensembles with T trees, at most L leaves per tree, and maximum depth D, TreeSHAP's algorithmic complexity is O(T L D²) per explained prediction. A benchmark on 581,012 rows and 54 features took approximately 4.6 hours on a 16 vCPU VM. KernelSHAP and baseline TreeSHAP became intractable beyond ~100k rows and 15 features under 16 GB memory. GPU-accelerated libraries such as RAPIDS cuML offer faster alternatives, but require deliberate infrastructure planning before production deployment.
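A back-of-the-envelope calculation shows how the O(T L D²) bound scales. The helper below simply multiplies the terms out for a hypothetical ensemble. It ignores constant factors and hardware, so it is useful for comparing configurations, not for predicting wall-clock time.

```python
def treeshap_operations(n_rows, n_trees, n_leaves, depth):
    """Rough operation count implied by O(T * L * D^2) per explained row."""
    return n_rows * n_trees * n_leaves * depth ** 2


# Hypothetical ensemble: 500 trees, 64 leaves each, depth 6, 100k rows to explain.
base = treeshap_operations(n_rows=100_000, n_trees=500, n_leaves=64, depth=6)

# Same ensemble with depth 12, holding the leaf count fixed for illustration.
deeper = treeshap_operations(n_rows=100_000, n_trees=500, n_leaves=64, depth=12)

depth_ratio = deeper / base   # doubling depth alone quadruples the D^2 term
```

In practice deeper trees usually have more leaves as well, so the real cost of added depth tends to grow faster than this fixed-leaf comparison suggests.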

Risk of "explanation theater" – BIS FSI Paper No. 24 warns of risks including instability or unfaithfulness of explanations, potential to mislead, and human cognitive limits. XAI outputs generated for compliance box-checking but not operationally acted upon create false assurance without real risk mitigation.

Need for interdisciplinary teams – Effective XAI implementation requires collaboration among data scientists, risk officers, and legal or compliance specialists. As the survey cited earlier found, 46% of firms report only partial understanding of their AI technologies, often due to third-party reliance, which highlights the operational gap between generating explanations and interpreting them correctly.

Closing that gap requires teams where technical and domain expertise coexist from the start. Firms without in-house AI engineering capacity benefit from partners who embed both — Hexaview Technologies, for instance, pairs data scientists with capital markets specialists across its AI Engineering practice, backed by SOC 2 Type 2 certification and over a decade of FinTech delivery experience.

Frequently Asked Questions

What is explainable AI in the context of hedge fund risk management?

XAI refers to AI and ML models or post-hoc techniques that make automated risk predictions interpretable to humans. Quant teams, risk officers, and regulators can understand the reasoning behind a model's risk conclusion, not just the output it produces.

How does explainable AI differ from traditional black-box AI models in FinTech?

Black-box models optimize for predictive accuracy without exposing their internal logic, while XAI adds an interpretive layer—either through transparent model design or post-hoc methods like SHAP and LIME—that makes decision drivers visible and auditable.

What are the most effective XAI techniques for financial risk management?

SHAP is the most widely validated technique due to its theoretical properties and compatibility with any model type; LIME is useful for localized explanations at lower computational cost; intrinsically interpretable models like GAMs are preferred when regulatory scrutiny is highest.

Does adopting XAI require replacing existing AI models?

Most XAI techniques are post-hoc and model-agnostic, meaning they can be applied on top of existing deployed models without retraining. XAI adoption is typically additive — no full model rebuild required.

How does explainable AI help hedge funds meet regulatory compliance requirements?

XAI generates the documentation, audit trails, and decision justification records that regulators expect under frameworks like GDPR, the EU AI Act, and model risk management guidelines. This reduces compliance exposure without sacrificing predictive model performance.

Can explainable AI improve both accuracy and transparency simultaneously?

Yes. Applying post-hoc methods like SHAP to high-performing models such as XGBoost lets firms retain predictive accuracy while generating auditable explanations. The performance-vs-transparency trade-off is largely a constraint of older, intrinsically interpretable models — not a limitation of modern XAI practice.