Introduction

Alpha — returns above what the broader market delivers — has always been the defining challenge for hedge funds and asset managers. Today, that challenge is more acute than ever. As markets grow more efficient and data-rich, the window to capture alpha through traditional methods is narrowing fast.

Factor strategies like value and momentum, once the foundation of quantitative funds, have grown crowded and increasingly predictable — compressing returns for managers who still rely on them exclusively.

The industry has responded with a dramatic shift toward artificial intelligence and machine learning. According to the Alternative Investment Management Association (AIMA), 95% of fund managers now use Generative AI in their work — up from 86% just one year prior. More telling: 58% expect increased GenAI use in investment processes over the next year, signaling that AI/ML has moved from experimental curiosity to operational necessity.

This article breaks down not just which AI/ML tools are being deployed, but how they actually work to generate alpha: the specific stages, techniques, and practical tradeoffs involved. Whether you're a CIO evaluating build-versus-buy decisions, a PM curious about implementation realities, or an allocator assessing manager capabilities, it offers a concrete look at what's driving results in practice.

TL;DR

- Alpha generation with AI/ML spans a full pipeline — from data ingestion and feature engineering to signal generation and execution

- ML models can detect non-linear patterns across thousands of variables at once, which linear and rule-based quant models tend to miss

- Core techniques include LSTMs, NLP/Transformers, Reinforcement Learning, and Generative AI for backtesting

- Primary failure modes: overfitting, signal decay from strategy crowding, black-box interpretability gaps, and data quality failures

- Production-grade AI/ML demands advanced ML engineering and deep capital markets expertise — neither alone is sufficient

What Is Alpha — and Why Traditional Methods Are Falling Short

Alpha is the return a fund earns above its benchmark or risk-adjusted expectation. It represents the value added by active management decisions rather than broad market exposure (beta). For decades, skilled managers extracted alpha through superior research, faster execution, or proprietary data access.
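
To make the definition concrete, here is a minimal sketch that separates alpha from beta with a one-factor least-squares fit, which is one common way to express a "risk-adjusted expectation". The function name `estimate_alpha_beta`, the sample returns, and the zero risk-free rate are illustrative assumptions, not figures from any fund.

```python
from statistics import mean

def estimate_alpha_beta(fund_returns, benchmark_returns, risk_free=0.0):
    """Fit fund excess returns on benchmark excess returns (one-factor OLS).

    Returns (alpha, beta) per period. Inputs are decimals, e.g. 0.01 for 1%.
    """
    if len(fund_returns) != len(benchmark_returns) or len(fund_returns) < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    y = [r - risk_free for r in fund_returns]
    x = [r - risk_free for r in benchmark_returns]
    x_bar, y_bar = mean(x), mean(y)
    var_x = sum((xi - x_bar) ** 2 for xi in x)
    if var_x == 0:
        raise ValueError("benchmark returns have zero variance")
    cov_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    beta = cov_xy / var_x           # market exposure
    alpha = y_bar - beta * x_bar    # return not explained by that exposure
    return alpha, beta

# Hypothetical data: a fund with half the market's exposure plus 20 bps per period.
benchmark = [0.02, -0.01, 0.03, 0.00, 0.01]
fund = [0.5 * b + 0.002 for b in benchmark]
alpha, beta = estimate_alpha_beta(fund, benchmark)
print(f"alpha per period: {alpha:.4f}, beta: {beta:.2f}")
```

Real attribution usually uses multi-factor models and longer histories; the single-factor version only illustrates the split between market exposure and manager skill.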

Today, alpha is becoming structurally harder to capture. As more capital flows into quantitative strategies and factor-based investing, classic signals like value and momentum have become crowded, reducing their predictive edge. AQR Capital Management founder Cliff Asness acknowledged in 2015 that as factor strategies become widely adopted, investors should expect "attractive but lower-than-historical rewards" going forward.

Recent events confirm this trajectory. MSCI research on summer 2025's quant fund wobble documented that unusual factor correlations and crowded trades drove losses for long-short quantitative strategies, demonstrating ongoing compression in traditional factor returns.

Three structural forces are squeezing traditional alpha generation:

- Signal crowding: Once a factor is published and adopted widely, its edge erodes

- Faster decay: Market participants now reprice patterns in weeks, not years

- Data parity: Proprietary advantages that once belonged to a few firms are now broadly accessible

AI and ML don't eliminate these pressures. They give managers tools to find patterns that are more complex, shorter-lived, and harder to replicate — the kind that fixed-rule models and human analysts cannot surface consistently. The real challenge is implementation: building systems that stay ahead of crowding rather than accelerating it.

How AI & ML Generate Alpha: The Core Process

AI/ML alpha generation is not a single action but a continuous pipeline. Each stage transforms raw information into progressively more refined investment signals, and the quality of each stage determines the reliability of the output.

Data Ingestion and Feature Engineering

The process begins with ingesting multiple data streams simultaneously. Structured data — price, volume, order book, financial statements — is combined with unstructured data including earnings call transcripts, news headlines, satellite imagery, credit card transactions, and web traffic data. The choice of data sources is itself a source of competitive advantage; proprietary or differentiated data increasingly separates winning strategies from crowded ones.

Feature engineering transforms raw data into model-ready inputs. This includes:

- Normalizing price returns and constructing cross-asset spreads

- Tagging sentiment scores from unstructured text sources

- Creating lag features that capture temporal signal patterns

- Validating data freshness to prevent stale feeds from corrupting downstream output

This stage requires both quantitative and domain expertise. Poor data quality at this stage corrupts every model that depends on it.
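
The steps listed above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions: the helper names (`simple_returns`, `zscore`, `lag_features`, `is_fresh`) and the 15-minute staleness threshold are hypothetical, and a production pipeline would typically use a dataframe library and point-in-time data.

```python
from datetime import datetime, timedelta, timezone
from statistics import mean, pstdev

def simple_returns(prices):
    """Period-over-period returns from a price series."""
    return [p1 / p0 - 1.0 for p0, p1 in zip(prices, prices[1:])]

def zscore(values):
    """Normalize values to zero mean and unit (population) standard deviation."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]

def lag_features(values, lags=(1, 2)):
    """One row per time t >= max(lags); each row holds values[t - k] for k in lags."""
    max_lag = max(lags)
    return [[values[t - k] for k in lags] for t in range(max_lag, len(values))]

def is_fresh(last_update, now, max_age=timedelta(minutes=15)):
    """Reject feeds whose last update is older than max_age (assumed threshold)."""
    return now - last_update <= max_age

prices = [100.0, 101.0, 100.5, 102.0, 101.2]  # hypothetical closes
rets = simple_returns(prices)
features = lag_features(zscore(rets), lags=(1, 2))
now = datetime.now(timezone.utc)
print(features, is_fresh(now - timedelta(minutes=5), now))
```

Note that normalizing over the full sample, as done here for brevity, leaks future information into past rows; a live system would normalize with trailing windows only.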

The alternative data market is projected to reach $135.72 billion by 2030, growing at a 63.4% CAGR, with hedge fund operators representing 68% of end-use demand. This explosion reflects the industry's recognition that traditional market data alone no longer delivers differentiated signals.

Model Training and Signal Generation

ML models are trained to identify statistical relationships between features and forward returns. They operate across thousands of variables at once and can detect non-linear dependencies that linear regression and rule-based models would miss. Unlike traditional quant models that rely on fixed factor definitions, ML systems can be built to monitor their own predictive accuracy and update signal weights as market regimes shift. When a factor stops working, as momentum strategies did during the March 2020 volatility spike, such systems can retrain and recalibrate on a schedule or trigger rather than waiting for manual intervention.
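
One simple way to monitor predictive accuracy is to track the rank correlation between a signal and subsequent returns (often called the information coefficient) and flag a retrain when it fades. The sketch below assumes that approach; the function names, the three-period window, and the 0.02 floor are illustrative choices, not a standard.

```python
from statistics import mean

def ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg
        i = j + 1
    return result

def pearson(x, y):
    mx, my = mean(x), mean(y)
    num = sum((a - mx) * (b - my) for a, b in zip(x, y))
    den = (sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y)) ** 0.5
    return num / den if den else 0.0

def information_coefficient(scores, forward_returns):
    """Spearman rank correlation between model scores and realized returns."""
    return pearson(ranks(scores), ranks(forward_returns))

def needs_retrain(ic_history, window=3, floor=0.02):
    """Flag a retrain when the average IC over the last `window` periods drops below `floor`."""
    recent = ic_history[-window:]
    return len(recent) == window and mean(recent) < floor

# Hypothetical per-period IC values showing a decaying signal.
print(needs_retrain([0.05, 0.04, 0.01, 0.00, -0.01]))
```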

Portfolio Construction and Execution Optimization

ML-derived signals are translated into portfolio decisions through optimization engines that determine position sizing, rebalancing frequency, and exposure constraints. Outputs like predicted return scores or risk probabilities feed into these engines, which balance expected alpha against transaction costs and risk limits.
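
As a rough sketch of how predicted returns, costs, and limits interact, the function below sizes positions in proportion to alpha net of estimated trading cost and then caps each weight. It is a deliberately simplified stand-in for a real optimizer; `size_positions`, the tickers, and every number are hypothetical.

```python
def size_positions(expected_alpha_bps, cost_bps, max_weight=0.10, gross_limit=1.0):
    """Weight names by alpha net of cost, scaled to a gross limit, then capped.

    Capping happens after scaling, so realized gross exposure can end up
    below gross_limit; a real optimizer would redistribute the excess.
    """
    net_edge = {}
    for name, alpha in expected_alpha_bps.items():
        edge = max(abs(alpha) - cost_bps.get(name, 0.0), 0.0)  # drop trades that cost more than they earn
        net_edge[name] = edge if alpha >= 0 else -edge
    gross = sum(abs(e) for e in net_edge.values())
    if gross == 0:
        return {name: 0.0 for name in net_edge}
    weights = {}
    for name, edge in net_edge.items():
        raw = gross_limit * edge / gross
        weights[name] = max(-max_weight, min(max_weight, raw))
    return weights

alphas = {"AAA": 30.0, "BBB": 10.0, "CCC": -20.0}  # predicted return, bps
costs = {"AAA": 5.0, "BBB": 12.0, "CCC": 5.0}      # estimated cost to trade, bps
print(size_positions(alphas, costs, max_weight=0.5))
```

In this example the second name receives zero weight because its estimated cost exceeds its predicted alpha, which is the tradeoff the paragraph above describes.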

Execution-layer ML minimizes market impact and slippage. Algorithms assess:

- Liquidity conditions and order book depth

- Historical market impact patterns

- Optimal trade timing and routing

- Real-time spread dynamics

Even a few basis points of slippage can erase modest alpha gains. Execution optimization is where theoretical edge becomes realized return.
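
The arithmetic behind that claim is easy to check. The sketch below measures slippage against the decision price and then nets trading costs against gross alpha; the function names and all figures are hypothetical.

```python
def slippage_bps(decision_price, fill_price, side):
    """Cost versus the decision price in basis points; side is +1 for buy, -1 for sell."""
    return side * (fill_price - decision_price) / decision_price * 10_000

def net_alpha_bps(gross_alpha_bps, annual_turnover, cost_per_trade_bps):
    """Annual alpha left after costs; turnover is traded notional / portfolio value."""
    return gross_alpha_bps - annual_turnover * cost_per_trade_bps

# A buy decided at 100.00 and filled at 100.03 costs about 3 bps.
print(round(slippage_bps(100.00, 100.03, side=+1), 2))

# Hypothetical strategy: 150 bps gross alpha, portfolio turned over 10 times a year.
for cost in (3, 5, 8, 15):
    print(cost, "bps per trade ->", net_alpha_bps(150, 10, cost), "bps net")
```

At ten turns a year, every extra basis point of cost per trade removes ten basis points of annual alpha, so the gap between a 5 bps and a 15 bps execution cost is the gap between a viable strategy and none.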

Key AI/ML Techniques Hedge Funds and Asset Managers Deploy

LSTMs and Sequence Models for Time-Series Alpha

Long Short-Term Memory (LSTM) networks model price dynamics by detecting momentum patterns, regime shifts, and cross-asset dependencies across multiple lookback windows. Their edge over standard regression comes from one core capability: remembering relevant historical context while discarding noise — essential when recent patterns carry different predictive weight than distant history.

This makes LSTMs well-suited for high-frequency trading and regime detection, where the sequence and timing of price moves matter as much as the moves themselves.
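
The "remember context, discard noise" behavior comes from the cell's gates. Below is a minimal sketch of one forward step of a single-unit LSTM cell with scalar input and state, written without a deep learning framework so the gate arithmetic is visible. The weights are hand-picked placeholders, not trained values, and the parameter names are assumptions of this sketch.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def lstm_step(x, h_prev, c_prev, p):
    """One step of a scalar LSTM cell. p maps names like 'wf', 'uf', 'bf' to floats."""
    f = sigmoid(p["wf"] * x + p["uf"] * h_prev + p["bf"])    # forget gate: how much old memory to keep
    i = sigmoid(p["wi"] * x + p["ui"] * h_prev + p["bi"])    # input gate: how much new information to admit
    o = sigmoid(p["wo"] * x + p["uo"] * h_prev + p["bo"])    # output gate: how much memory to expose
    g = math.tanh(p["wg"] * x + p["ug"] * h_prev + p["bg"])  # candidate memory content
    c = f * c_prev + i * g                                   # updated cell state
    h = o * math.tanh(c)                                     # updated hidden state
    return h, c

names = ("wf", "uf", "bf", "wi", "ui", "bi", "wo", "uo", "bo", "wg", "ug", "bg")
params = {k: 0.0 for k in names}
params.update({"bf": 2.0, "wi": 50.0, "wg": 50.0})  # illustrative, untrained weights

h, c = 0.0, 0.0
for r in [0.01, -0.02, 0.015, 0.0, 0.03]:  # hypothetical daily returns
    h, c = lstm_step(r, h, c, params)
print(round(h, 4), round(c, 4))
```

In practice the weights are learned by backpropagation through time in a framework, and the cell has vector-valued state; the gating logic is the same.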

NLP and Transformer Models for Alternative Data Signals

Large language models and attention-based architectures process earnings call transcripts, regulatory filings, news feeds, and social media to extract sentiment signals and detect changes in management tone before they show up in price.

Academic research published on SSRN demonstrates that NLP-based sentiment analysis applied to earnings call transcripts generates alpha not explained by traditional risk and return factors. J.P. Morgan operates a proprietary AI quant model that tracks corporate sentiment across dimensions including Federal Reserve policy, taxes, and buybacks — analyzing earnings call language to generate actionable investment signals.

What gives transformer models their edge here is context length: they process entire documents rather than isolated sentences, catching subtle tone shifts that surface across paragraphs — the kind of signal that gets lost when analysts skim hundreds of transcripts under deadline. Those sentiment signals, in turn, feed directly into portfolio allocation decisions, which is where reinforcement learning takes over.
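
The mechanism that lets a transformer weigh every part of a document at once is attention. The sketch below implements scaled dot-product attention for a single query in plain Python; the tiny two-dimensional vectors are made-up stand-ins for learned token embeddings.

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for one query over all positions."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)  # one weight per position, summing to 1
    dim = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return output, weights

# Hypothetical embeddings for three sentences of a transcript.
keys = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]
values = [[0.2], [-0.5], [0.4]]  # e.g. a per-sentence tone score
output, weights = attention([1.0, 0.0], keys, values)
print([round(w, 3) for w in weights], [round(o, 3) for o in output])
```

Because every position contributes to the output in proportion to its relevance to the query, a cue in one paragraph can influence how a statement elsewhere in the document is read.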

Reinforcement Learning for Dynamic Portfolio Optimization

Reinforcement Learning (RL) agents learn optimal allocation and rebalancing policies through simulated trading environments, receiving reward signals based on risk-adjusted returns. They adapt strategies to different market regimes without requiring explicit rule-writing.

RL's core advantage is learning complex, multi-step decision processes — for example, holding a position through short-term losses when the expected long-term payoff justifies the drawdown. Traditional optimization assumes static relationships; RL continuously updates its policy as conditions shift.
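
A tabular Q-learning update is the simplest way to see how an agent's policy shifts with experience. The sketch below assumes a toy setup with two market regimes and two allocation actions; the state and action names, the reward definition, and the learning parameters are all hypothetical, and production systems typically use function approximation rather than a table.

```python
def risk_adjusted_reward(period_return, volatility, risk_penalty=0.5):
    """Reward that credits return and charges for risk taken (assumed form)."""
    return period_return - risk_penalty * volatility

def q_update(q_table, state, action, reward, next_state, alpha=0.1, gamma=0.9):
    """Standard Q-learning step: move Q(s, a) toward reward + discounted best next value."""
    best_next = max(q_table[next_state].values())
    td_target = reward + gamma * best_next
    q_table[state][action] += alpha * (td_target - q_table[state][action])
    return q_table[state][action]

states = ("calm", "stressed")
actions = ("risk_on", "risk_off")
q = {s: {a: 0.0 for a in actions} for s in states}

# Hypothetical experience: (state, action, return, volatility, next_state).
experience = [
    ("calm", "risk_on", 0.020, 0.010, "calm"),
    ("calm", "risk_on", -0.030, 0.040, "stressed"),
    ("stressed", "risk_off", 0.001, 0.002, "stressed"),
    ("stressed", "risk_on", -0.050, 0.060, "calm"),
]
for state, action, ret, vol, nxt in experience:
    q_update(q, state, action, risk_adjusted_reward(ret, vol), nxt)

policy = {s: max(q[s], key=q[s].get) for s in states}
print(policy)
```

The discount factor `gamma` is what allows the agent to accept a short-term loss when later states are valuable enough, which matches the multi-step tradeoff described above.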

Generative AI for Research Acceleration and Backtesting

Generative Adversarial Networks (GANs) create synthetic financial time-series data to stress-test strategies under hypothetical but plausible market conditions. This lets managers evaluate strategy robustness across scenarios that haven't occurred in historical data — directly addressing backtest overfitting, one of the primary causes of live trading failures.
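
Training a GAN is beyond a short example, so the sketch below swaps in a much simpler technique, a block bootstrap, purely to show the workflow of evaluating a strategy across many synthetic paths instead of one historical path. It is not a GAN and cannot create regimes absent from the input; the function names and return series are hypothetical.

```python
import random

def block_bootstrap(returns, n_paths, block_size, seed=0):
    """Build synthetic paths by stitching together random contiguous blocks of history."""
    n = len(returns)
    if not 1 <= block_size <= n:
        raise ValueError("block_size must be between 1 and len(returns)")
    rng = random.Random(seed)  # seeded for reproducibility
    paths = []
    for _ in range(n_paths):
        path = []
        while len(path) < n:
            start = rng.randrange(0, n - block_size + 1)
            path.extend(returns[start:start + block_size])
        paths.append(path[:n])
    return paths

def max_drawdown(returns):
    """Largest peak-to-trough loss of the compounded equity curve, as a fraction."""
    peak, equity, worst = 1.0, 1.0, 0.0
    for r in returns:
        equity *= 1.0 + r
        peak = max(peak, equity)
        worst = max(worst, 1.0 - equity / peak)
    return worst

history = [0.01, -0.02, 0.03, 0.00, -0.01, 0.02, -0.04, 0.01]  # hypothetical strategy returns
paths = block_bootstrap(history, n_paths=200, block_size=3, seed=42)
drawdowns = sorted(max_drawdown(p) for p in paths)
print("historical:", round(max_drawdown(history), 4))
print("95th percentile across synthetic paths:", round(drawdowns[int(0.95 * len(drawdowns)) - 1], 4))
```

A strategy whose results look acceptable only on the single realized history, and poor across most resampled or generated paths, is a candidate for backtest overfitting.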

Large Language Models (LLMs) accelerate research by summarizing thousands of documents, auto-generating code, and surfacing thematic signals. According to Goldman Sachs and OpenAI data, employees at companies with ChatGPT enterprise accounts save an average of 40 to 60 minutes per day, with 75% reporting they can now complete tasks they were previously unable to do at all.

The implementation challenge is getting these models into institutional-grade infrastructure: environments that meet SOC 2 requirements, handle real-time data pipelines, and integrate with existing risk systems. That's where capital markets engineering expertise determines whether research-stage models translate into live strategies.

Ensemble Methods and Gradient Boosting for Cross-Sectional Factor Research

Techniques like random forests and XGBoost rank securities by expected return in cross-sectional models, combining dozens of features in a way that is more robust to noise than any single signal. These approaches are standard in equity long/short and statistical arbitrage strategies, where the goal is identifying relative mispricings across a large universe of securities.

The practical advantage shows up in live trading: where single-model signals degrade quickly as markets adapt, ensemble predictions hold up longer because no single exploitable pattern dominates the output. Common configurations include:

- Combining momentum, value, and quality factors across 500+ securities simultaneously

- Using out-of-bag error estimates in random forests to gauge generalization and feature importance without a separate validation set

- Stacking gradient boosting outputs with linear models to capture both nonlinear and linear relationships
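
The robustness argument can be illustrated without a trained model. The sketch below uses rank averaging, a simplified stand-in for random forests or XGBoost, to combine several signals into one cross-sectional ranking. The tickers, scores, and function names are hypothetical.

```python
def rank_scores(scores):
    """Map {ticker: score} to {ticker: rank scaled to [0, 1]}; higher score gives higher rank."""
    ordered = sorted(scores, key=scores.get)
    n = len(ordered)
    return {t: (i / (n - 1) if n > 1 else 0.5) for i, t in enumerate(ordered)}

def ensemble_rank(signal_tables, weights=None):
    """Combine per-signal ranks into one ordering, best expected return first."""
    names = list(signal_tables)
    weights = weights or {n: 1.0 / len(names) for n in names}
    ranked = {n: rank_scores(signal_tables[n]) for n in names}
    # Only rank tickers covered by every signal.
    tickers = sorted(set.intersection(*(set(r) for r in ranked.values())))
    combined = {t: sum(weights[n] * ranked[n][t] for n in names) for t in tickers}
    return sorted(combined, key=combined.get, reverse=True)

signals = {
    "momentum": {"A": 0.9, "B": 0.1, "C": 0.5},
    "value": {"A": 0.2, "B": 0.3, "C": 0.9},
    "quality": {"A": 0.4, "B": 0.1, "C": 0.6},
}
print(ensemble_rank(signals))
```

Working in ranks rather than raw scores limits the influence of any one extreme value, which is part of why combined signals tend to be less noisy than their components.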

Where in the Investment Lifecycle AI & ML Add the Most Value

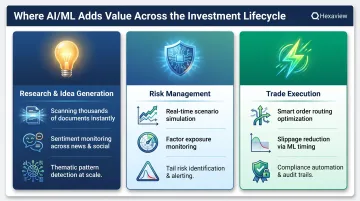

Research and Idea Generation

AI tools that scan thousands of documents, filings, and data feeds daily surface thematic investment ideas and anomalies faster than analyst teams, increasing the breadth of coverage without proportional headcount growth. The 40 to 60 minutes of daily time savings reported in the Goldman Sachs and OpenAI data translate to meaningful capacity expansion for research teams.

Beyond time savings, AI enables analysts to process information at a scale that was previously impossible. Capabilities that were once bandwidth-constrained now run continuously:

- Monitoring sentiment shifts across thousands of companies simultaneously

- Identifying thematic patterns across regulatory filings

- Detecting early signals of competitive disruption before they reach consensus

Risk Management and Portfolio Monitoring

ML models provide real-time risk assessment by simulating how macroeconomic scenarios, factor rotations, or liquidity shocks would impact portfolio exposures. That's a more dynamic and granular view than traditional VaR-based approaches, which rely on historical volatility and correlation assumptions that break down during regime changes.
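
The contrast between a history-based measure and a forward-looking scenario can be shown in a few lines. This sketch computes a simple historical VaR next to a scenario P&L from factor exposures; it uses a linear exposure model rather than an ML model, and the factor names, exposures, and shocks are hypothetical.

```python
def historical_var(pnl_history, confidence=0.95):
    """Loss level at the given quantile of past losses (positive number means a loss)."""
    if not pnl_history:
        raise ValueError("pnl_history is empty")
    losses = sorted(-p for p in pnl_history)
    index = min(int(confidence * len(losses)), len(losses) - 1)
    return losses[index]

def scenario_pnl(exposures, shocks):
    """P&L if each factor moves by its shock; exposures are dollars per unit factor move."""
    return sum(exposure * shocks.get(factor, 0.0) for factor, exposure in exposures.items())

daily_pnl = [12_000, -8_000, 5_000, -15_000, 3_000, 9_000, -4_000, 7_000]  # calm-period history
exposures = {"equity": 2_000_000, "credit": -500_000, "momentum": 1_000_000}
liquidity_shock = {"equity": -0.10, "credit": -0.05, "momentum": -0.08}

print("historical VaR:", historical_var(daily_pnl, confidence=0.95))
print("scenario P&L:", scenario_pnl(exposures, liquidity_shock))
```

When the history contains only calm periods, the VaR figure stays small while the scenario loss does not, which is the breakdown during regime changes that the paragraph above describes.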

AI-driven risk systems can identify emerging tail risks, monitor factor exposures continuously, and alert portfolio managers to concentrations that may not be visible through traditional risk decomposition methods.

Trade Execution and Operational Efficiency

From smart order routing to automated compliance checks, AI reduces the operational friction between signal generation and realized return. It helps firms scale their investment operations without linear increases in headcount or error rates.

Execution algorithms that learn from historical trade outcomes can adapt routing strategies to changing market microstructure, reducing information leakage and limiting market impact as liquidity conditions shift.

Risks and Limitations Hedge Funds Must Navigate

Overfitting and Model Decay

ML models trained on historical data can memorize noise rather than learn genuine market relationships, producing strong backtest results that fail in live trading. Even well-built models see their alpha erode as signals become crowded when more firms adopt similar approaches.
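
A standard guard against memorizing noise is walk-forward validation, where each model is trained only on data that precedes its test window. The generator below is a minimal sketch of that splitting scheme; the function name and the optional `embargo` gap between train and test are illustrative assumptions.

```python
def walk_forward_splits(n_obs, train_size, test_size, embargo=0):
    """Yield (train_indices, test_indices) with training strictly before testing.

    embargo leaves a gap between the two so that labels built from
    overlapping forward returns do not leak into the test window.
    """
    start = 0
    while start + train_size + embargo + test_size <= n_obs:
        train = range(start, start + train_size)
        test_start = start + train_size + embargo
        test = range(test_start, test_start + test_size)
        yield train, test
        start += test_size  # roll the window forward by one test period

for train, test in walk_forward_splits(n_obs=10, train_size=4, test_size=2, embargo=1):
    print(list(train), "->", list(test))
```

A strategy that performs well in-sample but poorly across these out-of-sample windows is showing the backtest-to-live gap before any capital is at risk.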

The August 2007 "quant quake" remains the canonical reference point. During the week of August 6, 2007, numerous quantitative long/short equity hedge funds experienced unprecedented losses. A simulated unleveraged contrarian strategy showed a 3-day loss of 6.85%, representing a 12-standard-deviation move. Goldman Sachs' Global Equity Opportunities Fund lost more than 30% in one week.

The cause: a liquidity-motivated forced liquidation that triggered cascading stop-loss and de-leveraging across similarly positioned funds, each running comparable factors and risk models.

The lesson is straightforward. When firms share ML training data and model architectures, apparent diversification can mask deep systemic crowding risk.

Black-Box Interpretability and Regulatory Exposure

Regulators and risk committees require clear rationale for investment decisions, but complex deep learning models often cannot explain why a signal was generated. This creates compliance risk, particularly in jurisdictions with increasing scrutiny over algorithmic trading.

The IOSCO consultation report on AI in capital markets (March 2025) and the FCA's multi-firm review of algorithmic trading controls (August 2025) signal that regulatory frameworks for AI-driven investment decisions are actively being developed. Firms must balance model sophistication with explainability requirements.

Data Quality and Security Risks

AI systems are only as reliable as the data they ingest. Stale, incomplete, or biased data produces flawed signals. Additionally, feeding sensitive portfolio data into unvetted or public-facing AI tools creates IP leakage and cybersecurity exposure that firms must actively manage.

Production-grade AI in capital markets demands rigorous controls across the full data pipeline:

- Data governance: enforce policies on what enters training and inference pipelines

- Lineage tracking: maintain auditable records of data sources and transformations

- Secure infrastructure: isolate sensitive portfolio data from public or unvetted AI tools

- Vendor vetting: assess third-party AI platforms for IP leakage and cybersecurity exposure before integration
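
Lineage tracking in particular lends itself to a short illustration. The sketch below records a content hash for each dataset version and links it to its parent, so a signal can be traced back through its transformations. The record fields, the function name, and the sample vendor feed are hypothetical; real deployments usually rely on a dedicated metadata store.

```python
import hashlib
import json
from datetime import datetime, timezone

def lineage_record(source, transform, payload, parent_hash=None):
    """Describe one dataset version: where it came from and a hash of its content."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")  # stable serialization
    return {
        "source": source,
        "transform": transform,
        "content_hash": hashlib.sha256(body).hexdigest(),
        "parent_hash": parent_hash,  # links this version to its input
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }

raw = {"ticker": "AAA", "prices": [100.0, 101.0, 100.5]}
raw_record = lineage_record("vendor_feed_x", "ingest", raw)

cleaned = {"ticker": "AAA", "returns": [0.01, -0.00495]}
clean_record = lineage_record(
    "vendor_feed_x", "compute_returns", cleaned, parent_hash=raw_record["content_hash"]
)
print(clean_record["parent_hash"] == raw_record["content_hash"])
```

Because the hash depends only on content, any silent change to an upstream file produces a different hash and breaks the chain visibly.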

Conclusion

AI and ML generate alpha not through any single model or technique, but through a structured pipeline that begins with better data and ends with more precise execution. The firms gaining lasting edge are those that have industrialized this pipeline while maintaining human oversight at critical decision points.

Building this capability requires both advanced ML engineering and deep capital markets domain knowledge working together. Neither alone is sufficient. ML engineers without financial markets expertise build technically impressive systems that miss critical market structure nuances. Domain experts without ML engineering capability struggle to translate investment insights into production-grade systems.

For hedge funds and asset managers looking to build or scale production-grade AI systems, the gap between research notebooks and live trading is rarely a modeling problem — it's an infrastructure and domain integration problem. Hexaview Technologies, recognized in the WealthTech 100 and with over a decade of capital markets expertise, specializes in exactly this: building production-grade data pipelines and AI systems with a SOC 2 certified delivery model that meets the security and compliance requirements financial firms actually face.

Adoption is no longer the question. Speed of execution is. The firms that move first to build the right infrastructure, governance, and human-in-the-loop workflows are the ones that convert models into consistent, risk-adjusted returns — before those signals get arbitraged away.

Frequently Asked Questions

Can AI generate alpha?

Yes, AI can contribute to alpha generation by identifying non-linear patterns and processing alternative data faster than human analysts. However, it does not guarantee alpha — model quality, data integrity, and market regime all affect real-world outcomes. Even sophisticated AI systems face signal decay as strategies become crowded.

How is AI used in hedge funds?

AI supports signal generation, automated research, portfolio optimization, risk monitoring, and execution. Practical use varies widely — quantitative equity funds apply different techniques than macro or credit-focused strategies.

How do hedge funds generate alpha?

Alpha is generated through superior information processing, better models, faster execution, or unique data access. AI/ML helps with all four by enabling faster analysis of more diverse data sources than traditional methods allow, while continuously adapting to changing market conditions.

What is a good alpha for a hedge fund?

Academic research suggests meaningful alpha is structurally rare. A study of 7,355 hedge funds found that only 7% generated statistically significant alpha, averaging approximately 1.2% annualized. Even modest consistent alpha (2-5% above benchmark) is considered meaningful when paired with low equity correlation.

What are the 4 types of ML?

The four types are supervised learning (prediction from labeled data), unsupervised learning (pattern discovery in unlabeled data), semi-supervised learning (combining both), and reinforcement learning (optimizing actions through trial and reward). In hedge fund contexts, supervised learning dominates signal generation while reinforcement learning is used for portfolio optimization.

What data sources do hedge funds feed into AI models?

Inputs span three tiers: traditional market data (price, volume), fundamental data (earnings, financials), and alternative data such as satellite imagery, credit card transactions, NLP-processed transcripts, and social sentiment. As traditional sources commoditize, proprietary and differentiated data is where real edge increasingly lives.