Introduction

Hedge funds and FinTech enterprises face a sobering reality: the firms deploying production-grade AI engineering are outperforming competitors by margins that were unimaginable just three years ago. 95% of hedge fund managers now use GenAI, up from 86% in 2024 — and 60% of institutional investors explicitly prefer allocating capital to funds with meaningful AI R&D budgets. Firms without production AI systems aren't just falling behind — they're losing allocations to those that have them.

Yet approximately 95% of generative AI pilots fail to deliver measurable P&L impact, according to MIT research. That gap — between adoption and production impact — comes down to one thing: off-the-shelf tools don't bend to proprietary fund strategies, compliance frameworks, or bespoke data architectures. Custom-built, production-grade systems do.

TLDR

- Hedge funds use production AI for quant research automation, real-time risk modeling, and alternative data pipelines at 1.5B+ ticks/day

- FinTech AI targets fraud ($5.75 total cost per $1 lost), credit for 45M underserved Americans, and AML false-positive reduction from the high-90% range to the 60% range

- The 10-20-70 rule: algorithms are 10% of the problem; organizational integration is 70% — where most pilots break down

- Production partners need capital markets domain depth, SOC 2 Type 2 / ISO 27001 certification, and a track record in regulated environments

Why AI Engineering Has Become a Priority for Hedge Funds and FinTech

AI adoption in financial services has moved past the pilot stage. AIMA's survey of 150 hedge fund managers representing $788 billion in AUM found 95% now grant staff access to GenAI tools, with 58% expecting increased front-office integration over the next year — up from just 20% in 2023.

McKinsey confirms the broader shift: over 75% of organizations use AI in at least one business function, with 17% attributing 5% or more of EBIT to generative AI.

But adoption rates mask a more fundamental shift. What separates financial AI engineering from generic enterprise AI is the operational constraint set:

- Low-latency execution: Hedge funds running high-frequency strategies measure model inference in microseconds. Real-time risk models must process live portfolio positions, market data, and macro signals fast enough to drive intraday decisions — batch processing at end-of-day no longer meets the standard.

- High-accuracy models: In credit underwriting or fraud detection, false positives carry direct cost. AML systems running at the industry-norm false positive rate of about 95% overwhelm compliance teams; best-in-class systems use ML to cut that rate to the 60% range, creating operational leverage competitors cannot match.

- Regulatory compliance by design: Financial AI must meet SR 11-7 model risk management guidelines, FCRA/ECOA explainability requirements for credit decisions, and audit standards for algorithmic trading. Generic AI frameworks don't account for these constraints; domain-specific engineering does.

- Proprietary data integration: The edge rarely comes from the algorithm alone. Connecting Bloomberg market feeds, prime brokerage APIs, core banking platforms, and alternative data sources into a unified extraction pipeline is fundamentally an infrastructure engineering problem — and the firms that solve it at scale create signals their competitors can't replicate.

The firms building durable advantage are those treating AI as an engineering discipline — with the same rigor applied to latency, compliance, and data architecture that they bring to portfolio construction itself.

AI Engineering Use Cases for Hedge Funds

Quantitative Research and Alpha Generation

Man AHL, the systematic investment arm of Man Group, has been trading machine learning-based systems in multi-strategy portfolios since early 2014, processing approximately 1.5 billion data ticks per day. The firm collaborates with the Oxford-Man Institute of Quantitative Finance and reports that ML provides "greater signalling power" than individual traditional information sources by combining numerous weak signals into coherent predictive systems.

The real engineering challenge is building the infrastructure beneath those models, infrastructure that:

- Processes high-frequency data without introducing look-ahead bias in backtests

- Retrains models continuously as market regimes shift

- Integrates with backtesting frameworks to test dozens of strategies in parallel

- Maintains production reliability when capital is on the line
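The look-ahead point in the list above can be illustrated with a minimal sketch. It assumes a toy momentum signal on a plain list of prices; the function names `lagged_signal` and `backtest` are hypothetical and not taken from any fund's framework. The idea is only that the position held over bar `t` is computed from prices up to bar `t-1`.

```python
from typing import List, Optional


def lagged_signal(prices: List[float], lookback: int) -> List[Optional[float]]:
    """Momentum signal for bar t built only from prices up to bar t-1."""
    signal: List[Optional[float]] = []
    for t in range(len(prices)):
        if t < lookback + 1:
            signal.append(None)  # not enough history yet
        else:
            # Reads prices[t-1] and prices[t-1-lookback]; never prices[t].
            signal.append(prices[t - 1] / prices[t - 1 - lookback] - 1.0)
    return signal


def backtest(prices: List[float], lookback: int) -> float:
    """Sum of bar returns, each traded in the direction of the lagged signal."""
    sig = lagged_signal(prices, lookback)
    pnl = 0.0
    for t in range(1, len(prices)):
        if sig[t] is None:
            continue
        position = 1.0 if sig[t] > 0 else -1.0
        pnl += position * (prices[t] / prices[t - 1] - 1.0)
    return pnl
```

A quick check for leakage is to change the final price and confirm that the signal for the final bar does not move. If it does, the backtest is reading data it would not have had at decision time.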

Funds that have invested in custom AI pipelines for alpha generation can iterate faster and exploit shorter-lived market inefficiencies. Two Sigma's 2026 AI in Investment Management outlook positions AI as central to systematic strategy development, and Bloomberg's analysis argues that if AI can reliably generate alpha, early movers stand to gain structural advantages.

Real-Time Risk Management and Scenario Analysis

For hedge funds with intraday position changes, end-of-day risk reports arrive too late. AI engineering enables systems that ingest live portfolio positions, market data, and macro signals to generate real-time risk metrics (VaR, CVaR, stress test scenarios) in seconds rather than overnight batch cycles.
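As a rough illustration of the metrics named above, the sketch below computes historical-simulation VaR and CVaR from a sample of P&L observations. It is a simplified assumption of how such a calculation might look, not a description of any vendor's method; the function name and the tail convention (floor of the tail count, at least one observation) are choices made for this example, and real systems differ on both.

```python
import math
from typing import List, Tuple


def historical_var_cvar(pnl: List[float], confidence: float = 0.95) -> Tuple[float, float]:
    """Return (VaR, CVaR) as positive loss figures from a P&L sample."""
    if not pnl:
        raise ValueError("pnl sample is empty")
    # Convert P&L to losses and sort so the largest loss comes first.
    losses = sorted((-x for x in pnl), reverse=True)
    # Number of observations in the tail beyond the confidence level.
    tail_n = max(1, math.floor(len(losses) * (1.0 - confidence) + 1e-9))
    tail = losses[:tail_n]
    var = tail[-1]              # smallest loss inside the tail
    cvar = sum(tail) / tail_n   # average loss inside the tail
    return var, cvar
```

In a streaming setting the same calculation would be re-run on a rolling window as positions and prices update, which is where the latency requirement comes from.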

The engineering requirements include:

- Streaming data pipelines that handle continuous market data feeds

- Low-latency model inference to compute risk metrics in seconds, not hours

- Integration with prime brokerage systems and institutional risk platforms like Axioma or Bloomberg PORT

These capabilities are already reaching production. SimCorp recently added AI-powered stress testing to Axioma Risk, enabling portfolio managers to describe scenarios in plain English and have the system automatically translate them into factor shocks. Legacy batch architectures could never support that kind of natural-language-driven risk analysis.

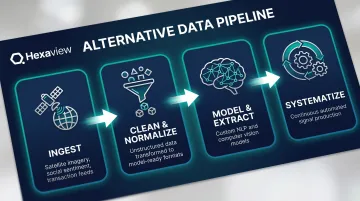

Alternative Data Ingestion and Signal Extraction

The alternative data market reached $2.5 billion in annual spending by 2024, with growth accelerating to 33% that year. Neudata's analysis of 2,338 datasets shows the market has expanded from 978 tracked datasets in 2020, and 53% of fund managers now use alternative data.

But access to datasets is commoditized. The competitive edge lies in engineering pipelines that:

- Ingest satellite imagery, web scraping, earnings call transcripts, credit card transaction feeds, and social sentiment data at scale

- Clean and normalize unstructured data sources into model-ready formats

- Build custom NLP models to extract sentiment from 10-Ks or computer vision models to count cars in retail parking lots

- Systematize signal extraction so it runs continuously, not as one-off research projects
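To make the text-to-signal step in the list above concrete, here is a deliberately small sketch of a lexicon-based sentiment score. The word lists and the function name are invented for illustration; a production pipeline would more likely use a curated finance lexicon or a trained NLP model, so treat this only as the shape of the transformation from raw text to a number a model can consume.

```python
import re

# Tiny illustrative word lists (assumptions, not a real finance lexicon).
POSITIVE = {"growth", "improved", "strong", "record", "exceeded"}
NEGATIVE = {"decline", "impairment", "weak", "litigation", "shortfall"}


def sentiment_score(text: str) -> float:
    """(positive - negative) / matched words, in [-1, 1]; 0.0 if nothing matches."""
    tokens = re.findall(r"[a-z]+", text.lower())
    pos = sum(1 for t in tokens if t in POSITIVE)
    neg = sum(1 for t in tokens if t in NEGATIVE)
    total = pos + neg
    return 0.0 if total == 0 else (pos - neg) / total
```

Running a function like this on every new filing or transcript as it arrives, and storing the score with a timestamp, is what turns a one-off research script into a continuous signal.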

Most funds have data licenses. Few have the engineering capacity to extract systematic, production-grade signals from those sources. Building that capacity — the pipelines, the models, the monitoring — is where the actual differentiation happens.

Regulatory Reporting and Compliance Automation

Regulatory obligations across SEC Form PF, AIFMD, MiFID II, and Dodd-Frank trade reporting create a significant engineering burden. Compliance operating costs for financial institutions have risen by more than 60% compared with pre-financial-crisis levels.

The SEC planned to finalize 21 new regulations in 2024 alone — that pace shows no sign of slowing.

AI engineering can automate:

- Extraction, validation, and formatting of required disclosures from trade data and portfolio systems

- Generation of investor letters and regulatory summaries, freeing up senior staff time

- Real-time surveillance for insider trading patterns, wash trading, and other compliance risks

Hedge funds are already deploying GenAI to auto-generate investor letters and summarize regulatory documents, reducing manual administrative work and lowering the risk of reporting errors.

AI Engineering Use Cases for FinTech Enterprises

Fraud Detection and Financial Crime Prevention

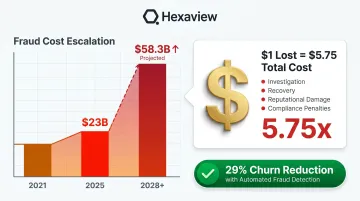

Modern fraud detection requires AI models that process thousands of transactions per second, identify subtle behavioral anomalies, and adapt in real time to evolving attack patterns. Rule-based systems cannot meet this standard. LexisNexis reports that every $1 lost to fraud now costs US financial institutions $5.75, up 25% from $4.00 in 2021. Organizations using automated fraud detection systems reduced customer churn by 29% compared to manual processes.

Custom AI engineering builds ensemble models combining:

- Graph neural networks to detect collusion rings and synthetic identity fraud

- Anomaly detection algorithms that flag deviations from normal behavioral patterns

- Behavioral biometrics to distinguish legitimate users from account takeovers
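The anomaly detection component in the list above can be sketched with a robust z-score based on the median and the median absolute deviation (MAD). This is one simple, well-known technique and only an assumption about what such a component might contain; the function name and the 3.5 threshold are illustrative, and a production ensemble would combine many more signals.

```python
from statistics import median
from typing import List


def flag_anomalies(amounts: List[float], threshold: float = 3.5) -> List[bool]:
    """Flag values whose modified z-score (median/MAD based) exceeds the threshold."""
    med = median(amounts)
    mad = median([abs(a - med) for a in amounts])
    if mad == 0:
        # More than half the values are identical; this sketch flags nothing.
        return [False] * len(amounts)
    # 0.6745 scales the MAD so the score is comparable to a standard z-score.
    return [abs(0.6745 * (a - med) / mad) > threshold for a in amounts]
```

Using the median rather than the mean keeps a single very large transaction from inflating the baseline it is being compared against.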

These systems must be tuned to each institution's specific transaction mix, risk tolerance, and customer base, a level of customization that off-the-shelf SaaS platforms cannot provide. With global fraud losses projected to climb from $23 billion in 2025 to $58.3 billion, institutions that delay custom model deployment will find themselves absorbing losses that generic tools were never designed to catch.

AI-Driven Credit Scoring and Underwriting

Approximately 45 million American adults are credit-invisible or unscorable, with 26 million having no credit record at all and 19.4 million having records too thin to generate a FICO score. AI engineering enables FinTechs to build alternative credit models that incorporate:

- Bank transaction history and cash flow patterns

- Utility and rent payment data

- Behavioral signals from application behavior and engagement

These models expand credit access and improve default prediction accuracy — particularly for neobanks serving thin-file populations — but the gains only hold if the models can explain their decisions to regulators. Explainability is the core engineering constraint:

- Fair Credit Reporting Act (FCRA): Requires adverse action notices specifying reasons for denial

- Equal Credit Opportunity Act (ECOA): Prohibits discrimination and mandates explanation of adverse decisions

- SR 11-7 Model Risk Management: Requires validation, documentation, and governance for credit models

FinTechs cannot deploy pure black-box models. They need either inherently interpretable models (decision trees, linear models with clear feature attribution) or post-hoc explanation methods such as SHAP values. Building them correctly demands engineers who understand both ML architecture and financial regulation.
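One way to picture feature attribution for adverse action notices is the linear-model case, where each feature's contribution is its weight times the applicant's deviation from a reference applicant. The sketch below assumes that setup; the function name, feature names, and weights are hypothetical, and nothing here represents a validated or compliant credit model.

```python
from typing import Dict, List


def adverse_action_reasons(
    weights: Dict[str, float],
    applicant: Dict[str, float],
    baseline: Dict[str, float],
    top_n: int = 2,
) -> List[str]:
    """Rank features by how far they pushed the score below the baseline applicant."""
    contributions = {
        name: weights[name] * (applicant[name] - baseline[name]) for name in weights
    }
    # Keep only features that lowered the score, most negative first.
    negative = sorted((c, name) for name, c in contributions.items() if c < 0)
    return [name for _, name in negative[:top_n]]


# Hypothetical example inputs.
weights = {"monthly_cash_flow": 0.002, "on_time_rent_ratio": 3.0, "overdrafts_12m": -0.5}
applicant = {"monthly_cash_flow": 1500.0, "on_time_rent_ratio": 0.6, "overdrafts_12m": 4.0}
baseline = {"monthly_cash_flow": 2000.0, "on_time_rent_ratio": 0.95, "overdrafts_12m": 1.0}
reasons = adverse_action_reasons(weights, applicant, baseline)
```

With these inputs the two largest negative contributors are the overdraft count and the rent payment ratio, which is the kind of ranked output a reason-code mapping would then translate into notice language.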

Intelligent Customer Experience and Hyper-Personalization

Financial services companies using personalized marketing increase revenue by more than 30%, according to BCG. FinTech enterprises are deploying AI to:

- Personalize financial product recommendations based on transaction behavior and life events

- Proactively surface insights, such as flagging unusual spending or predicting cash flow shortfalls

- Power conversational interfaces for banking and investment apps

Consumers now rank financial guidance as a top banking priority, with 52% identifying it as essential. Synovus, a US-based financial services company, deployed more than 60 distinct AI-driven insights and engaged 20% of its digital users, using personalization to drive deposit growth and deepen relationships.

Regulatory Technology and Automated Compliance

FinTech companies operating across multiple jurisdictions face escalating compliance complexity (KYC, AML, BSA, GDPR, PCI-DSS) that manual processes cannot keep pace with. The annual cost of financial crime compliance in the US and Canada is $61 billion, with 99% of institutions reporting cost increases.

AI engineering builds automated compliance pipelines that:

- Monitor transactions in real time against behavioral baselines

- Screen against sanctions lists and PEP databases with minimal latency

- Generate audit-ready documentation automatically
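The screening step in the list above can be sketched as fuzzy name matching against a watchlist. This example uses Python's standard `difflib.SequenceMatcher`; the `normalize` and `screen_name` helpers, the sample names, and the 0.85 threshold are assumptions for illustration. Real screening engines add transliteration, alias handling, and date-of-birth or jurisdiction checks.

```python
from difflib import SequenceMatcher
from typing import List, Tuple


def normalize(name: str) -> str:
    """Lowercase, drop commas, and sort tokens so 'Petrov, Ivan' matches 'Ivan Petrov'."""
    return " ".join(sorted(name.lower().replace(",", " ").split()))


def screen_name(
    name: str, watchlist: List[str], threshold: float = 0.85
) -> List[Tuple[str, float]]:
    """Return watchlist entries whose similarity to the name meets the threshold."""
    candidate = normalize(name)
    hits = []
    for entry in watchlist:
        score = SequenceMatcher(None, candidate, normalize(entry)).ratio()
        if score >= threshold:
            hits.append((entry, round(score, 3)))
    # Highest-scoring match first.
    return sorted(hits, key=lambda h: h[1], reverse=True)
```

The threshold is the main tuning lever: lowering it catches more spelling variants but raises the alert volume analysts must clear.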

The critical improvement is in false positive reduction. McKinsey reports that many institutions run AML false positive rates in the high-90% range, while best-in-class players using AI have reduced rates to the 60% range. This represents enormous operational savings: fewer analysts reviewing false alerts, faster investigations, and lower regulatory risk. In EMEA, 81% of institutions plan to shift from rule-based engines to AI-driven solutions.
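A small worked calculation shows why that drop matters so much. The sketch assumes that "false positive rate" here means the share of alerts that turn out to be false, which is the usual sense in AML alert triage; the function name is made up for this example.

```python
def alerts_per_true_hit(false_positive_share: float) -> float:
    """Average number of alerts reviewed for each genuine hit."""
    if not 0.0 <= false_positive_share < 1.0:
        raise ValueError("share must be in [0, 1)")
    return 1.0 / (1.0 - false_positive_share)


legacy = alerts_per_true_hit(0.95)         # roughly 20 alerts per genuine hit
best_in_class = alerts_per_true_hit(0.65)  # roughly 2.9 alerts per genuine hit
```

Under that assumption, moving from 95% to 65% cuts the review workload per genuine hit by about a factor of seven, even though the headline rate falls by only 30 percentage points.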

The 10-20-70 Rule and Why Financial AI Projects Need Expert Engineering

The MIT NANDA Initiative found that approximately 95% of generative AI pilots fail to deliver measurable P&L impact. Only about 5% achieve rapid revenue acceleration. McKinsey confirms the gap: while 88% of companies use AI, only 39% can trace actual financial benefit to their initiatives.

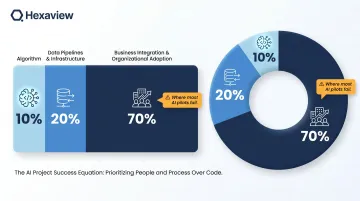

The 10-20-70 rule explains why:

- 10% of effort goes into the algorithm itself

- 20% of effort goes into data pipelines and model infrastructure

- 70% of effort goes into business integration, process redesign, and organizational adoption

Most financial institutions over-invest in algorithms and platforms (spending roughly 80% of their AI budget on tools) while underinvesting in the organizational work that determines production success.

For hedge funds and FinTech, this gap is especially costly. A risk model that runs in a research notebook is not the same as one that runs reliably in production, connected to live data feeds, with proper monitoring, failover logic, and audit logging. This is the engineering gap that separates proof-of-concept from real business value.

What the work beyond the algorithm (the 20% and the 70%) requires in financial services:

- Data engineering: Building pipelines that handle market data feeds, alternative data sources, and legacy system integration

- MLOps infrastructure: Deploying model versioning, continuous retraining, A/B testing frameworks, and performance monitoring

- System integration: Connecting AI models to OMS/EMS platforms, prime brokerage APIs, core banking systems, and risk platforms

- Governance frameworks: Implementing model risk management (SR 11-7), audit trails, explainability mechanisms, and compliance documentation
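The performance monitoring item in the list above is often implemented with a drift metric. The sketch below computes the Population Stability Index (PSI), a measure commonly used in credit risk to compare a model input's or score's distribution in production against its training distribution. The function name, the bin edges, and the epsilon floor are assumptions for this example.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], edges: Sequence[float]
) -> float:
    """PSI between two samples, binned by the given ascending edges."""

    def shares(values: Sequence[float]) -> List[float]:
        if not values:
            raise ValueError("sample is empty")
        counts = [0] * (len(edges) + 1)
        for v in values:
            # Bin index = number of edges the value has reached or passed.
            counts[sum(1 for e in edges if v >= e)] += 1
        # Floor each share so an empty bin does not produce log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A common rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, though the thresholds a given model risk policy adopts may differ. Wiring a check like this to an alert and an audit log entry is the kind of work that separates a notebook model from a monitored production one.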

The 70%, covering change management and integration, is where domain expertise in financial services becomes critical. An AI engineering partner with hands-on capital markets experience, one who knows trading system constraints, regulatory obligations, and institutional data architecture, can cut months off this phase in a way a generalist technology firm cannot.

What to Look for in an AI Engineering Partner for Financial Services

Domain Expertise in Capital Markets or FinTech

Generic software engineering skills cannot substitute for domain expertise in financial services. The right partner should have proven experience with:

- Financial data infrastructure: market data feeds, OMS/EMS integration, core banking APIs

- Regulatory compliance requirements: SR 11-7, FCRA, ECOA, MiFID II, Dodd-Frank

- Performance and reliability standards of production financial systems

Hexaview brings more than 10 years of experience in capital markets and wealth management, with recognition on the WealthTech 100 list and work with clients like LPL Financial and Addepar. That depth of domain fluency matters: most AI initiatives in financial services fail not from weak models, but from misalignment with the regulatory, data, and operational realities of the industry.

Security and Compliance Non-Negotiables

For any AI partner working with financial data, independently validated security practices are baseline requirements:

- SOC 2 Type 2 certification: An independent audit of how controls operated over a review period, covering security and, where in scope, availability, processing integrity, confidentiality, and privacy; required by most institutional clients before contract execution

- ISO 27001 certification: The international standard for information security management systems, relevant for cross-border engagements and global institutional mandates

- Private or hybrid cloud deployment capability: Many financial institutions cannot use public cloud for sensitive data; partners must support on-premises or hybrid architectures

Financial institutions should treat these certifications as minimum requirements, not selling points. Any AI partner without independently audited security controls introduces risk that no project outcome justifies.

Technical Depth and Collaborative Delivery Model

The best AI engineering partners bring both technical depth and a collaborative approach — embedding with the client's quant, data science, and technology teams to build systems the internal team can own and evolve.

Hexaview's client engagements have produced a 97% rise in data accuracy and over 20,000 man-hours saved through automation and process optimization. Those numbers come from embedding directly with client quant and engineering teams — not from handing off a finished product, but from building systems the internal team can operate and extend independently.

Frequently Asked Questions

What are the use cases for AI agents in the enterprise?

AI agents automate repetitive workflows in IT, HR, and compliance, extract insights from unstructured data, and execute real-time decisions such as fraud alerts, trade surveillance, and credit approvals. In financial services, AI agents now run core risk management and customer engagement workflows at leading institutions.

What is the 10-20-70 rule for AI?

The 10-20-70 rule describes how only about 10% of an AI project's effort lies in the algorithm, 20% in data and model infrastructure, and 70% in business integration and change management. Ignoring the 70% is why most AI pilots in finance never reach production.

How is AI specifically used in hedge funds?

Hedge funds use AI for quantitative strategy development, real-time risk analytics, alternative data processing, and compliance automation. Leading funds have shifted from using AI as a research aid to running it continuously across trading, risk, and operations.

What compliance and security requirements apply to AI in financial services?

Financial institutions using AI must navigate SEC rules on algorithmic systems, AML/BSA obligations, model risk management guidelines (SR 11-7), and data privacy regulations. Partners must hold certifications like SOC 2 Type 2 and ISO 27001, and AI models must meet explainability requirements for credit or compliance decisions.

What is the difference between AI tools and AI engineering services for FinTech?

AI tools (pre-built SaaS platforms) deploy quickly but offer limited customization. AI engineering services build systems tailored to a firm's data architecture, regulatory environment, and business logic — which is why hedge funds and regulated FinTechs typically need custom builds rather than off-the-shelf solutions.

How long does it typically take to implement a custom AI engineering solution for a hedge fund or FinTech?

A focused use case like fraud detection can move from scoping to production in 8-16 weeks. Broader builds involving multiple data sources and regulatory layers typically take 6-12 months. Infrastructure readiness and organizational alignment — not model development — are what drive the timeline.