Introduction

Wealth management and FinTech leaders face a strategic paradox. AI promises real efficiency gains: faster onboarding, streamlined compliance workflows, and advisor productivity improvements that can reshape operations. Yet deploying AI without proper governance in a fiduciary environment creates regulatory exposure, audit failures, and client trust risks that far outweigh any speed advantage.

Most firms are stuck between two failure modes. Some move fast, deploying ungoverned AI pilots that boost efficiency but create compliance blind spots. These organizations excel at innovation but struggle when regulators ask, "Why did your system make that decision?" Others move too cautiously, suffocated by compliance reviews that delay every experiment while competitors who've solved the governance puzzle compress cycle times by 30-40%.

This article addresses the governance-innovation tension head-on. It covers:

- Why governance is non-negotiable in wealth management and FinTech

- Which automation use cases deliver measurable value

- What "governed AI automation" actually means — a two-layer framework covering how systems think and what they're allowed to do

- Common deployment challenges and a practical roadmap from pilot to production

TLDR

- Two-thirds of EMEA banks and insurers use AI or machine learning, yet only 24% of organizations globally have mature AI governance frameworks

- Top use cases: KYC/AML, advisor productivity, regulatory reporting, and fraud detection

- Governed AI requires two layers: how systems think (AI governance) and what they can do (execution governance)

- Start assistive, then graduate workflows only after validated performance with full audit trails

Why Governed AI Automation Is a Strategic Imperative for Wealth Management and FinTech

Wealth management and FinTech firms operate under a higher governance bar than most industries. Three factors converge to make AI governance non-negotiable:

Fiduciary duty and regulatory oversight. Firms are accountable to SEC and FINRA supervision, manage sensitive data for high-net-worth clients, and execute financial actions that are often irreversible. A single compliance failure can go well beyond routine fines and lead to nine-figure penalties. In 2022 the SEC charged Charles Schwab's robo-adviser subsidiaries $187 million for misleading clients about algorithmic investment advice, showing that regulators will enforce existing fiduciary obligations against AI-driven tools.

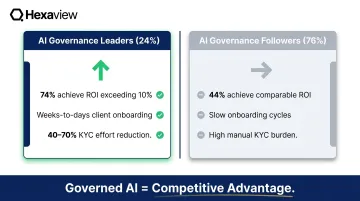

The governance gap is real. Two-thirds of EMEA banks and insurers now use AI or machine learning models, yet more than 50% cite transparency and explainability as unresolved hurdles. Only 24% of organizations globally qualify as AI governance "Leaders" with mature frameworks. That leaves roughly three-quarters deploying AI without the mature governance infrastructure a regulatory examination will test.

Competitive pressure is intensifying. Firms that have solved governance are not only compliant but also faster. Among pioneers, 74% report ROI above 10% on advanced AI initiatives, compared with 44% of followers. These firms compress client onboarding from weeks to days, reduce KYC effort by 40-70%, and free advisors to focus on high-value client relationships rather than administrative tasks.

Governed AI automation means systematically redesigning how decisions are made, evidence is captured, controls operate, and humans supervise intelligent systems, with every step auditable. This goes well beyond RPA or bolt-on chatbots.

Firms that achieve this don't just pass audits—they move faster, onboard clients sooner, and reduce operational costs because their workflows were built for intelligent systems rather than retrofitted around them.

High-Value AI Automation Use Cases for Wealth Management and FinTech

The greatest value from AI automation comes from end-to-end workflows where multiple steps — document intake, verification, decision support, communication, and evidence capture — are governed by a single orchestrated system. Hexaview has delivered such solutions for clients including LPL Financial and Addepar, drawing on over a decade of capital markets and wealth management expertise.

Client Onboarding and KYC/AML Automation

Banks commonly assign 10-15% of their full-time equivalents to KYC and AML operations alone, yet automation rates remain only 10-20% even at institutions that have invested heavily in technology. Financial institutions filed 4.7 million Suspicious Activity Reports in FY2024, an average of about 12,870 per day. Manual processes cannot sustain a workload of that size.

AI agents can:

- Extract identity data from onboarding documents (passports, driver's licenses, utility bills)

- Calculate risk scores based on regulatory logic and client attributes

- Apply completeness validation to flag missing documentation

- Detect anomalies requiring human review

- Generate a defensible audit trail capturing every extraction, calculation, and decision

This reduces manual KYC effort by 40-70% while strengthening compliance documentation quality, since every step is logged and traceable.
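The KYC steps above can be sketched as a small pipeline. This is a minimal Python illustration that assumes document extraction has already produced a dictionary of fields upstream. The field names, country codes, risk weights, and review threshold are hypothetical placeholders, not real regulatory logic. The sketch is meant to show a pattern: every check writes to the case's audit log, and even a clean case stops at human approval.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy parameters; a real firm derives these from its written KYC/AML program.
REQUIRED_FIELDS = ("full_name", "date_of_birth", "document_number", "address")
HIGH_RISK_COUNTRIES = {"XX", "YY"}  # placeholder country codes, not a real list
REVIEW_THRESHOLD = 50  # hypothetical score at or above which a human must review


@dataclass
class KycCase:
    client_id: str
    fields: dict  # output of document extraction, assumed to exist upstream
    audit_log: list = field(default_factory=list)

    def log(self, step: str, detail: dict) -> None:
        # Append a timestamped record so every check can be replayed during an exam.
        entry = {"ts": datetime.now(timezone.utc).isoformat(), "step": step}
        entry.update(detail)
        self.audit_log.append(entry)


def find_missing_fields(case: KycCase) -> list:
    missing = [name for name in REQUIRED_FIELDS if not case.fields.get(name)]
    case.log("completeness_check", {"missing": missing})
    return missing


def score_risk(case: KycCase) -> int:
    # Additive scoring with illustrative weights.
    score = 0
    if case.fields.get("residence_country") in HIGH_RISK_COUNTRIES:
        score += 40
    if case.fields.get("is_pep"):
        score += 40
    if not case.fields.get("source_of_funds"):
        score += 20
    case.log("risk_score", {"score": score})
    return score


def route_case(case: KycCase) -> str:
    missing = find_missing_fields(case)
    score = score_risk(case)
    # Clean cases still go to an approver; the AI never opens the account itself.
    outcome = "human_review" if missing or score >= REVIEW_THRESHOLD else "ready_for_approval"
    case.log("routing", {"outcome": outcome})
    return outcome


kyc_case = KycCase("C-001", {
    "full_name": "A. Client", "date_of_birth": "1970-01-01",
    "document_number": "P1234567", "address": "1 Main St",
    "residence_country": "US", "source_of_funds": "salary",
})
print(route_case(kyc_case))
for entry in kyc_case.audit_log:
    print(entry["step"], entry)
```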

Advisor Productivity and Portfolio Intelligence

Financial advisors using AI save 3-5 hours per week on document review, research summarization, and compliant communication drafting. Over the next decade, advisor productivity improvements of 10-20% through AI and technology are essential to address a projected shortage of 100,000 advisors by 2034.

AI can analyze a client's complete financial picture — risk tolerance, goals, behavioral history, existing holdings — to:

- Suggest portfolio adjustments based on current market conditions and client objectives

- Summarize relevant market research and economic reports

- Draft personalized client communications

- Surface regulatory alerts or compliance flags tied to client accounts

All outputs require human advisor review before any action is taken, ensuring judgment and client relationships remain advisor-led.
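To make the review gate concrete, the sketch below treats "suggest a portfolio adjustment" as drift from a target allocation. This is a deliberately simple stand-in. The drift rule, the 5% tolerance, and the `Suggestion` and `approve` names are assumptions for illustration. A production engine would also weigh risk tolerance, goals, taxes, and market conditions. The part that matters here is that suggestions are created in a pending state and change only through an advisor's explicit approval.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class Suggestion:
    asset_class: str
    current_weight: float
    target_weight: float
    status: str = "pending_advisor_review"  # drafts never execute on their own
    approved_by: Optional[str] = None


def suggest_rebalance(holdings: dict, targets: dict, tolerance: float = 0.05) -> list:
    """Flag asset classes whose weight drifts more than `tolerance` from target."""
    total = sum(holdings.values())
    suggestions = []
    for asset_class, target in targets.items():
        current = holdings.get(asset_class, 0.0) / total
        if abs(current - target) > tolerance:
            suggestions.append(Suggestion(asset_class, round(current, 4), target))
    return suggestions


def approve(suggestion: Suggestion, advisor_id: str) -> Suggestion:
    # The advisor's decision is recorded as a new, immutable object.
    return replace(suggestion, status="approved", approved_by=advisor_id)


holdings = {"equity": 70_000, "fixed_income": 20_000, "cash": 10_000}
targets = {"equity": 0.60, "fixed_income": 0.30, "cash": 0.10}
for s in suggest_rebalance(holdings, targets):
    print(s.asset_class, s.current_weight, s.target_weight, s.status)
```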

Regulatory Reporting and Compliance Monitoring

High-volume compliance reviews are one of the costliest drains on analyst time. AI addresses this by automating evidence packaging for audit trails and triaging AML alerts — so instead of manually sifting through thousands of alerts, analysts receive structured summaries with:

- The reasoning and entity context behind each flag

- Transaction-level detail relevant to the review

- Recommended dispositions, with human approval required before any action is finalized

The stakes justify the investment. The financial industry detects only approximately 2% of global financial crime flows despite increasing compliance spending by up to 10% annually from 2015-2022. Better tooling, not more headcount, is the lever.
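Here is a sketch of the alert-triage pattern described above. It assumes a hypothetical alert format in which each transaction is a dictionary. The two red-flag rules are illustrative stand-ins, not a complete AML rule set: repeated deposits just under the $10,000 cash reporting threshold, and cross-border transfers. Each summary carries the reasons, the supporting transactions, and a recommended disposition. The final disposition stays empty until a named analyst sets it.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical red-flag rules for illustration only; production rules come from the AML program.
NEAR_THRESHOLD = (9_000, 10_000)  # deposits just under the $10,000 cash reporting threshold
MIN_NEAR_THRESHOLD_COUNT = 3


@dataclass
class AlertSummary:
    alert_id: str
    entity: str
    reasons: list
    transactions: list
    recommended_disposition: str
    final_disposition: Optional[str] = None
    approved_by: Optional[str] = None


def triage(alert_id: str, entity: str, transactions: list) -> AlertSummary:
    low, high = NEAR_THRESHOLD
    near = [t for t in transactions if low <= t["amount"] < high]
    foreign = [t for t in transactions if t.get("country", "US") != "US"]
    reasons, relevant = [], {}
    if len(near) >= MIN_NEAR_THRESHOLD_COUNT:
        reasons.append(f"{len(near)} deposits between ${low:,} and ${high:,} (possible structuring)")
        relevant.update({t["id"]: t for t in near})
    if foreign:
        reasons.append(f"{len(foreign)} cross-border transfer(s)")
        relevant.update({t["id"]: t for t in foreign})
    recommended = "escalate_for_sar_review" if reasons else "close_no_action"
    # Only the transactions that explain a fired rule are attached, de-duplicated by id.
    return AlertSummary(alert_id, entity, reasons, list(relevant.values()), recommended)


def finalize(summary: AlertSummary, analyst_id: str, disposition: str) -> AlertSummary:
    # The recommendation is advisory; only a named analyst can set the final disposition.
    if not analyst_id:
        raise PermissionError("a named analyst must approve the disposition")
    summary.final_disposition = disposition
    summary.approved_by = analyst_id
    return summary


txns = [
    {"id": "t1", "amount": 9_500, "country": "US"},
    {"id": "t2", "amount": 9_800, "country": "US"},
    {"id": "t3", "amount": 9_900, "country": "US"},
    {"id": "t4", "amount": 200, "country": "FR"},
]
summary = triage("A-42", "Client 123", txns)
print(summary.reasons, summary.recommended_disposition)
```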

Fraud Detection and Transaction Monitoring

Rules-based systems miss the behavioral patterns that distinguish sophisticated fraud from normal activity. 77% of anti-fraud professionals report increases in deepfake social engineering, yet only 7% say their organizations are "more than moderately prepared" to detect AI-fueled fraud.

AI-powered fraud detection:

- Scores transactions based on behavioral anomalies and historical patterns

- Summarizes the reasoning and context behind each flag

- Feeds structured investigation summaries directly into human review queues

- Maintains full traceability from detection through resolution
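The sketch below is a deliberately simple stand-in for the behavioral models described above. It scores a transaction by how far its amount sits from the client's own history, measured in standard deviations. The z-score technique, the 3.0 cut-off, and the in-memory `review_queue` are assumptions for illustration; real fraud models use many more behavioral features. Each result carries a case ID, a plain-language reason, and a status, so it can be traced from detection through resolution.

```python
import math
import statistics
import uuid

Z_THRESHOLD = 3.0  # hypothetical cut-off; tuned per portfolio in practice
review_queue = []  # stand-in for a real case-management queue


def score_transaction(amount: float, history: list) -> dict:
    """Score one transaction against the client's past amounts (history needs >= 2 points)."""
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        z = 0.0 if amount == mean else math.inf
    else:
        z = (amount - mean) / stdev
    flagged = abs(z) > Z_THRESHOLD
    result = {
        "case_id": str(uuid.uuid4()),  # trace id carried from detection to resolution
        "amount": amount,
        "z_score": z,
        "flagged": flagged,
        "reason": f"amount is {z:.1f} standard deviations from the client's mean of {mean:,.2f}",
        "status": "open" if flagged else "below_threshold",
    }
    if flagged:
        review_queue.append(result)  # a human investigator owns the outcome
    return result


history = [100, 120, 90, 110, 105]
print(score_transaction(110, history)["flagged"])
print(score_transaction(5_000, history)["reason"])
```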

Client Communications and Document Generation

Generative AI drafts regulatory notices, advisor reports, and client-facing communications grounded in approved content libraries and policy sources. Every prompt, retrieved source, and final output is logged as part of the case record. Discretionary language requires a structured justification, and every draft must be reviewed before distribution.
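The logging requirement can be expressed as a record built at generation time. In this minimal sketch, the approved-source IDs and the record layout are hypothetical. The function refuses drafts that cite no source, cite sources outside the library, or use discretionary language without a justification. Every accepted record starts in a `pending_review` state. Hashing the output with SHA-256 is one way, among others, to show that the distributed text is the text that was reviewed.

```python
import hashlib
from datetime import datetime, timezone

# Hypothetical IDs for an approved content library; real IDs come from the firm's CMS.
APPROVED_SOURCES = {"disclosure-v3", "fee-schedule-2024", "market-commentary-q2"}


def build_comm_record(prompt: str, source_ids: list, draft: str,
                      discretionary: bool = False, justification: str = "") -> dict:
    if not source_ids:
        raise ValueError("draft must cite at least one approved source")
    unapproved = [s for s in source_ids if s not in APPROVED_SOURCES]
    if unapproved:
        raise ValueError(f"draft cites unapproved sources: {unapproved}")
    if discretionary and not justification.strip():
        raise ValueError("discretionary language requires a structured justification")
    return {
        "created_at": datetime.now(timezone.utc).isoformat(),
        "prompt": prompt,
        "sources": list(source_ids),
        "output": draft,
        # A content hash lets reviewers confirm the distributed text matches the approved text.
        "output_sha256": hashlib.sha256(draft.encode("utf-8")).hexdigest(),
        "justification": justification or None,
        "status": "pending_review",  # nothing is sent until a reviewer signs off
    }


record = build_comm_record(
    "Summarize the 2024 fee change for client letters",
    ["fee-schedule-2024"],
    "Effective next quarter, the advisory fee schedule changes as follows...",
)
print(record["status"], record["output_sha256"][:12])
```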

What "Governed AI Automation" Actually Means: The Two-Layer Framework

Effective governed AI automation requires two distinct and complementary layers of oversight. Many firms implement only the first layer, which leaves them exposed to execution-level risk.

AI Governance: How Systems Think

AI governance in wealth management and FinTech ensures models are developed and deployed transparently. This includes:

- Documented model behavior - what the model does, what data it uses, how it was trained

- Data quality standards - validation, lineage tracking, and version control

- Bias management processes - testing for fairness and discriminatory outcomes

- Explainability requirements - clear documentation of "why" behind every AI-assisted decision

- Human escalation mechanisms - defined thresholds for routing to human review

- Lifecycle monitoring - ongoing performance tracking and model retraining schedules
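Bias testing can start with a simple comparison of outcomes across groups. The sketch below computes an adverse impact ratio, which is the lowest group approval rate divided by the highest. It then compares that ratio with 0.8, the "four-fifths" heuristic borrowed from US employment-selection practice. That threshold is an assumption here, not a standard that financial regulators prescribe, and a real fairness review would use several metrics.

```python
from collections import defaultdict

FOUR_FIFTHS = 0.8  # screening heuristic borrowed from US employment-selection guidance


def approval_rates(decisions: list) -> dict:
    """decisions: (group_label, approved) pairs from a model's test set."""
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        approvals[group] += int(approved)
    return {g: approvals[g] / totals[g] for g in totals}


def adverse_impact_ratio(decisions: list) -> float:
    rates = approval_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so no group is favored
    return min(rates.values()) / highest


decisions = [("A", True)] * 8 + [("A", False)] * 2 + [("B", True)] * 5 + [("B", False)] * 5
ratio = adverse_impact_ratio(decisions)
print(f"ratio={ratio:.3f}, passes={ratio >= FOUR_FIFTHS}")
```

A failing ratio is a prompt to investigate the model and its data, not proof of discrimination on its own.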

Regulators examining AI-assisted decisions need clear answers. Black-box models that cannot document inputs, policy sources, and approval workflows will not survive a compliance examination.

The Federal Reserve's revised Model Risk Management guidance (SR 26-2) requires comprehensive model inventory, validation (conceptual soundness and outcomes analysis), and ongoing monitoring. Notably, GenAI and agentic AI models are currently excluded as "novel and rapidly evolving" — a gap firms should not treat as a pass.
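At its core, a model inventory is a structured register that can be checked automatically. In this sketch, the `ModelRecord` fields and the annual revalidation interval are hypothetical policy choices. The example inventory deliberately includes a GenAI summarizer. Even where formal guidance carves such models out, recording and validating them closes the gap the guidance leaves open.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

REVALIDATION_INTERVAL = timedelta(days=365)  # hypothetical policy: annual revalidation


@dataclass
class ModelRecord:
    model_id: str
    purpose: str
    owner: str
    version: str
    last_validated: Optional[date]  # None means never validated
    in_production: bool


def inventory_exceptions(inventory: list, today: date) -> list:
    """Return production models that lack a current validation."""
    issues = []
    for m in inventory:
        if not m.in_production:
            continue
        if m.last_validated is None:
            issues.append((m.model_id, "never validated"))
        elif today - m.last_validated > REVALIDATION_INTERVAL:
            due = m.last_validated + REVALIDATION_INTERVAL
            issues.append((m.model_id, f"validation overdue since {due}"))
    return issues


inventory = [
    ModelRecord("kyc-risk", "KYC risk scoring", "compliance-analytics", "2.1", date(2024, 3, 1), True),
    ModelRecord("alert-summarizer", "AML alert summaries (GenAI)", "fincrime-ops", "0.9", None, True),
]
for model_id, issue in inventory_exceptions(inventory, today=date(2025, 6, 30)):
    print(model_id, issue)
```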

FINRA Regulatory Notice 24-09 makes the principle explicit: all existing FINRA rules apply to GenAI just as to any other technology. Firms must evaluate GenAI tools prior to deployment, ensure supervisory systems address technology governance and model risk management, and treat AI-generated communications the same as human-generated content under Rule 2210.

AI governance covers how systems think. The second layer governs what they're permitted to do.

Execution Governance: What Systems Are Allowed to Do

Execution governance is the runtime control layer. At the moment an AI system moves from analysis to action — executing a trade, initiating a payment, sending a communication, filing a report — governance must verify:

- Who authorized the action (human authority, role, scope)

- The limits of that authority (dollar thresholds, transaction types, client segments)

- Whether the action remained within those limits (policy enforcement at runtime)

This is critical in financial infrastructure, where many actions are irreversible. A wire transfer generally cannot be recalled once the funds are released. An executed trade cannot be undone; it can only be offset by a new trade.
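The three runtime checks above map directly onto a pre-execution gate. This is a minimal sketch with hypothetical `Authority` and `ProposedAction` types, and the action names, dollar limit, and client segments are illustrative. The gate runs before the action rather than after, because a later review cannot correct an irreversible action. It returns every violation together with who granted the authority, so the result can go straight into the audit trail.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Authority:
    principal: str            # the named human who granted the authority
    role: str
    max_amount: float         # dollar threshold
    action_types: frozenset   # transaction types delegated
    client_segments: frozenset


@dataclass(frozen=True)
class ProposedAction:
    action_type: str
    amount: float
    client_segment: str


def check_authority(authority: Authority, action: ProposedAction) -> dict:
    """Runtime gate evaluated immediately before an irreversible action executes."""
    violations = []
    if action.action_type not in authority.action_types:
        violations.append(f"action type '{action.action_type}' not delegated")
    if action.amount > authority.max_amount:
        violations.append(f"amount {action.amount:,.2f} exceeds limit {authority.max_amount:,.2f}")
    if action.client_segment not in authority.client_segments:
        violations.append(f"segment '{action.client_segment}' out of scope")
    return {
        "checked_at": datetime.now(timezone.utc).isoformat(),
        "authorized_by": authority.principal,
        "role": authority.role,
        "allowed": not violations,
        "violations": violations,
    }


ops_lead = Authority("j.doe", "operations_supervisor", 25_000.0,
                     frozenset({"ach_payment", "client_email"}), frozenset({"retail"}))
print(check_authority(ops_lead, ProposedAction("wire_transfer", 50_000.0, "hnw")))
```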

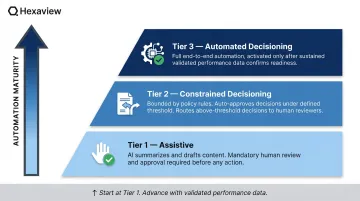

Tiered Automation Model:

A tiered model puts execution governance into practice:

- Tier 1 (Assistive) - AI summarizes, drafts, and extracts; a human must approve before any action is taken

- Tier 2 (Constrained Decisioning) - AI makes decisions only within explicit policy rules and bounded actions (e.g., auto-approve transactions under $10,000; route higher amounts to human review)

- Tier 3 (Automated Decisioning) - Full automation, deployed only after sustained performance validation and monitoring maturity

Firms should start at Tier 1 and advance workflows only when documented performance data justifies the next tier.
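The tier rules can be encoded so that routing is explicit and testable. In the sketch below, the $10,000 Tier 2 limit comes from the example above. The promotion gate and its default values are hypothetical: it requires a minimum number of human-reviewed cases and a maximum rate of human overrides. Each firm would set its own evidence standard.

```python
from enum import Enum


class Tier(Enum):
    ASSISTIVE = 1
    CONSTRAINED = 2
    AUTOMATED = 3


AUTO_APPROVE_LIMIT = 10_000  # the Tier 2 example threshold


def route(tier: Tier, amount: float) -> str:
    if tier is Tier.ASSISTIVE:
        return "human_approval"  # every action needs a human
    if tier is Tier.CONSTRAINED:
        return "auto_approve" if amount < AUTO_APPROVE_LIMIT else "human_review"
    return "auto_execute"  # Tier 3, only after sustained validation


def eligible_for_promotion(reviewed_cases: int, human_overrides: int,
                           min_cases: int = 500, max_override_rate: float = 0.02) -> bool:
    """Hypothetical promotion gate: enough reviewed history and few human overrides."""
    if reviewed_cases < min_cases:
        return False
    return human_overrides / reviewed_cases <= max_override_rate


print(route(Tier.CONSTRAINED, 12_500))
print(eligible_for_promotion(reviewed_cases=1_000, human_overrides=15))
```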

Operational Controls:

Every governed AI workflow must include:

- Human-in-the-loop thresholds - route to review when confidence falls below a defined level (e.g., 85%)

- Segregation of duties - AI proposes; authorized human approves

- Policy traceability - outputs must reference approved sources or rules

- Full audit trail logging - every input artifact, extracted field, retrieved policy source, decision outcome, and model version
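The four controls can be combined in one small sketch. The 85% threshold comes from the example above. The `Proposal` fields, the `ai-agent` identity, and the status names are hypothetical. Routing rejects outputs with no policy reference, sends low-confidence outputs to review, and holds everything else for an authorized human. The `approve` function refuses to let the proposer approve its own work and emits a complete audit record that includes the model version.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone

CONFIDENCE_THRESHOLD = 0.85  # the example level from the text
AI_AGENT_ID = "ai-agent"     # hypothetical identity under which the model proposes


@dataclass(frozen=True)
class Proposal:
    case_id: str
    input_artifacts: tuple   # e.g. document IDs the model read
    extracted_fields: dict
    policy_refs: tuple       # approved rules or sources the output cites
    decision: str
    confidence: float
    model_version: str
    proposed_by: str = AI_AGENT_ID


def route_proposal(p: Proposal) -> str:
    if not p.policy_refs:
        return "rejected_untraceable"  # policy traceability
    if p.confidence < CONFIDENCE_THRESHOLD:
        return "human_review"          # human-in-the-loop threshold
    return "awaiting_approval"         # still needs an authorized human


def approve(p: Proposal, approver_id: str) -> dict:
    if route_proposal(p) == "rejected_untraceable":
        raise ValueError("output cites no approved policy source")
    # Segregation of duties: the proposer can never approve its own output.
    if approver_id == p.proposed_by:
        raise PermissionError("proposer cannot approve its own proposal")
    record = asdict(p)
    record.update(approved_by=approver_id,
                  approved_at=datetime.now(timezone.utc).isoformat())
    return record  # full audit trail entry, ready for append-only storage


p = Proposal("K-1", ("doc-1",), {"name": "A. Client"}, ("KYC-POL-4.2",),
             "approve_onboarding", 0.91, "kyc-extractor-1.3")
print(route_proposal(p))
print(approve(p, "analyst-3"))
```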

Hexaview's Agentforce-powered automation embeds both layers — AI governance and execution governance — directly into each workflow, so audit trails, approval thresholds, and policy traceability are active from the first deployment, not retrofitted later.

Common Challenges When Deploying AI in Regulated Wealth and FinTech Environments

Fragmented Legacy Systems and Siloed Data

Wealth management workflows often span multiple CRM platforms, portfolio management systems, custodians, and compliance databases that don't integrate cleanly. AI cannot produce reliable outputs when the data it needs is inconsistent across systems.

Firms must establish "golden sources" for client, account, and product data before automation can be trusted. More than 50% of asset and wealth management firms cite limited access to quality data as a primary hurdle, and approximately 50% lack basic processes to clean, normalize, and tag internal data.
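Establishing a golden source comes down to deciding, field by field, which system is authoritative. Disagreements between systems should be surfaced rather than silently resolved. This minimal sketch assumes hypothetical system names and a hypothetical field-ownership map.

```python
# Hypothetical field ownership: which system is the golden source for each client attribute.
GOLDEN_SOURCE = {"legal_name": "custodian", "date_of_birth": "custodian", "email": "crm"}


def reconcile(records: dict) -> tuple:
    """records maps a system name to that system's fields for one client."""
    golden, conflicts = {}, []
    for field_name, owner in GOLDEN_SOURCE.items():
        golden[field_name] = records.get(owner, {}).get(field_name)
        seen = {system: rec[field_name] for system, rec in records.items()
                if rec.get(field_name) is not None}
        if len(set(seen.values())) > 1:
            # Disagreement is surfaced for data stewards instead of silently resolved.
            conflicts.append({"field": field_name, "values": seen, "golden_source": owner})
    return golden, conflicts


records = {
    "crm": {"legal_name": "Jon Smith", "email": "jon@example.com"},
    "custodian": {"legal_name": "Jonathan Smith", "date_of_birth": "1975-04-02"},
    "portfolio_system": {"legal_name": "Jonathan Smith"},
}
golden, conflicts = reconcile(records)
print(golden)
print(conflicts)
```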

Explainability Gaps

Regulators examining AI-assisted decisions need a clear answer to "why." The SEC's FY2025 Examination Priorities explicitly state that if advisers integrate AI into advisory operations (including portfolio management, trading, marketing, and compliance), the Division will examine whether firms have adequate policies to monitor AI tasks and whether representations regarding AI capabilities are fair and accurate.

The confidence gap is striking:

- Only 6% of anti-fraud professionals feel "completely confident" explaining how their AI/ML models make decisions

- Only 18% of organizations test AI models for bias or fairness

Firms that retrofit explainability after a regulatory inquiry face far greater remediation costs than firms that build it in from day one.