The fund administration market is doubling—projected to expand from $9.8B in 2025 to $19.6B by 2034—but the operational model underpinning it cannot scale proportionally. Reconciliation alone accounts for 30%-40% of total back-office labor costs. PwC predicts 16% of asset managers will disappear by 2027 not because of market contraction, but because their manual operational infrastructure cannot keep pace.

Multi-agent AI represents the architectural shift that addresses this crisis. Unlike single-model AI that responds to prompts or rule-based automation that halts on exceptions, multi-agent systems deploy specialized AI agents that perceive context, delegate subtasks, adapt mid-workflow, and maintain complete audit trails. NemoClaw by Hexaview brings this capability specifically to capital markets, wealth management, and fund administration—automating reconciliation, investor reporting, and compliance workflows while preserving the governance and auditability that regulated financial environments require.

TL;DR

- Multi-agent AI deploys specialized agents that collaborate: each holds distinct tools, a defined role, and its own reasoning capability

- Fund admins benefit most because their processes are high-volume and multi-step: reconciliation alone consumes 30%-40% of back-office labor costs, and 40% of private funds contain material errors requiring restatement

- Unlike single LLMs, multi-agent systems plan, delegate, and adapt autonomously while logging every decision for regulatory audit trails

- NemoClaw is Hexaview's NVIDIA-powered platform purpose-built for capital markets and fund services with over 10 years of domain expertise

- Key gains: 95% reduction in NAV preparation time, 97% improvement in data accuracy, and 20,000+ man hours saved in analysis

What Is a Multi-Agent AI System (And How Is It Different from a Single AI Model)?

A multi-agent AI system is a network of autonomous AI agents, each holding a specific role, tool access, and reasoning capability, working toward a shared goal rather than one model handling everything sequentially.

A single LLM responds to prompts and generates text. An agent built on that LLM goes further:

- Perception — reads live context from databases, APIs, and documents

- Planning — breaks complex tasks into discrete, executable steps

- Tool usage — runs API calls, database queries, and calculations

- Memory — tracks decisions across workflow stages

A multi-agent system coordinates multiple specialized agents like these to tackle workflows no single model could manage alone.
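As a rough illustration of those four capabilities, the sketch below wires perception, planning, tool usage, and memory into one toy class. The `Agent` class, the two tool functions, and the sample holdings are all hypothetical. A production agent would ask an LLM to produce the plan instead of running every registered tool in order.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Agent:
    """Toy agent: a role, a set of named tools, and a memory of past steps."""
    role: str
    tools: Dict[str, Callable[[dict], dict]]
    memory: List[Tuple[str, dict]] = field(default_factory=list)

    def plan(self, goal: str) -> List[str]:
        # Planning (assumption): run every registered tool in registration order.
        return list(self.tools)

    def run(self, goal: str, context: dict) -> dict:
        state = dict(context)                    # Perception: start from supplied context
        for step in self.plan(goal):
            result = self.tools[step](state)     # Tool usage
            state.update(result)
            self.memory.append((step, result))   # Memory: keep a trace of each step
        return state


def load_positions(state: dict) -> dict:
    # Hypothetical sample holdings standing in for a custodian query.
    return {"positions": {"AAPL": 100, "MSFT": 50}}


def count_positions(state: dict) -> dict:
    return {"position_count": len(state["positions"])}


agent = Agent("reconciliation", {"load_positions": load_positions,
                                 "count_positions": count_positions})
final = agent.run("summarize holdings", {"fund": "Fund A"})
```

A multi-agent system would hold several such objects, each with its own role and tool set, and pass `state` between them.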

Fund operations make this concrete. Compare one analyst working solo with a specialized team in which a data analyst pulls custodian feeds, a compliance officer validates FATCA requirements, and a portfolio reviewer cross-checks valuations, all in parallel, each handing completed work to the next stage.

Agents vs. Agentic AI

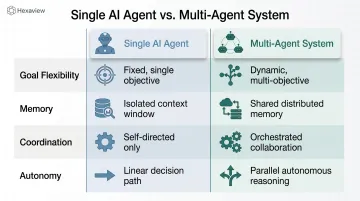

Single agents and multi-agent systems differ in more than scale. The table below captures the architectural distinctions that matter for fund administration workflows.

A single AI agent is a focused, autonomous module designed to execute specific tasks using external tools and sequential reasoning. A multi-agent system coordinates several such agents dynamically, distributing work across specialized roles with shared memory and structured handoffs.

| Dimension | Single AI Agent | Multi-Agent System |
|---|---|---|
| Goal Flexibility | Executes specific tasks | Decomposes and adapts goals dynamically |
| Memory | Optional cache | Shared episodic/task memory across agents |
| Coordination | Isolated execution | Hierarchical or decentralized coordination |
| Autonomy | High within tasks | Higher; manages multi-step complex workflows |

This architectural distinction matters in fund administration because NAV calculation, reconciliation, and regulatory reporting require coordination across data sources, validation rules, and approval checkpoints — work that a single sequential prompt cannot reliably handle.

Why FinTech and Fund Administrators Are Uniquely Positioned to Benefit

Structural Complexity Demands Orchestration

Fund administration is architecturally complex: multiple asset classes (equities, fixed income, derivatives, alternatives), multiple custodians (Fidelity, Schwab, LPL), prime brokers, and regulatory regimes (SEC, FINRA, AIFMD) all requiring synchronized data processing.

Single-point automation fails here because exceptions are the norm—pricing discrepancies, late trade confirmations, missing cost basis data. Multi-agent orchestration handles this by deploying specialist agents: one reconciles custodian positions, another validates pricing against market data feeds, a third flags discrepancies for human review, and a coordinator synthesizes results.

The Volume-Accuracy Tradeoff

As AUM scales, the margin for error shrinks. Manual quarterly NAV calculation for a mid-sized fund consumes 40-80 staff hours across data gathering, calculation, review, and documentation. The output is also error-prone: 40% of private funds contain material errors requiring restatement, according to Polibit's 2025 analysis, and each error discovered during audit triggers 20-40 additional hours of investigative work. AI-driven workflows reduce the calculation itself to under five minutes, making same-day NAV reporting operationally viable.

Multi-agent systems address this by running parallel validation: while one agent calculates NAV, another cross-validates against custodian records, a third checks fee accruals, and a fourth compares results to prior periods—all before human review.
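The parallel validation described above can be sketched with Python's standard `concurrent.futures` module. This is a minimal illustration, not NemoClaw's implementation: the three check functions, the field names in `fund`, and the 10% period-over-period tolerance are all assumptions chosen for the example.

```python
from concurrent.futures import ThreadPoolExecutor
from decimal import Decimal

# Hypothetical fund snapshot; every field name here is an assumption.
fund = {
    "nav": Decimal("1050.00"),
    "custodian_value": Decimal("1050.00"),
    "accrued_fees": Decimal("5.25"),
    "expected_fees": Decimal("5.25"),
    "prior_nav": Decimal("1000.00"),
}


def check_custodian(f):
    return [] if f["nav"] == f["custodian_value"] else ["custodian mismatch"]


def check_fees(f):
    return [] if f["accrued_fees"] == f["expected_fees"] else ["fee accrual mismatch"]


def check_prior_period(f, tolerance=Decimal("0.10")):
    # Flag NAV moves larger than the (assumed) tolerance versus the prior period.
    change = abs(f["nav"] - f["prior_nav"]) / f["prior_nav"]
    return [] if change <= tolerance else ["NAV change exceeds tolerance"]


CHECKS = {
    "custodian": check_custodian,
    "fees": check_fees,
    "prior_period": check_prior_period,
}


def run_checks(f, checks):
    """Run every check concurrently and return {check name: list of issues}."""
    # Threads suit checks that mostly wait on I/O, such as API or database calls.
    with ThreadPoolExecutor(max_workers=len(checks)) as pool:
        futures = {name: pool.submit(fn, f) for name, fn in checks.items()}
        return {name: fut.result() for name, fut in futures.items()}


results = run_checks(fund, CHECKS)
needs_review = any(results.values())  # True if any check reported an issue
```

Only funds where `needs_review` is true would then be routed to a human reviewer.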

FinTech Faces Parallel Challenges

FinTech firms building payment rails, lending platforms, or investment infrastructure face concurrent decision requirements that rigid rule-based systems cannot handle dynamically:

- Fraud detection: One agent monitors transaction streams, a second analyzes behavioral anomalies, a third cross-references AML watchlists, and a decision agent synthesizes findings

- Credit underwriting: Financial analysis, income verification, fraud screening, and policy compliance agents run concurrently before outputting a recommendation

- Real-time payments: Transaction validation, account balance checks, fraud scoring, and regulatory compliance checks must complete in milliseconds

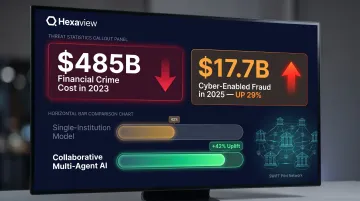

Financial crime cost the industry $485B in 2023. The FBI reported cyber-enabled fraud reached $17.7B in 2025—a 29% increase from 2024.

SWIFT's pilot with 13 international banks demonstrated that collaborative multi-agent AI **doubled fraud detection rates** compared to single-institution models.

Regulatory Scrutiny Requires Explainability

Fund admins operate under SEC, FINRA, and AIFMD oversight. Every automated decision must be explainable and auditable. Multi-agent systems with built-in reasoning logs meet this requirement architecturally—each agent logs its inputs, tool calls, and decision rationale in structured formats suitable for regulatory review.

Key reporting obligations leave little room for process failures:

- Form PF (large hedge fund advisers): Quarterly filing due within 60 days of each fiscal quarter end

- Form PF (liquidity fund advisers): Quarterly filing due within 15 days of each fiscal quarter end

- FATCA non-compliance: $10,000 failure-to-file penalties, rising to $50,000 for continued violations

Multi-agent architectures address this directly—processing at speed while preserving the full audit trail regulators require.

Legacy Workflows Halt on Exceptions; Agents Adapt

When a custodian feed is delayed, a multi-agent reconciliation system detects the gap, queries an alternate data source, flags the substitution in the audit log, and continues processing—rather than blocking the entire NAV cycle.

Legacy systems stop cold when pricing data is missing or a trade confirmation arrives late. Multi-agent systems evaluate context, reroute workflows, and escalate or resolve exceptions autonomously, logging every step for audit.
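One way to picture this exception handling is a fetch routine that tries data sources in priority order and records every attempt. The sketch below is illustrative only: `FeedUnavailable`, the source names, and the two stand-in fetch functions are hypothetical, and a real system would call custodian APIs instead.

```python
from datetime import datetime, timezone


class FeedUnavailable(Exception):
    """Raised by a fetcher when its feed is late or unreachable."""


def fetch_with_fallback(sources, audit_log):
    """Try each (name, fetch) pair in order and log every attempt."""
    for position, (name, fetch) in enumerate(sources):
        stamp = datetime.now(timezone.utc).isoformat()
        try:
            data = fetch()
        except FeedUnavailable as exc:
            audit_log.append({"at": stamp, "source": name,
                              "status": "unavailable", "detail": str(exc)})
            continue
        # "substituted" marks that a non-primary source supplied the data.
        audit_log.append({"at": stamp, "source": name,
                          "status": "used", "substituted": position > 0})
        return data
    raise FeedUnavailable("all sources failed; escalate to human review")


def primary_feed():
    raise FeedUnavailable("custodian file not delivered")


def backup_feed():
    return {"AAPL": 100}  # hypothetical sample position


log = []
positions = fetch_with_fallback(
    [("custodian_primary", primary_feed), ("custodian_backup", backup_feed)], log
)
```

The NAV cycle continues with the backup data, and the log shows both the failed attempt and the substitution.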

Key Use Cases: Where NemoClaw Multi-Agent AI Delivers Results for Fund Admins and FinTech

NAV Calculation and Reconciliation

Dedicated agents simultaneously:

- Pull pricing data from Bloomberg, Refinitiv, and custodian APIs

- Cross-validate positions against custodian records

- Calculate fees and expense accruals

- Flag discrepancies (missing securities, stale prices, position breaks)

- Generate reconciled NAV with complete audit trail
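The cross-validation and discrepancy-flagging steps reduce, at their simplest, to comparing two position maps. The following is a minimal sketch with hypothetical tickers and quantities; `find_position_breaks` is an illustrative helper, not part of any named platform.

```python
def find_position_breaks(ledger, custodian):
    """Compare internal ledger quantities with custodian quantities.

    Returns a list of (security, description) tuples, one per break.
    """
    breaks = []
    for security in sorted(set(ledger) | set(custodian)):
        ours = ledger.get(security)
        theirs = custodian.get(security)
        if ours is None:
            breaks.append((security, "missing in ledger"))
        elif theirs is None:
            breaks.append((security, "missing at custodian"))
        elif ours != theirs:
            breaks.append(
                (security, f"quantity break: ledger {ours} vs custodian {theirs}")
            )
    return breaks


# Hypothetical sample data for illustration.
ledger = {"AAPL": 100, "MSFT": 50, "TSLA": 10}
custodian = {"AAPL": 100, "MSFT": 45, "NVDA": 5}
breaks = find_position_breaks(ledger, custodian)
```

Matching positions produce no entry, so an empty list means the two records reconcile.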

Multi-agent workflows reduce NAV preparation time by 95%—delivering calculations in under five minutes. Hexaview's data science engagements across financial services have achieved a 97% improvement in data accuracy and saved 20,000+ man hours in analysis.

Investor Reporting and Capital Activity Processing

Multi-agent systems handle:

- Subscription/redemption validation (KYC checks, accredited investor status, fund capacity limits)

- Capital call and distribution notices (calculating pro-rata amounts, formatting communications, validating bank details)

- Investor statement generation (pulling performance data, calculating returns, applying fee schedules, formatting PDFs)
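The pro-rata calculation behind a capital call notice can be sketched as follows. Each investor's share is the call amount multiplied by their commitment over total commitments. Assigning the rounding residue to the largest commitment is an assumption made here so the notices sum exactly; the fund's governing documents would dictate the real rule. Investor names and amounts are hypothetical.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


def pro_rata_call(call_amount, commitments):
    """Split call_amount across investors in proportion to their commitments."""
    total = sum(commitments.values(), Decimal("0"))
    amounts = {
        investor: (call_amount * commitment / total).quantize(
            CENT, rounding=ROUND_HALF_UP
        )
        for investor, commitment in commitments.items()
    }
    # Assumption: any rounding residue goes to the largest commitment.
    residue = call_amount - sum(amounts.values(), Decimal("0"))
    largest = max(commitments, key=commitments.get)
    amounts[largest] += residue
    return amounts


commitments = {
    "A": Decimal("5000000"),
    "B": Decimal("3000000"),
    "C": Decimal("1000000"),
}
amounts = pro_rata_call(Decimal("1000000"), commitments)
```

Using `Decimal` instead of floats keeps the cent-level arithmetic exact, which matters when amounts are checked against bank instructions.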

Each agent specializes—one validates data completeness, another formats output, a third runs compliance checks—before a final review gate approves output for delivery.

Real-world outcome: The Cynosure Group achieved a 50% reduction in capital call processing time and 30% faster delivery of capital account statements using automated fund administration platforms, according to a Dynamo Software case study.

Fraud Detection and Transaction Monitoring

Three specialized agents run in parallel across the transaction stream, and a fourth synthesizes their findings:

- Stream monitor tracks transaction flows in real-time, flagging volume and velocity shifts as they occur.

- Behavioral analysis detects anomalies—geographic inconsistencies, amount outliers, unusual timing patterns—against established baselines.

- Watchlist screening cross-references AML databases, OFAC lists, and international sanctions in milliseconds.

- Decision synthesis weighs the combined evidence, scores risk, and either auto-approves or escalates for human review.
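To make the decision synthesis step concrete, here is a small sketch that blends per-agent risk signals into one score. The weights, the 0.5 escalation threshold, and the rule that a confirmed watchlist hit always escalates are assumptions for illustration; real deployments would calibrate these against historical outcomes.

```python
# Assumed weights for the three upstream agents (must be tuned in practice).
WEIGHTS = {"stream": 0.2, "behavior": 0.3, "watchlist": 0.5}


def synthesize(signals, weights=WEIGHTS, escalate_at=0.5):
    """Combine signals in [0, 1] into (score, decision)."""
    # A confirmed watchlist hit always goes to a human, whatever the blend says.
    if signals["watchlist"] >= 1.0:
        return 1.0, "escalate"
    score = sum(weights[name] * signals[name] for name in weights)
    decision = "escalate" if score >= escalate_at else "auto_approve"
    return round(score, 3), decision


low_risk = synthesize({"stream": 0.2, "behavior": 0.9, "watchlist": 0.0})
high_risk = synthesize({"stream": 0.8, "behavior": 0.9, "watchlist": 0.4})
sanctioned = synthesize({"stream": 0.0, "behavior": 0.0, "watchlist": 1.0})
```

Returning the score alongside the decision lets the audit trail show why a transaction was approved or escalated.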

SWIFT's pilot involving ten million test transactions demonstrated collaborative AI stopped fraud in minutes rather than hours or days—doubling detection rates versus single-institution models. Privacy-enhancing technologies enabled secure intelligence sharing across borders without exposing raw data.

Regulatory Reporting and Compliance Filing

Agents configured for Form PF, FATCA, CRS:

- Extraction agent: Pulls relevant data from ledgers, custodian feeds, investor records

- Mapping agent: Maps data fields to reporting templates (Section I fund structure, Section II portfolio composition, Section III counterparty exposure)

- Validation agent: Checks completeness, flags missing data, validates calculations

- Exception agent: Surfaces discrepancies for human review before filing

Compliance context: Form PF requires SEC-registered advisers with at least $150M in private fund assets under management to report across multiple sections; large hedge fund advisers must file within 60 days of each fiscal quarter end. On the lending side, CFPB guidance states that creditors must give specific reasons for a denial, with no special exemption for AI. Per-agent decision logs support both obligations by recording the inputs and rationale behind each output.

Credit Risk Assessment and Loan Underwriting (FinTech)

For FinTech lenders and digital lending platforms, the same multi-agent architecture applies to credit decisioning. Four specialist agents run in parallel, and a fifth synthesizes their findings into a recommendation:

- Financial analysis agent: Calculates debt-to-income ratios, cash flow coverage, asset verification

- Income verification agent: Cross-references pay stubs, tax returns, bank statements, employment databases

- Fraud screening agent: Detects synthetic identity fraud (exposure reached $3.3B per TransUnion) and first-party fraud (36% of all reported fraud per LexisNexis)

- Policy compliance agent: Validates against lending policy rules, fair lending requirements

- Decision synthesis agent: Outputs recommendation with full reasoning trail

Parallel-agent underwriting achieves 50%–70% reductions in manual underwriting time and costs, according to Oscilar's analysis.

The Architecture That Makes Multi-Agent AI Work in Regulated Finance

Sequential vs. Hierarchical vs. Swarm Patterns

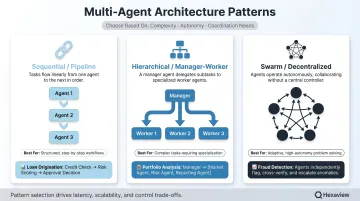

| Architecture | Structure | Best For | Financial Use Case |
|---|---|---|---|
| Sequential/Pipeline | Linear chain; output of one agent feeds the next | Workflows with strict step dependencies | NAV calculation pipeline: data ingestion → valuation → fee accrual → reconciliation → reporting |
| Hierarchical/Manager-Worker | Orchestrator delegates to specialist sub-agents | Complex processes requiring coordination and oversight | Regulatory reporting: compliance orchestrator delegates data extraction, validation, filing to specialists |
| Swarm/Decentralized | Agents collaborate as peers via shared memory without central manager | Research-heavy, multi-source synthesis tasks | Financial analysis combining earnings reports, market data, news sentiment, credit ratings |

Choosing the wrong architecture is one of the most common implementation mistakes. Here's when each pattern earns its place:

- Sequential works best for predictable, auditable processes where each step must complete before the next begins — trade confirmations, document processing, and similar linear workflows

- Hierarchical handles complexity better: use it when tasks require domain specialization and branching logic, such as loan underwriting or NAV processes with exception handling

- Swarm fits financial research and portfolio analysis, where the value comes from synthesizing insights across diverse, unstructured data sources — not executing a predefined workflow
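The difference between the first two patterns is easy to see in code. The sketch below uses plain functions as stand-ins for agents; the stage names, sample positions, and fee rate are hypothetical, and frameworks such as CrewAI or LangGraph provide their own abstractions for the same ideas.

```python
def run_pipeline(stages, payload):
    """Sequential pattern: each stage consumes the previous stage's output."""
    for stage in stages:
        payload = stage(payload)
    return payload


def run_orchestrator(specialists, task):
    """Hierarchical pattern: a manager hands one task to each named
    specialist and gathers the results for review."""
    return {name: specialist(task) for name, specialist in specialists.items()}


def ingest(p):
    # Hypothetical positions as (quantity, price) pairs.
    return {**p, "positions": [(10, 100), (5, 20)]}


def value(p):
    return {**p, "gross": sum(qty * price for qty, price in p["positions"])}


def accrue_fees(p):
    fee = p["gross"] * p["fee_bps"] // 10_000  # basis points to amount
    return {**p, "fee": fee, "net": p["gross"] - fee}


nav = run_pipeline([ingest, value, accrue_fees], {"fund": "Fund A", "fee_bps": 100})

report = run_orchestrator(
    {"extract": lambda t: f"extracted {t}", "validate": lambda t: f"validated {t}"},
    "Form PF",
)
```

In the pipeline, a failure in `value` stops `accrue_fees` from running, which is the strict dependency the sequential pattern is meant to enforce. The orchestrator, by contrast, can inspect each specialist's result independently.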

Anti-pattern to avoid: Overloading a single agent with too many tools degrades both performance and auditability. Cap each agent at 5-7 tools maximum and delegate specialized functions to dedicated agents.

Key Infrastructure Components

Selecting the right architecture is only half the equation. The infrastructure underneath determines whether that architecture actually holds up under audit, scale, and real-time data pressure. Production-grade multi-agent systems in regulated finance require five core components:

LLM Selection

The model powering your agents shapes every downstream decision:

- GPT-4/Claude handle complex reasoning well; Claude is the stronger choice for regulated industries given its instruction-following fidelity and lower hallucination rates

- Llama 3 supports full on-premise deployment for air-gapped environments where complete data sovereignty is non-negotiable

- Agent frameworks like CrewAI and LangGraph allow model swapping without redesigning the entire system — useful as LLM capabilities evolve

Tool Integrations

Agents are only as useful as the systems they can reach:

- Database connectors (SQL, NoSQL, vector databases for memory)

- Custodian APIs (Fidelity, Schwab, LPL)

- Market data feeds (Bloomberg, Refinitiv, ICE)

- Document processors (PDF extraction, OCR)

- Calculation engines (NAV, performance attribution, risk metrics)

Shared Memory and Context

In regulated finance, agents can't operate with amnesia between workflow steps:

- Vector databases enable semantic search across historical decisions

- Episodic memory stores track workflow state across agents

- Context windows up to 200K tokens (Claude) or 1M tokens (Gemini) handle document-heavy workflows without truncation

Monitoring and Observability

Regulators require proof of what happened, not just what the output was:

- Structured logging of every agent action, tool call, and decision

- Real-time dashboards tracking workflow progress and exception rates

- Audit trail generation ready for regulatory review

Orchestration Frameworks

- CrewAI: Leading open-source framework with over 100,000 certified developers; combines collaborative intelligence with precise control

- LangGraph: Stateful graph-based orchestration for complex decision trees

- AWS Strands Agents SDK: Model-driven approach to building agents in minimal code; AWS published a specific financial analysis use case combining LangGraph and Strands

Security, Compliance, and Auditability in Multi-Agent Financial Systems

Critical Security Risks

Multi-agent financial deployments face specific threats:

Memory Poisoning: Malicious data injected into agent memory stores (vector databases, episodic memory) affecting downstream decisions. Example: fraudulent pricing data inserted into a reconciliation agent's knowledge base.

Tool Misuse: Agents tricked into calling payment APIs, data deletion commands, or unauthorized database queries through prompt injection or goal manipulation.

Cascading Errors: One agent's hallucination propagates through a chain. If a valuation agent miscalculates NAV and downstream reporting agents consume that figure without validation, investor statements reflect incorrect values.

OWASP-Aligned MAESTRO Framework

The MAESTRO (Multi-Agent Environment, Security, Threat, Risk, and Outcome) framework, published February 6, 2025 by the Cloud Security Alliance, provides a seven-layer threat model for agentic AI:

| Layer | Key Threats |
|---|---|
| 7 - Agent Ecosystem | Agent Tool Misuse, Agent Impersonation, Goal Manipulation, Marketplace Manipulation |
| 6 - Security & Compliance | Security Agent Data Poisoning, Evasion of Security AI Agents, Regulatory Non-Compliance |
| 5 - Evaluation & Observability | Manipulation of Evaluation Metrics, Poisoning Observability Data |
| 4 - Deployment & Infrastructure | Orchestration Attacks, Resource Hijacking, Lateral Movement |
| 3 - Agent Frameworks | Compromised Framework Components, Supply Chain Attacks |
| 2 - Data Operations | Data Poisoning, Data Exfiltration, Compromised RAG Pipelines |
| 1 - Foundation Models | Adversarial Examples, Model Stealing, Backdoor Attacks |

Cross-layer threats include Goal Misalignment Cascades, where goal misalignment in one agent propagates to others, triggering compounding errors across the entire pipeline.

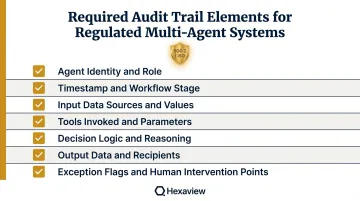

Auditability Requirements for Fund Administration

Every agent action, tool call, and decision rationale must be logged in structured, retrievable formats to satisfy regulatory review. Hexaview's SOC 2 Type 2 and ISO Information Security Management certifications reflect exactly this standard — built for environments where audit failures carry regulatory consequences.

Required audit trail elements:

- Agent identity and role

- Timestamp and workflow stage

- Input data sources and values

- Tools invoked and parameters passed

- Decision logic and reasoning

- Output data and downstream recipients

- Exception flags and human intervention points
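These elements map naturally onto a structured record. The sketch below shows one possible shape using a frozen dataclass serialized to JSON; the field names and sample values are assumptions, not a regulatory schema or Hexaview's format.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Any, Dict, List, Optional


@dataclass(frozen=True)  # frozen: a record cannot be altered after creation
class AuditRecord:
    agent_id: str
    agent_role: str
    workflow_stage: str
    inputs: Dict[str, Any]
    tool_calls: List[Dict[str, Any]]
    reasoning: str
    outputs: Dict[str, Any]
    recipients: List[str]
    exception_flags: List[str] = field(default_factory=list)
    human_reviewer: Optional[str] = None
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)


# Hypothetical example record.
record = AuditRecord(
    agent_id="recon-01",
    agent_role="reconciliation",
    workflow_stage="position_check",
    inputs={"custodian_file": "positions.csv"},
    tool_calls=[{"tool": "read_positions", "params": {"fund": "Fund A"}}],
    reasoning="Ledger and custodian quantities match for all securities.",
    outputs={"breaks": 0},
    recipients=["nav_calculation"],
)
line = record.to_json()
```

Writing one JSON line per agent action keeps the trail append-only and easy to query during a regulatory review.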

96% of financial institutions are now adopting AI governance controls to manage these risks. Regulators are moving in parallel: the FCA's review into the long-term impact of AI on retail financial services examines how AI may reshape finance by 2030, signaling that audit readiness will only become more scrutinized.

Best Practices for Safe Deployment

Safe deployment in financial environments requires controls at every layer:

- Role-based access controls — reconciliation agents cannot execute payments; reporting agents cannot modify source data

- Human-in-the-loop checkpoints — large redemptions, suspicious transaction blocks, and regulatory filings require human sign-off before execution

- Encrypted inter-agent communications — messages encrypted in transit, shared memory stores encrypted at rest

- Quarterly red-team testing — simulate prompt injection, tool misuse, and memory poisoning attacks to validate that guardrails hold
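The first two controls can be combined into a single authorization gate that every tool call passes through. This is a minimal sketch under stated assumptions: the role names, tool names, and the set of actions that need sign-off are hypothetical examples.

```python
# Assumed role-to-tool mapping; real deployments would load this from policy.
ROLE_TOOLS = {
    "reconciliation": {"read_positions", "flag_break"},
    "reporting": {"read_ledger", "render_statement"},
    "filing": {"read_ledger", "submit_filing"},
}

# Assumed set of high-stakes actions that always need a named human approver.
SIGNOFF_REQUIRED = {"submit_filing", "process_redemption"}


class PermissionDenied(Exception):
    pass


class SignoffRequired(Exception):
    pass


def authorize(role, tool, approved_by=None):
    """Return True if the role may call the tool, otherwise raise."""
    if tool not in ROLE_TOOLS.get(role, set()):
        raise PermissionDenied(f"{role} may not call {tool}")
    if tool in SIGNOFF_REQUIRED and approved_by is None:
        raise SignoffRequired(f"{tool} needs human sign-off")
    return True
```

Calling `authorize` before dispatching any tool means a reconciliation agent that is tricked into requesting a payment fails at the gate, and a filing agent cannot submit without a reviewer's name attached.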

How to Evaluate If Your Organization Is Ready to Deploy Multi-Agent AI

Three Readiness Indicators

1. API-Accessible, Well-Documented Data Sources

Agents are only as effective as the data they can query. Assess:

- Are custodian feeds, general ledgers, investor databases accessible via APIs or structured file formats?

- Is data quality documented (completeness rates, error frequencies, update latencies)?

- Do you have data dictionaries mapping field names, formats, and business rules?

If data is trapped in PDFs, spreadsheets, or legacy systems without APIs, data infrastructure modernization must precede agent deployment.

2. High-Volume, Repetitive Workflows Where Errors Are Costly

Identify 2-3 workflows where:

- Human time is disproportionately spent on manual tasks (reconciliation consuming 30%-40% of back-office labor)

- Error rates are measurable and material (40% of funds requiring NAV restatements)

- Process steps are documented and repeatable (not ad-hoc decisions requiring deep expertise)

Start with internal reconciliation or reporting workflows—lower risk, measurable outcomes—before deploying into client-facing or high-stakes contexts.

3. Internal Champions Who Define Success Metrics Before Deployment

Establish baseline metrics:

- Accuracy rates: Current error rates in NAV calculations, reconciliation breaks, compliance filings

- Cycle time: Hours required for quarterly NAV close, investor reporting, regulatory filing preparation

- Cost per transaction: Fully loaded labor cost for reconciliation, capital call processing, investor servicing

Without baseline metrics, demonstrating ROI is impossible.

Start Simple, Scale Deliberately

Once you've confirmed readiness across these three indicators, a phased rollout reduces risk and builds internal confidence:

Months 1-3 (single-agent pilot): Deploy automation for one narrow workflow, such as custodian position reconciliation. Track accuracy, cycle time, and exception rates to establish proof of value.

Months 4-6 (multi-agent orchestration): Expand to an end-to-end process like a NAV calculation pipeline. Introduce hierarchical coordination with defined human checkpoints at key decision stages.

Months 7-12 (scale and integrate): Roll out across business units and connect to client-facing workflows. Relax human checkpoints on lower-risk steps where audit trails confirm consistent accuracy over time, while keeping sign-off for large redemptions, transaction blocks, and regulatory filings.

Where NemoClaw Fits Into This Framework

NemoClaw is Hexaview's NVIDIA-powered multi-agent AI platform for capital markets, wealth management, and fund services, built on over 10 years of capital markets domain expertise. Hexaview has been recognized in the WealthTech 100 and serves clients including LPL Financial and Addepar.

For fund admins and FinTech firms, NemoClaw removes the build-from-scratch burden. It provides:

- Pre-configured agent orchestration for fund operations workflows

- HexaShield compliance frameworks covering HIPAA, SOX, GDPR, KYC/AML, and SOC 2

- Domain-specific integrations for custodian APIs, market data feeds, and regulatory reporting templates

Frequently Asked Questions

What is a multi-agent AI system?

A multi-agent AI system is a network of autonomous AI agents, each with a specific role and tool access, that collaborate to complete complex tasks. Unlike a single LLM that responds to prompts alone, multi-agent systems coordinate specialist agents that perceive context, plan actions, use tools, and maintain shared memory across workflows.

How can agentic AI be used in banking?

Agentic AI covers a wide range of banking operations, including fraud detection, credit underwriting, transaction reconciliation, regulatory reporting, and customer service automation. Every decision comes with full reasoning logs—making outputs auditable and compliant by design.

What AI technologies are used in banking?

Banking deployments typically stack four technology layers:

- LLMs (GPT-4, Claude, Llama) as reasoning engines

- Orchestration frameworks (LangGraph, CrewAI, AWS Strands Agents) for agent coordination

- API/tool integrations for financial data sources such as custodian feeds, market data, and payment rails

- Vector databases for memory and context management across multi-step workflows

Is agentic AI the same as LLMs?

No. LLMs are reasoning cores that generate text based on prompts. Agentic AI adds goal-orientation, tool usage (API calls, database queries), persistent memory, and inter-agent coordination. This makes agent systems significantly more capable than standalone LLMs for complex operational workflows.

Which LLMs are best for agentic AI?

The right choice depends on your deployment requirements:

- Claude — strong instruction-following and low hallucination rates, preferred for regulated industries

- Llama 3 — ideal for on-premise deployments where complete data sovereignty is required

- GPT-4 — broadest ecosystem and tool integrations

Modern agent frameworks allow LLM swapping without redesigning the system, so selection can be driven by compliance needs rather than benchmarks alone.

What is an SDK in agentic AI?

An SDK (Software Development Kit) in agentic AI refers to frameworks like LangGraph, CrewAI, or AWS Strands Agents that provide building blocks—agent templates, tool connectors, orchestration logic—needed to build and deploy multi-agent systems without coding everything from scratch. These frameworks handle inter-agent communication, memory management, and tool integration, allowing developers to focus on business logic rather than infrastructure.