Poor data quality costs organizations an average of $12.9 million annually, according to Gartner research. For wealth management, the stakes are even higher: fragmented data doesn't just slow operations; it creates compliance risks, erodes client trust, and stalls AI adoption. The average wealth management firm uses 8-12 different software solutions, and one-third of RIAs now work with multiple custodians, each delivering data in proprietary formats on different schedules.

This article covers what cloud platform integration for data services means in a financial context, why wealth management faces uniquely complex integration challenges, the highest-value use cases that justify investment, the architecture decisions that determine success, and how to evaluate integration partners who understand capital markets.

TL;DR

- Cloud platform integration connects custodians, market data providers, CRM, and risk systems into a unified, governed data layer

- Wealth management integration differs from standard enterprise work — strict audit trails, sub-second latency demands, and heterogeneous data formats require a specialized approach

- High-impact use cases include portfolio aggregation, automated client reporting, compliance automation, and AI-powered analytics

- Any vendor shortlist should require capital markets domain expertise, SOC 2 Type 2 certification, and verified cloud-tier partnerships

- Hexaview Technologies brings 10+ years of capital markets experience to these integrations — recognized on the WealthTech 100 and DATATECH50 lists, with clients including LPL Financial and Addepar

What Is Cloud Platform Integration for Data Services in Wealth Management & FinTech?

Cloud platform integration for data services in financial services is the middleware or iPaaS layer that connects on-premises legacy systems and cloud applications through APIs, ETL pipelines, and event-driven architectures. This integration layer enables real-time or scheduled movement, transformation, and governance of financial data across the entire enterprise.

Financial data integration carries requirements that generic cloud tools aren't built for. Wealth management handles structured data (position files, NAV calculations, transaction records, account holdings) alongside unstructured inputs like client communications and research PDFs. Each type demands distinct transformation logic and compliance controls that general-purpose platforms don't provide natively.

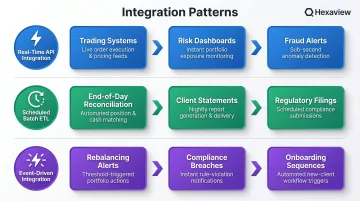

Three Integration Patterns Wealth Management Firms Need Simultaneously

Most financial services firms require all three patterns running in parallel:

Real-time API-based integration: Powers trading systems where latency is critical, live risk monitoring dashboards, and fraud detection alerts that require immediate action.

Scheduled batch ETL: Handles end-of-day reconciliation across custodians, monthly client statement generation, and regulatory filing preparation. Here, data completeness takes priority over speed.

Event-driven integration: Triggers automated workflows based on business conditions:

- Portfolio rebalancing alerts when drift exceeds defined thresholds

- Compliance breach notifications when trades violate firm policies

- Client onboarding sequences when new accounts are opened
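To make the event-driven pattern concrete, here is a minimal Python sketch of the first trigger above. A drift check publishes a rebalancing alert through a tiny in-process event bus. The names (`RebalanceAlert`, `check_drift`, `subscribe`, `publish`) and the 5-percentage-point threshold are hypothetical. A production system would publish to a message broker rather than an in-memory dictionary.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class RebalanceAlert:
    account_id: str
    asset_class: str
    target_weight: float
    actual_weight: float
    drift: float


# Hypothetical in-process event bus: handlers subscribe to an event class.
_subscribers: Dict[type, List[Callable[[object], None]]] = defaultdict(list)


def subscribe(event_type: type, handler: Callable[[object], None]) -> None:
    _subscribers[event_type].append(handler)


def publish(event: object) -> None:
    for handler in _subscribers[type(event)]:
        handler(event)


def check_drift(account_id: str, holdings: Dict[str, float],
                targets: Dict[str, float], threshold: float = 0.05) -> List[RebalanceAlert]:
    """Publish an alert for every asset class whose weight differs from its
    target by more than `threshold` (absolute weight, so 0.05 = 5 points)."""
    total = sum(holdings.values())
    if total <= 0:
        return []
    alerts = []
    for asset_class, target in targets.items():
        actual = holdings.get(asset_class, 0.0) / total
        drift = actual - target
        if abs(drift) > threshold:
            alert = RebalanceAlert(account_id, asset_class, target, actual, drift)
            publish(alert)
            alerts.append(alert)
    return alerts


# Usage: a workflow subscribes, then a position update triggers the check.
received: List[RebalanceAlert] = []
subscribe(RebalanceAlert, received.append)
check_drift("ACCT-001", {"equity": 70_000, "fixed_income": 30_000},
            {"equity": 0.60, "fixed_income": 0.40})
```

Keeping the drift check separate from whatever consumes the alert means new workflows, such as advisor notifications or trade proposals, can subscribe without changing the detection logic.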

Why Financial Data Integration Is More Complex Than Other Industries

Regulatory and Auditability Requirements

SEC Rule 17a-4 requires broker-dealers to maintain electronic records in non-rewriteable, non-erasable format with retention periods of 3-6 years depending on record type. MiFIR Article 25 mandates that investment firms keep all order and transaction data in machine-readable format for five years, including timestamps, client identifiers, and modification history.

This means integration architecture in financial services cannot rely on ad hoc data pipelines. Every data movement must include:

- Built-in data lineage tracking from source to destination

- Immutable audit logs recording every transformation step

- Role-based access controls limiting who can view or modify data

- Time-stamped records proving when data entered and left each system
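Hash chaining is one common way to make an audit log tamper-evident. Each entry stores the SHA-256 hash of the previous entry, so altering or removing a recorded step breaks verification. The sketch below is a minimal in-memory illustration. The `AuditLog` class and its field names are hypothetical, and hash chaining complements WORM storage rather than replacing it.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64


def _digest(body: Dict[str, Any]) -> str:
    # Canonical JSON (sorted keys) so identical content always hashes identically.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained log of data movements (illustrative only)."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    def append(self, actor: str, source: str, destination: str,
               transformation: str) -> Dict[str, Any]:
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "source": source,
            "destination": destination,
            "transformation": transformation,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; False if an entry was edited or removed mid-chain."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

The chain alone cannot detect truncation of the newest entries. One option is to write the latest hash periodically to immutable storage.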

Between FY2022 and FY2025, the SEC brought 95 enforcement actions resulting in $2.3 billion in penalties for books-and-records violations. Recordkeeping failures carry severe financial consequences.

Data Heterogeneity Across Dozens of Sources

The DTCC identified four structural pain points in financial market data: overlapping standards, missing metadata, siloed infrastructure, and lack of interoperability.

Wealth managers typically aggregate data from:

- Multiple custodians (Schwab, Fidelity, Pershing), each using proprietary CSV formats with different column orders

- Market data providers (Bloomberg, Refinitiv) delivering real-time quotes in XML or proprietary protocols

- Portfolio management systems (Addepar, Black Diamond, Orion) with different calculation methodologies

- CRM platforms (Salesforce Financial Services Cloud) requiring normalized household data

- Internal risk engines built on legacy infrastructure

Each custodian uses different terminology for the same concept. What Schwab calls "cash equivalents" might be "money market" at Fidelity and "sweep accounts" at Pershing. Integration architecture must map these variations to a single normalized schema without losing source-specific detail required for reconciliation.
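Here is a minimal Python sketch of that mapping step, using the illustrative labels from the paragraph above. The mapping table, the `CASH_EQUIVALENT` category, and `normalize_position` are hypothetical; real mappings come from each custodian's file specifications. The custodian's original label stays on the normalized record so reconciliation can trace back to it. Unmapped labels fail loudly instead of being guessed.

```python
from dataclasses import dataclass
from decimal import Decimal

# Hypothetical (custodian, source label) -> normalized category table.
CATEGORY_MAP = {
    ("schwab", "cash equivalents"): "CASH_EQUIVALENT",
    ("fidelity", "money market"): "CASH_EQUIVALENT",
    ("pershing", "sweep accounts"): "CASH_EQUIVALENT",
}


class UnmappedCategoryError(ValueError):
    """Raised when a custodian label has no mapping; route to an exception queue."""


@dataclass(frozen=True)
class NormalizedPosition:
    custodian: str
    account_id: str
    category: str          # firm-wide normalized category
    source_category: str   # custodian's original label, kept for reconciliation
    market_value: Decimal


def normalize_position(custodian: str, account_id: str,
                       source_category: str, market_value: Decimal) -> NormalizedPosition:
    key = (custodian.strip().lower(), source_category.strip().lower())
    if key not in CATEGORY_MAP:
        raise UnmappedCategoryError(
            f"No mapping for {custodian!r} label {source_category!r}")
    return NormalizedPosition(key[0], account_id, CATEGORY_MAP[key],
                              source_category, market_value)
```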

Alternative investments add another layer of complexity: private equity and hedge funds lack standardized reporting formats and deliver performance data on irregular, often lagged schedules rather than in daily feeds.

Conflicting Latency Requirements Within the Same Firm

Financial services firms face a unique tension: some use cases demand real-time data while others require complete, verified batch data.

Real-time needs:

- Live risk monitoring calculating Value-at-Risk as market conditions change

- Intraday trading data for advisors making allocation decisions

- Fraud detection alerts flagging suspicious transactions within seconds

Batch needs:

- End-of-day NAV calculations requiring all trades to settle before processing

- Monthly client performance statements needing fully reconciled data

- Regulatory submissions where accuracy matters more than speed

Integration architecture must support both without one degrading the other. Running heavy batch reconciliation jobs during market hours can slow API response times for live risk dashboards; conversely, prioritizing real-time streaming can delay overnight batch processing windows.

Legacy System Coexistence

Many wealth management firms run portfolio accounting systems built in the 1990s alongside modern cloud platforms like Salesforce Financial Services Cloud. Finance teams can spend up to 70% of their month-end close on manual reconciliation, largely because legacy systems cannot communicate with cloud platforms.

Integration must bridge on-premises infrastructure with cloud-native services without forcing a complete system replacement, a $10M+ capital expense most firms cannot justify. The architecture needs to handle:

- SFTP file transfers from legacy systems that don't support REST APIs

- Character encoding differences between mainframe outputs and cloud databases

- Timezone conversions when legacy systems run on server local time

- Data type mismatches when 30-year-old COBOL systems meet modern JSON schemas
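The last three items are easiest to see in code. The Python sketch below parses one record from a hypothetical 26-byte mainframe layout with three fields:

- an EBCDIC account ID
- an EBCDIC timestamp in the server's local time
- a COBOL packed-decimal (COMP-3) amount

The layout, the `America/New_York` server timezone, and the function names are assumptions. A real layout comes from the system's COBOL copybook, and retrieving the file over SFTP is not shown. The code uses Python's built-in `cp037` (EBCDIC) codec and `zoneinfo`, which needs time zone data on the host.

```python
from datetime import datetime, timezone
from decimal import Decimal
from zoneinfo import ZoneInfo


def decode_ebcdic(raw: bytes) -> str:
    """Decode an EBCDIC (code page 037) text field and drop COBOL space padding."""
    return raw.decode("cp037").rstrip()


def decode_comp3(raw: bytes, scale: int) -> Decimal:
    """Decode COBOL COMP-3: two digits per byte, sign in the final nibble."""
    nibbles = []
    for byte in raw:
        nibbles.extend((byte >> 4, byte & 0x0F))
    sign = nibbles.pop()
    if any(d > 9 for d in nibbles) or sign not in (0x0C, 0x0D, 0x0F):
        raise ValueError(f"invalid packed decimal: {raw.hex()}")
    value = Decimal("".join(str(d) for d in nibbles)).scaleb(-scale)
    return -value if sign == 0x0D else value


def local_to_utc(stamp: str, server_tz: str = "America/New_York") -> datetime:
    """Attach the legacy server's timezone to a naive timestamp, then convert to UTC."""
    naive = datetime.strptime(stamp, "%Y%m%d%H%M%S")
    return naive.replace(tzinfo=ZoneInfo(server_tz)).astimezone(timezone.utc)


def parse_record(record: bytes) -> dict:
    """Hypothetical layout: [0:8] account, [8:22] YYYYMMDDHHMMSS, [22:26] COMP-3 amount."""
    return {
        "account_id": decode_ebcdic(record[0:8]),
        "trade_time_utc": local_to_utc(decode_ebcdic(record[8:22])).isoformat(),
        # Exact decimal serialized as a JSON string rather than a binary float.
        "amount": str(decode_comp3(record[22:26], scale=2)),
    }
```

The parser emits the amount as a decimal string instead of a float. That avoids the silent rounding that occurs when COBOL fixed-point values are loaded into JSON numbers.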

Data Quality as a Compliance Issue

Those data-type mismatches and format gaps don't stay contained — in financial services, inaccurate data results in incorrect trade execution, misstated client accounts, or regulatory violations. The compliance stakes make data quality a first-order engineering concern, not an afterthought.

Manual processing of brokerage statements takes hours to days; AI-powered extraction tools can reduce this to under 10 minutes while improving accuracy. Integration pipelines need validation rules that catch errors before they reach downstream systems:

- Confirm portfolio holdings sum to reported account values

- Flag negative cash balances for immediate review

- Reconcile transaction counts across custodians and internal systems
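The three rules above translate into small, testable checks. Several details in this sketch are hypothetical:

- the `AccountSnapshot` shape
- the one-cent tolerance
- the assumption that the reported account value includes cash

Each check returns human-readable issues. That lets a pipeline quarantine the record instead of passing it downstream.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Dict, List


@dataclass(frozen=True)
class AccountSnapshot:
    account_id: str
    reported_value: Decimal        # custodian-reported total, assumed to include cash
    cash_balance: Decimal
    holdings: Dict[str, Decimal]   # security identifier -> market value


def validate_account(snap: AccountSnapshot,
                     tolerance: Decimal = Decimal("0.01")) -> List[str]:
    issues = []
    computed = sum(snap.holdings.values(), Decimal("0")) + snap.cash_balance
    if abs(computed - snap.reported_value) > tolerance:
        issues.append(f"{snap.account_id}: holdings + cash = {computed}, "
                      f"reported {snap.reported_value}")
    if snap.cash_balance < 0:
        issues.append(f"{snap.account_id}: negative cash balance {snap.cash_balance}")
    return issues


def reconcile_transaction_counts(custodian: Dict[str, int],
                                 internal: Dict[str, int]) -> List[str]:
    """Compare per-account transaction counts; an account missing on one side counts as 0."""
    issues = []
    for account_id in sorted(custodian.keys() | internal.keys()):
        c, i = custodian.get(account_id, 0), internal.get(account_id, 0)
        if c != i:
            issues.append(f"{account_id}: custodian {c} vs internal {i} transactions")
    return issues
```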

Hexaview Technologies has achieved a 97% improvement in data accuracy through governed integration pipelines with automated validation — built on the same principles described throughout this section.

High-Impact Use Cases for Cloud Data Integration in Wealth Management

Unified Portfolio Data Aggregation

Integrating custodian feeds from Schwab, Fidelity, and Pershing into a single normalized data layer gives advisors real-time consolidated views of client portfolios. Without integration, operations teams spend hours daily reconciling position files manually, comparing Excel exports, and investigating discrepancies.

A properly designed aggregation layer:

- Normalizes security identifiers (CUSIP, ISIN, ticker symbols) across custodians

- Reconciles cost basis calculations that differ by custodian methodology

- Flags missing positions or unexpected holdings for review

- Provides a single API endpoint for downstream systems to query portfolio data
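Identifier normalization usually starts by validating check digits and converting everything to a single key. The sketch below does two things:

- it validates a CUSIP with the standard modulus-10 "double-add-double" check digit
- it derives the matching ISIN: country code, then CUSIP, then a Luhn check digit

The function names are hypothetical. Mapping tickers to CUSIPs or ISINs still requires a reference data source, so treat this as an illustration rather than a security master.

```python
DIGITS = "0123456789"
CUSIP_SPECIAL = {"*": 36, "@": 37, "#": 38}


def _char_value(ch: str, allow_special: bool = False) -> int:
    """Digits map to 0-9 and letters A-Z to 10-35; CUSIPs may also contain * @ #."""
    if ch in DIGITS:
        return int(ch)
    if "A" <= ch <= "Z":
        return ord(ch) - ord("A") + 10
    if allow_special and ch in CUSIP_SPECIAL:
        return CUSIP_SPECIAL[ch]
    raise ValueError(f"invalid identifier character {ch!r}")


def cusip_check_digit(base8: str) -> int:
    total = 0
    for i, ch in enumerate(base8.upper()):
        v = _char_value(ch, allow_special=True)
        if i % 2 == 1:          # double every second character
            v *= 2
        total += v // 10 + v % 10
    return (10 - total % 10) % 10


def is_valid_cusip(cusip: str) -> bool:
    cusip = cusip.strip().upper()
    if len(cusip) != 9 or cusip[8] not in DIGITS:
        return False
    try:
        return cusip_check_digit(cusip[:8]) == int(cusip[8])
    except ValueError:
        return False


def isin_check_digit(base11: str) -> int:
    # Expand letters to two-digit numbers, then apply the Luhn algorithm.
    digits = "".join(str(_char_value(ch)) for ch in base11.upper())
    total = 0
    for i, d in enumerate(reversed(digits)):
        n = int(d) * 2 if i % 2 == 0 else int(d)   # rightmost digit is doubled
        total += n // 10 + n % 10
    return (10 - total % 10) % 10


def cusip_to_isin(cusip: str, country: str = "US") -> str:
    cusip = cusip.strip().upper()
    if not is_valid_cusip(cusip):
        raise ValueError(f"invalid CUSIP {cusip!r}")
    base = country.upper() + cusip
    return base + str(isin_check_digit(base))
```

For example, Apple's CUSIP `037833100` maps to ISIN `US0378331005`. Keying the aggregated layer on one validated identifier keeps position matching across custodians deterministic.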

30% of investors want to view all their investments from various sources within a single application, according to PCR Insights research. Unified aggregation makes this possible.

Automated Client Reporting Pipelines

Connecting portfolio data, CRM records, and document generation tools through a cloud integration layer allows firms to automate personalized client performance reports. Instead of analysts spending days pulling data from multiple systems, formatting Excel spreadsheets, and generating PDFs, an automated pipeline:

- Extracts portfolio performance data from the aggregated data layer

- Pulls client communication preferences and household relationships from CRM

- Applies firm-specific branding and commentary templates

- Generates PDFs and routes them to client portals or email

Turnaround time drops from days to hours, and manual data entry errors are largely eliminated because reports pull directly from source systems.
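The first two steps of that pipeline amount to a join between aggregated performance data and CRM preferences. In this minimal sketch, `ClientPrefs`, `build_report_jobs`, and the `portal`/`email` routing values are hypothetical, and PDF rendering is left out. Households with missing or incomplete CRM data go to a separate review list instead of being silently skipped.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class ClientPrefs:
    """Hypothetical subset of a CRM household record."""
    household_id: str
    delivery: str            # "portal" or "email"
    email: Optional[str]
    template: str            # firm branding / commentary template name


def build_report_jobs(performance: Dict[str, dict],
                      prefs: Dict[str, ClientPrefs]) -> Tuple[List[dict], List[str]]:
    """Join performance data with CRM preferences; return (jobs, needs_review)."""
    jobs, needs_review = [], []
    for household_id in sorted(performance):
        p = prefs.get(household_id)
        if p is None or p.delivery not in ("portal", "email") or (
                p.delivery == "email" and not p.email):
            needs_review.append(household_id)
            continue
        jobs.append({
            "household_id": household_id,
            "template": p.template,
            "data": performance[household_id],
            "route": p.email if p.delivery == "email" else "client-portal",
        })
    return jobs, needs_review
```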

Regulatory and Compliance Reporting Automation

The compliance data challenge is growing fast — the RegTech and compliance automation market was valued at $20.3 billion in 2024 and is projected to reach $72.4 billion by 2032.

Integrating trading systems, transaction records, and position data into a compliance reporting pipeline with built-in transformation rules enables automated generation of SEC, FINRA, or MiFID II submissions. The integration layer:

- Consolidates transaction data across all custodians and trading platforms

- Applies regulatory formatting rules specific to each submission type

- Generates complete audit trails showing data lineage from source to filing

- Archives submissions in immutable storage meeting SEC 17a-4 requirements
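On AWS, one way to implement the archiving step is Amazon S3 Object Lock in compliance mode. It prevents any user, including the root user, from deleting or altering a protected object version until its retain-until date.

The sketch makes these assumptions:

- the bucket was created with Object Lock enabled
- the bucket name, key, and six-year retention are placeholders; set retention per record type, within the 3-6 year range noted earlier

It uses the boto3 `put_object` parameters `ObjectLockMode`, `ObjectLockRetainUntilDate`, and `ChecksumAlgorithm`.

```python
from datetime import datetime, timezone
from typing import Any, Dict, Optional


def add_years(dt: datetime, years: int) -> datetime:
    try:
        return dt.replace(year=dt.year + years)
    except ValueError:      # Feb 29 in a non-leap target year
        return dt.replace(year=dt.year + years, day=28)


def worm_put_kwargs(bucket: str, key: str, body: bytes, retention_years: int,
                    now: Optional[datetime] = None) -> Dict[str, Any]:
    """Build put_object arguments for a compliance-mode, retention-locked upload."""
    now = now or datetime.now(timezone.utc)
    return {
        "Bucket": bucket,
        "Key": key,
        "Body": body,
        "ObjectLockMode": "COMPLIANCE",
        "ObjectLockRetainUntilDate": add_years(now, retention_years),
        # S3 requires an integrity checksum (or Content-MD5) on Object Lock uploads.
        "ChecksumAlgorithm": "SHA256",
    }


def archive_submission(bucket: str, key: str, body: bytes, retention_years: int = 6):
    import boto3  # imported here so the argument builder can be tested without AWS
    s3 = boto3.client("s3")
    return s3.put_object(**worm_put_kwargs(bucket, key, body, retention_years))
```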

RegTech platforms can cut compliance operations costs by as much as 80%, a return that more than justifies the integration investment.

Real-Time Risk Management and Market Data Integration

Streaming market data feeds from Bloomberg or Refinitiv into internal risk models through a real-time integration layer enables continuous VaR calculations, exposure monitoring, and automated breach alerts.

Overnight batch processing is the alternative — and by the time risk officers review morning reports, market conditions have already shifted. Real-time integration allows:

- Intraday VaR recalculation as positions and market prices change

- Immediate alerts when portfolio exposure exceeds risk limits

- Continuous stress testing against current market volatility

- Real-time compliance monitoring for concentration limits
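Intraday VaR recalculation can be sketched with historical simulation in three steps:

1. Apply each historical daily return to the current (live) position values.
2. Sort the resulting P&L scenarios.
3. Read off the loss at the chosen confidence level.

The function names, the 99% default, and the simple lower-quantile convention are assumptions for illustration. Production risk engines use documented conventions, much longer histories, and full revaluation for nonlinear instruments.

```python
from typing import Dict, List, Optional


def daily_returns(prices: List[float]) -> List[float]:
    return [prices[i] / prices[i - 1] - 1 for i in range(1, len(prices))]


def historical_var(positions: Dict[str, float],
                   price_history: Dict[str, List[float]],
                   live_prices: Dict[str, float],
                   confidence: float = 0.99) -> float:
    """One-day historical-simulation VaR, returned as a positive loss amount.

    positions: symbol -> quantity; price_history: aligned daily closes, oldest first;
    live_prices: latest intraday prices, so each tick can trigger a recalculation.
    """
    returns = {s: daily_returns(price_history[s]) for s in positions}
    n_scenarios = len(next(iter(returns.values())))
    pnl = sorted(
        sum(qty * live_prices[s] * returns[s][t] for s, qty in positions.items())
        for t in range(n_scenarios)
    )
    index = int((1 - confidence) * (n_scenarios - 1))   # simple lower empirical quantile
    return max(0.0, -pnl[index])


def check_var_limit(portfolio_id: str, var: float, limit: float) -> Optional[str]:
    """Return an alert message when VaR exceeds the risk limit, else None."""
    if var > limit:
        return f"{portfolio_id}: 1-day VaR {var:,.0f} exceeds limit {limit:,.0f}"
    return None
```

Because the function takes live prices as an argument, the streaming layer can call it on each price update and forward any non-empty `check_var_limit` result as a breach alert.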

AI and ML Analytics Pipelines

McKinsey reports that 62% of independent advisors intend to use AI for efficiency, with potential time savings of 20-30% per advisor. But AI models are only as good as the data feeding them.

Clean, integrated, real-time data is the prerequisite for:

- Client segmentation models identifying high-value prospects

- Predictive churn scoring flagging at-risk relationships

- Transaction anomaly detection catching fraud or operational errors

- Next-best-action engines recommending personalized advisor outreach

Firms that invest in integration infrastructure first tend to see better AI outcomes: models trained on clean, unified data produce churn predictions and segmentation scores that advisors actually trust and act on.
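Transaction anomaly detection shows why clean inputs matter. This sketch uses a robust modified z-score based on the median and the median absolute deviation (MAD). It is a simple statistical technique, not a trained ML model. The 3.5 cutoff is a commonly used rule of thumb, and the function name is hypothetical. A duplicated or mis-scaled feed record would surface here as a false alert, which is the data-quality dependency described above.

```python
import statistics
from typing import List


def flag_anomalies(amounts: List[float], threshold: float = 3.5) -> List[int]:
    """Return indexes of amounts whose modified z-score exceeds `threshold`.

    Modified z-score = 0.6745 * (x - median) / MAD, where MAD is the median
    absolute deviation; both are far less distorted by outliers than mean/stdev.
    """
    if not amounts:
        return []
    median = statistics.median(amounts)
    mad = statistics.median([abs(a - median) for a in amounts])
    if mad == 0:
        # At least half the values equal the median; treat any deviation as notable.
        return [i for i, a in enumerate(amounts) if a != median]
    return [i for i, a in enumerate(amounts)
            if abs(0.6745 * (a - median) / mad) > threshold]
```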

Building a Scalable, Compliant Cloud Integration Architecture for Financial Services

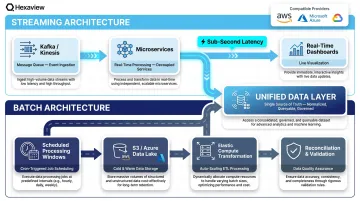

Real-Time vs. Batch Processing Architecture Design

In a mature financial services integration architecture, event-driven streaming and scheduled batch ETL must coexist within the same platform with clear design boundaries.

Streaming architecture:

- Separate compute resources dedicated to real-time processing

- Message queues (Kafka, AWS Kinesis) buffering market data feeds

- Microservices subscribing to specific event types (trades, alerts, breaches)

- Sub-second latency targets for critical workflows

Batch architecture:

- Scheduled processing windows during off-market hours

- Data lake storage (Amazon S3, Azure Data Lake) accumulating daily feeds

- Transformation jobs running on elastic compute that scales up overnight

- Reconciliation logic validating completeness before downstream use

AWS reference architecture for financial services demonstrates hybrid designs processing 103 billion data points in combined batch/streaming configurations with sub-10-second query performance.

API Management, Encryption, and Access Governance

All financial data movement must route through a secure API gateway with:

- TLS 1.2 or higher for data in transit - PCI DSS 4.0 Requirement 4, for example, mandates strong cryptography when cardholder data crosses open, public networks

- AES-256 encryption for data at rest - industry standard for stored financial data

- Token-based authentication - OAuth 2.0 or similar preventing credential exposure

- Role-based access controls - limiting who can call which APIs and view what data

- API call logging - every request logged with timestamp, user identity, and payload hash

SOC 2 auditors expect evidence that system access and activity are logged and attributable to specific users. This logging layer is what turns a secure system into one whose compliance can be demonstrated over time.
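The access-control and logging requirements above can be enforced at a single choke point. In this minimal sketch, a hypothetical `governed` decorator checks a role-to-endpoint permission table and logs every call, whether allowed or denied. Each log entry records:

- a UTC timestamp
- the caller's identity and role
- a SHA-256 hash of the payload, which keeps sensitive data out of the log

The sketch assumes identity and role have already been established from a validated OAuth 2.0 token at the gateway. Token validation is not shown, and the role names and endpoints are placeholders.

```python
import functools
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Callable, Dict, List

# Hypothetical role -> permitted endpoint table.
ROLE_PERMISSIONS: Dict[str, set] = {
    "advisor": {"get_positions"},
    "operations": {"get_positions", "post_cash_adjustment"},
}
API_CALL_LOG: List[Dict[str, Any]] = []


class PermissionDenied(Exception):
    pass


def governed(endpoint: str) -> Callable:
    def decorator(func: Callable) -> Callable:
        @functools.wraps(func)
        def wrapper(user: str, role: str, payload: Dict[str, Any]) -> Any:
            allowed = endpoint in ROLE_PERMISSIONS.get(role, set())
            # Log before enforcing, so denied attempts are attributable too.
            API_CALL_LOG.append({
                "timestamp": datetime.now(timezone.utc).isoformat(),
                "user": user,
                "role": role,
                "endpoint": endpoint,
                "payload_sha256": hashlib.sha256(
                    json.dumps(payload, sort_keys=True).encode("utf-8")).hexdigest(),
                "allowed": allowed,
            })
            if not allowed:
                raise PermissionDenied(f"role {role!r} may not call {endpoint}")
            return func(user, role, payload)
        return wrapper
    return decorator


@governed("get_positions")
def get_positions(user: str, role: str, payload: Dict[str, Any]) -> Dict[str, Any]:
    return {"account_id": payload["account_id"], "positions": []}  # stub handler


@governed("post_cash_adjustment")
def post_cash_adjustment(user: str, role: str, payload: Dict[str, Any]) -> Dict[str, Any]:
    return {"status": "accepted", "account_id": payload["account_id"]}  # stub handler
```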

Elastic, Cloud-Native Infrastructure for Peak-Load Resilience

With API governance handling secure data movement, the infrastructure underneath must scale with the volume flowing through it. Cloud-native deployment on AWS, Azure, or GCP enables wealth management firms to scale pipeline capacity elastically during:

- Market volatility events when trade volumes spike 10x

- Quarter-end reporting cycles that require every client to be processed within 48 hours

- Client onboarding surges when acquisitions add thousands of accounts

Without elastic infrastructure, firms over-provision year-round, paying for peak capacity even while servers sit idle most of the time. Cloud-native architecture reduces that waste by scaling up when demand spikes and back down when it subsides.
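The scaling decision itself is simple arithmetic. This hypothetical helper sizes a worker pool from three inputs:

- the current backlog
- per-worker throughput
- the time left in the processing window

It then clamps the result between a floor and a ceiling. The throughput figure and limits are placeholders. A managed autoscaler, such as a target-tracking policy, would apply a similar calculation continuously.

```python
import math


def desired_workers(backlog: int, per_worker_per_hour: float, hours_left: float,
                    min_workers: int = 1, max_workers: int = 200) -> int:
    """Workers needed to clear `backlog` items before the window closes, clamped."""
    if backlog <= 0:
        return min_workers
    if hours_left <= 0 or per_worker_per_hour <= 0:
        return max_workers  # window already missed or no throughput: scale to the ceiling
    needed = math.ceil(backlog / (per_worker_per_hour * hours_left))
    return max(min_workers, min(max_workers, needed))
```

Take a quarter-end run of 50,000 statements at a hypothetical 100 statements per worker-hour. Finishing within 48 hours calls for 11 workers, and outside the cycle the pool falls back to the minimum.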

Data Governance and Lineage as Architectural Requirements

In financial services, data governance cannot be a bolt-on feature added after integration is live. It must be embedded from day one.

A governed integration architecture tracks the full lineage of every data element:

- Source system - which custodian, market data provider, or internal platform

- Transformation applied - normalization rules, currency conversions, aggregation logic

- Destination - which database, report, or downstream system received the data

- Access history - who queried the data and when

When auditors ask "where did this number on the client statement come from?" lineage tracking provides a complete, documented answer — every source, every transformation, every downstream destination.

The same capability pays off operationally. When a portfolio value looks wrong, lineage tracing pinpoints exactly where the error entered the pipeline, cutting debugging time from days to hours.
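Here is a minimal sketch of value-level lineage in Python. Each value carries an immutable tuple of steps (source, transformations, destination, access), and `explain()` produces the answer an auditor asks for. The `TracedValue` class, the EUR-to-USD rate, and the system names are hypothetical. A production platform would more likely record lineage in a metadata catalog than on each value.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Callable, Optional, Tuple


@dataclass(frozen=True)
class LineageStep:
    kind: str      # "source" | "transform" | "destination" | "access"
    name: str
    detail: str = ""


@dataclass(frozen=True)
class TracedValue:
    value: Decimal
    steps: Tuple[LineageStep, ...]

    @classmethod
    def from_source(cls, value: Decimal, system: str, record_ref: str) -> "TracedValue":
        return cls(value, (LineageStep("source", system, record_ref),))

    def _with(self, step: LineageStep, value: Optional[Decimal] = None) -> "TracedValue":
        # Every operation returns a new object, so recorded history cannot be rewritten.
        return TracedValue(self.value if value is None else value, self.steps + (step,))

    def transform(self, name: str, fn: Callable[[Decimal], Decimal],
                  detail: str = "") -> "TracedValue":
        return self._with(LineageStep("transform", name, detail), fn(self.value))

    def deliver(self, destination: str) -> "TracedValue":
        return self._with(LineageStep("destination", destination))

    def accessed_by(self, user: str, when: str) -> "TracedValue":
        return self._with(LineageStep("access", user, when))

    def explain(self) -> str:
        return "\n".join(f"{s.kind:<11} {s.name} {s.detail}".rstrip() for s in self.steps)


statement_value = (
    TracedValue.from_source(Decimal("1000.00"), "pershing_positions", "row=412")
    .transform("fx_eur_to_usd", lambda v: v * Decimal("1.08"), "rate=1.08")
    .deliver("Q1 client statement")
    .accessed_by("auditor@firm.example", "2024-04-15T14:02:00Z")
)
print(statement_value.explain())
```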

Hexaview Technologies implements data lineage and access controls as standard architecture components on financial services engagements — not as optional add-ons — because clients like LPL Financial and Addepar operate in environments where auditability is non-negotiable.

What to Look for in a Cloud Integration Partner for Wealth Management

Domain Expertise in Capital Markets and WealthTech Comes First

A generic integration vendor without financial services experience will not understand:

- Custodian data formats and reconciliation requirements

- Portfolio accounting workflows and calculation methodologies

- Compliance implications of a failed data pipeline or missed audit trail

Look for partners with documented track records in wealth management and FinTech, including relevant industry recognitions. Hexaview Technologies has:

- 10+ years of capital markets experience building solutions for wealth management and asset management firms

- WealthTech 100 recognition - selected from over 1,300 evaluated companies as one of the world's most innovative WealthTech firms by FinTech Global's expert panel

- DATATECH50 recognition - named among the top 50 data management solution providers in financial services

- Proven delivery for LPL Financial and Addepar - demonstrating capability to work with leading wealth management platforms

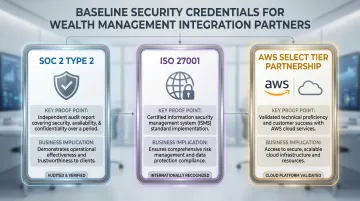

Security Certifications and Cloud-Tier Partnerships as Baseline Requirements

For any integration partner handling sensitive financial data, the following are minimum requirements, not differentiators:

| Credential | What It Proves | What It Means for You |
|---|---|---|
| SOC 2 Type 2 | Security controls operated effectively over 6-12 months | Verified API access logging, encryption in transit/at rest, and audit trail protection |
| ISO 27001 | Systematic Information Security Management System in place | Risk assessment processes and documented controls, not just a one-time audit |
| AWS Select Tier Partner | Minimum four accredited staff, documented customer engagements, certified AWS deployments | Technical depth to architect and deliver on AWS infrastructure at scale |

Hexaview Technologies holds all three credentials, meeting baseline security and technical requirements for wealth management engagements.

Scalability and Delivery Model

Beyond credentials, the right partner fits how your team actually works. Look for:

- Tech stack flexibility — works with Salesforce, custodian APIs, legacy on-premises systems, and modern cloud platforms without forcing unnecessary replacements

- Built-in scalability — integration architecture that handles 10x growth in AUM or client accounts without a full rebuild

- Collaborative delivery — takes continuous feedback, incorporates last-minute changes, and maintains transparent communication throughout the engagement

Hexaview operates a hybrid onsite-offshore model, pairing onsite leads with offshore development teams. This structure keeps projects on time and within budget while staying responsive as requirements evolve.

Frequently Asked Questions

What is cloud integration for data services?

Cloud integration for data services is the process of connecting cloud and on-premises systems to enable automated movement, transformation, and synchronization of data across platforms using APIs, ETL pipelines, or event-driven architectures. This gives organizations unified, up-to-date data for analytics, reporting, and operations.

Which cloud integration platform is best for data in wealth management?

The best platform depends on your data sources, compliance requirements, and latency needs. Wealth management firms should prioritize platforms or partners with domain expertise in capital markets, SOC 2 Type 2 certification, and proven connectivity to custodian and market data systems, not generic enterprise integration tools built for other industries.

How does cloud platform integration support regulatory compliance in wealth management?

A well-designed integration layer embeds audit trails, data lineage tracking, and access logging at every transformation step. This makes it possible to demonstrate data provenance for regulatory submissions and respond to audits without manual reconstruction, supporting obligations under SEC Rule 17a-4, MiFIR Article 25, and GDPR Article 5.

What data sources are typically integrated in a wealth management cloud platform?

Most implementations connect a range of systems, each requiring standardized formatting and reconciliation:

- Custodian data feeds (Schwab, Fidelity, Pershing)

- Market data providers (Bloomberg, Refinitiv)

- Portfolio management systems (Addepar, Black Diamond, Orion)

- CRM platforms (Salesforce), risk engines, trading systems, and compliance reporting tools

What is the difference between real-time and batch data integration in financial services?

Real-time integration handles continuous data flows needed for live trading, risk monitoring, and fraud alerts where milliseconds matter. Batch integration handles scheduled, high-volume transfers used for end-of-day reconciliation, NAV calculations, and regulatory filings where completeness matters more than speed. Most wealth management firms need both.

How long does a cloud integration implementation typically take for a FinTech or wealth management firm?

Most implementations deliver initial production pipelines in 8-16 weeks with an experienced partner, versus 6-12 months for custom development from scratch. The final timeline depends on the number of data sources, transformation complexity, and compliance constraints; partners with pre-built financial data connectors can shorten it considerably.